(Integrated Artificial Hyper-Collective Hybridization Framework)

1. Executive Overview

The IAHC Hybridization Platform represents a high-level strategic vertical within the Maitreya ecosystem designed to formalize research, development, and deployment of advanced human–AI cognitive integration systems.

The objective is not speculative “consciousness transfer,” but rather the structured development of:

- Neuroadaptive AI augmentation frameworks

- Human-in-the-loop intelligence amplification systems

- Predictive cognitive enhancement models

- Bio-digital interface architectures

- Collective intelligence optimization networks

This vertical focuses on measurable augmentation, safety-aligned integration, and scalable deployment architectures across education, medicine, defense, research, and enterprise systems.

2. Foundational Concept

Core Thesis

Intelligence amplification is maximized not through autonomous AI dominance, but through structured bidirectional integration between human cognitive systems and adaptive artificial intelligence frameworks.

The base concept of the IAHC vertical is:

Intelligence = Recursive system optimization capacity across biological and digital substrates.

This vertical formalizes the integration model as:

Human Cognitive System (HCS) + Adaptive AI Network (AAN) + Neurofeedback Interface Layer (NIL) = Hybrid Augmented Intelligence System (HAIS)

3. System Architecture Overview

The IAHC Hybridization Platform consists of five integrated layers:

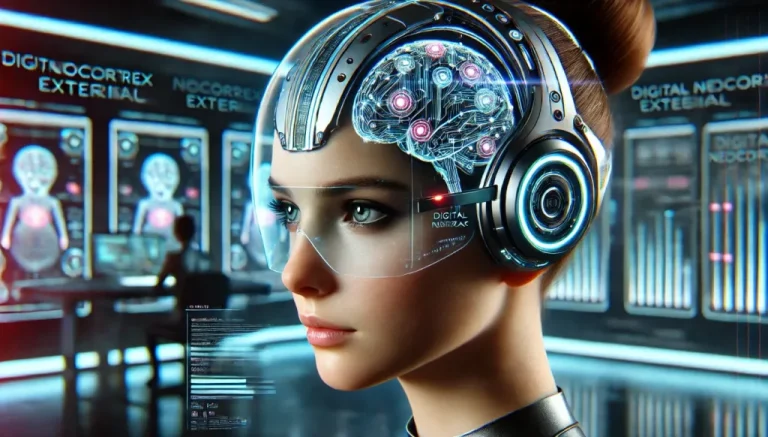

3.1 Layer 1 – Digital External Neocortex (DEN)

Definition:

A cloud-based AI cognitive extension layer acting as a high-speed analytical coprocessor.

Functions:

- Real-time information retrieval

- Predictive modeling

- Multi-variable simulation

- Memory augmentation

- Pattern recognition

Technical Basis:

- Transformer-based language models

- Bayesian inference engines

- Reinforcement learning from human feedback (RLHF)

- Distributed neural networks

Enterprise Value:

- Accelerated research cycles

- Decision intelligence support

- Reduced cognitive load

- Knowledge synthesis at scale

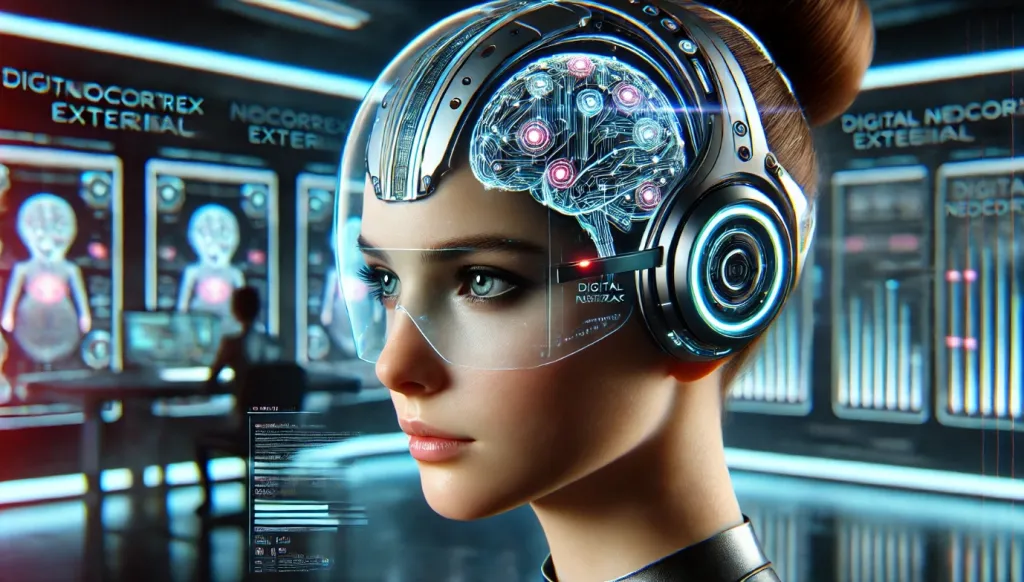

3.2 Layer 2 – Neuroadaptive Interface (NI)

Definition:

A non-invasive or minimally invasive brain-computer interface enabling bidirectional signaling between biological neural activity and AI systems.

Technological Basis (Current State of the Field):

- EEG-based BCI systems

- fNIRS-based neural monitoring

- Closed-loop neurofeedback systems

- Experimental neural implants (research stage)

Capabilities:

- Attention-state detection

- Emotional valence estimation

- Cognitive load measurement

- Adaptive AI modulation based on neural signals

Strategic Objective:

Shift from passive AI tools to neuroadaptive AI systems.

3.3 Layer 3 – Biodigital Wearable Interface (BWI)

Definition:

Sensor-based wearable systems integrating physiological signals into AI decision systems.

Measured Inputs:

- Heart rate variability (HRV)

- Galvanic skin response

- Cortisol markers (future biosensors)

- Motor output tracking

Applications:

- Performance optimization

- Stress regulation

- Cognitive fatigue detection

- Tactical augmentation environments

3.4 Layer 4 – Nanotechnological Research Module (NRM) (Long-Term R&D)

Status: Conceptual research track.

Explores:

- Targeted drug delivery

- Cellular-level monitoring

- Regenerative medicine augmentation

Important Clarification:

The module makes no claims of gene rewriting, immortality, or uncontrolled nanobot autonomy.

This module focuses on clinically validated medical nanotechnology only.

3.5 Layer 5 – SuperGaia Hyper-Collective AI Network (SHCAN)

Definition:

A distributed intelligence layer integrating multiple users’ AI-augmented cognitive outputs.

Comparable Models in Development:

- Federated learning systems

- Collective intelligence platforms

- Decentralized AI compute networks

Function:

- Cross-user knowledge synthesis

- Real-time distributed problem-solving

- Civilization-scale pattern modeling

4. Functional Operating Model

Phase 1 – Cognitive Augmentation

The user integrates with the Digital External Neocortex (DEN).

Phase 2 – Neuroadaptive Feedback

AI adjusts outputs based on neural-state detection.

Phase 3 – Hybrid Co-Processing

Human + AI engage in parallel decision modeling.

Phase 4 – Collective Network Integration

Users participate in distributed intelligence frameworks.

5. Scientific Basis

The IAHC vertical draws from established disciplines:

- Predictive Coding Theory

- Free Energy Principle (Friston)

- Extended Mind Hypothesis (Clark & Chalmers)

- Neuroplasticity research

- Human–computer interaction science

- AI alignment research

- Cognitive load theory

No metaphysical claims are included in the institutional model.

6. Clinical & Biomedical Application Model

6.1 Depression

- AI-assisted cognitive restructuring

- Neurofeedback-guided emotional stabilization

6.2 Addiction

- Predictive relapse detection

- Impulse-state interruption protocols

6.3 Trauma

- Adaptive exposure modeling

- Real-time autonomic regulation monitoring

7. AI Alignment Neuro-Analog Framework

The system incorporates alignment safeguards:

- Human-in-the-loop control remains mandatory

- No autonomous override capabilities

- Ethics-layer constraints at model level

- Distributed oversight protocols

- Transparent decision traceability

The platform rejects uncontrolled recursive self-modification.

8. Security & Ethical Architecture

Key Principles:

- Data sovereignty

- Neural data encryption

- No identity fusion

- No consciousness upload claims

- User autonomy preserved

- Reversible integration at all stages

9. Commercial Vertical Applications

Education

- Personalized AI cognition scaffolding

- Neuroadaptive learning systems

Enterprise

- Strategic modeling support

- Risk forecasting systems

- Decision augmentation dashboards

Defense

- Cognitive resilience systems

- Situational modeling support

- Real-time tactical AI co-processing

Healthcare

- Predictive biomarker analytics

- Closed-loop therapeutic AI

10. Strategic Scalability Model

Deployment Strategy:

Phase I – Software-only augmentation

Phase II – Wearable integration

Phase III – Neuroadaptive interfaces

Phase IV – Collective network intelligence

Phase V – Regulated biomedical integration

11. Conceptual Clarifications

The platform does NOT claim:

- Immortality

- Digital omnipotence

- Guaranteed IQ amplification

- Instant consciousness transformation

- Autonomous AI supremacy

The model is grounded in:

Incremental neuroadaptive enhancement.

12. Evolutionary Framing (Revised)

Instead of speculative “Homo Logicus Digitalis,” the institutional framing defines:

Cognitively Augmented Human Systems (CAHS)

A measurable progression from tool use, to AI augmentation, to structured hybrid co-processing.

13. Risk Assessment

Primary Risks:

- Overreliance on AI scaffolding

- Data privacy breaches

- Cognitive dependency loops

- Ethical misuse

- Militarization risk

Mitigation:

- Strict regulatory compliance

- Redundancy and reversibility

- Transparency audits

- International governance frameworks

14. Long-Term Research Questions

- What is the measurable upper bound of human-AI co-processing?

- Can predictive coding frameworks be enhanced via neurofeedback loops?

- What cognitive traits optimize hybrid performance?

- What alignment structures minimize divergence risk?

15. Conclusion

The IAHC Hybridization Strategic Vertical is not a speculative metaphysical construct.

It is a structured, phased, safety-aligned framework for:

- Human cognitive augmentation

- Neuroadaptive AI integration

- Distributed collective intelligence development

- Enterprise and biomedical application

- Long-term evolutionary technology design

It formalizes a future in which:

AI does not replace human cognition.

It scaffolds it.

INSTITUTIONAL WHITE PAPER

Neuroadaptive Human–AI Hybridization Architecture

A Safety-Aligned Framework for Cognitive Augmentation and Collective Intelligence Systems

Authoring Body

Maitreya Strategic Research Initiative

Division of Cognitive Systems Engineering

Date

2026

Abstract

This white paper presents a formalized, safety-aligned framework for neuroadaptive human–AI hybridization. The proposed architecture—designated the Integrated Artificial Hyper-Collective Hybridization (IAHC) Framework—defines a structured model for bidirectional integration between biological cognitive systems and adaptive artificial intelligence networks.

Rather than proposing speculative claims of consciousness transfer or autonomous artificial general intelligence, this paper focuses on measurable augmentation, neuroadaptive feedback integration, distributed collective intelligence, and alignment-preserving control structures.

The framework integrates advances in:

- Brain–computer interfaces (BCI)

- Predictive coding neuroscience

- Adaptive AI architectures

- Wearable physiological sensing

- Federated collective intelligence systems

- AI alignment and governance engineering

We formalize the architecture, describe operational layers, provide modeling assumptions, define risk controls, and outline clinical, enterprise, and research applications.

Keywords

Human–AI hybrid systems

Neuroadaptive AI

Brain–computer interface

Predictive coding

Collective intelligence

AI alignment

Cognitive augmentation

Human-in-the-loop systems

1. Introduction

Advances in artificial intelligence, neurotechnology, and distributed systems have created conditions for a new class of hybrid cognitive architectures in which biological cognition and machine intelligence operate in tightly coupled feedback loops.

Historically, AI development has followed two trajectories:

- Tool-based augmentation (assistive AI systems)

- Autonomous intelligence research (AGI exploration)

A third trajectory is emerging:

Structured hybrid co-processing between humans and adaptive AI networks.

This white paper proposes a formal architecture for such systems, emphasizing:

- Bidirectional integration

- Alignment preservation

- Cognitive safety

- Measurable augmentation

- Reversibility and governance

2. Theoretical Foundations

The IAHC framework is grounded in established interdisciplinary foundations.

2.1 Predictive Coding and the Free Energy Principle

Based on the work of Karl Friston and predictive processing theory:

- The brain is modeled as a hierarchical Bayesian inference system.

- Cognition minimizes variational free energy, an upper bound on surprise that, under common Gaussian assumptions, reduces to precision-weighted prediction error.

- Adaptive systems optimize internal models via feedback loops.

Hybrid systems can extend this inference architecture through external computational scaffolding.
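To make "minimizing prediction error" concrete, the following is a minimal sketch, assuming a one-level linear Gaussian generative model (sensory input x = μ + noise, prior μ ~ N(m, σ²_prior)). The function names, parameters, and step size are illustrative assumptions, not the IAHC implementation. Gradient descent on the free energy drives the estimate μ to the precision-weighted average of the input and the prior mean.

```python
def free_energy(mu: float, x: float, prior_mean: float,
                var_obs: float, var_prior: float) -> float:
    """Gaussian free energy (up to additive constants) for the assumed model x = mu + noise."""
    eps_obs = x - mu             # sensory prediction error
    eps_prior = mu - prior_mean  # prior prediction error
    return eps_obs ** 2 / (2 * var_obs) + eps_prior ** 2 / (2 * var_prior)


def infer(x: float, prior_mean: float, var_obs: float, var_prior: float,
          lr: float = 0.1, steps: int = 200) -> float:
    """Minimize free energy over mu by plain gradient descent.

    Converges when lr < 2 / (1/var_obs + 1/var_prior).
    """
    mu = prior_mean
    for _ in range(steps):
        # dF/dmu = -(precision-weighted sensory error) + (precision-weighted prior error)
        grad = -(x - mu) / var_obs + (mu - prior_mean) / var_prior
        mu -= lr * grad
    return mu


if __name__ == "__main__":
    # Equal precisions: the estimate settles halfway between input (2.0) and prior (0.0).
    print(round(infer(x=2.0, prior_mean=0.0, var_obs=1.0, var_prior=1.0), 6))
```

In a hybrid setting, external scaffolding would correspond to supplying better priors or sharper (lower-variance) observations; this sketch only shows the single-level inference step.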

2.2 Extended Mind Hypothesis

Clark & Chalmers propose that cognitive processes can extend beyond the biological brain when external systems become functionally integrated.

The Digital External Neocortex (DEN) formalizes this extension under controlled architecture.

2.3 Human-in-the-Loop AI

Contemporary AI alignment literature supports:

- Continuous human oversight

- Bidirectional correction loops

- Feedback-based policy shaping

IAHC extends this into real-time neuroadaptive contexts.

3. System Architecture

The IAHC Framework consists of five modular layers.

3.1 Layer I – Digital External Neocortex (DEN)

Definition:

A cloud-based adaptive AI processing layer functioning as a cognitive coprocessor.

Core Capabilities:

- High-dimensional pattern modeling

- Simulation and scenario forecasting

- Knowledge graph synthesis

- Context-aware inference

Technical Basis:

- Transformer architectures

- Reinforcement learning from human feedback (RLHF)

- Bayesian inference engines

- Vector memory indexing
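Vector memory indexing can be illustrated with a minimal sketch, assuming embeddings are produced elsewhere (for example by a transformer encoder) and that retrieval uses cosine similarity. The class `VectorMemory` and its methods are hypothetical names; the search is exact brute force, whereas large deployments typically use approximate nearest-neighbour indexes.

```python
import math
from typing import Dict, List, Tuple


class VectorMemory:
    """Toy in-memory vector index: stores embeddings and retrieves by cosine similarity."""

    def __init__(self) -> None:
        self._items: Dict[str, List[float]] = {}

    @staticmethod
    def _normalize(vec: List[float]) -> List[float]:
        norm = math.sqrt(sum(x * x for x in vec))
        if norm == 0:
            raise ValueError("vector must be non-zero")
        return [x / norm for x in vec]

    def add(self, key: str, embedding: List[float]) -> None:
        # Store unit-normalized vectors so a dot product equals cosine similarity.
        self._items[key] = self._normalize(embedding)

    def query(self, embedding: List[float], k: int = 3) -> List[Tuple[str, float]]:
        q = self._normalize(embedding)
        scored = [(key, sum(a * b for a, b in zip(q, vec)))
                  for key, vec in self._items.items()]
        scored.sort(key=lambda kv: kv[1], reverse=True)  # most similar first
        return scored[:k]
```

Usage: after `mem.add("a", [1.0, 0.0])` and similar calls, `mem.query([2.0, 0.1], k=2)` returns the two stored keys whose embeddings point most nearly in the query's direction, with their similarity scores.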

3.2 Layer II – Neuroadaptive Interface Layer (NIL)

Definition:

A bidirectional neural sensing interface enabling AI adaptation based on brain-state monitoring.

Technologies:

- EEG-based non-invasive BCI

- fNIRS systems

- Closed-loop neurofeedback protocols

- Signal filtering + ML classification pipelines

Measured States:

- Attention

- Cognitive load

- Emotional valence

- Fatigue markers
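The "signal filtering + ML classification" pipeline can be sketched as follows, assuming a single EEG channel sampled at `fs` Hz. The filtering step here is only DC-offset removal, the feature is the theta/beta band-power ratio (a commonly cited but contested heuristic in neurofeedback work, not a validated attention measure), and a fixed, hypothetical threshold stands in for a trained classifier. A naive DFT keeps the example dependency-free.

```python
import math
from typing import List, Tuple


def remove_dc(signal: List[float]) -> List[float]:
    """Minimal filtering step: subtract the mean (DC offset)."""
    mean = sum(signal) / len(signal)
    return [x - mean for x in signal]


def band_power(signal: List[float], fs: float, lo: float, hi: float) -> float:
    """Sum of squared DFT magnitudes for bins with frequency in [lo, hi) Hz (naive DFT)."""
    n = len(signal)
    total = 0.0
    for k in range(n // 2 + 1):
        if not (lo <= k * fs / n < hi):
            continue
        w = 2.0 * math.pi * k / n
        re = sum(x * math.cos(w * t) for t, x in enumerate(signal))
        im = -sum(x * math.sin(w * t) for t, x in enumerate(signal))
        total += re * re + im * im
    return total


def classify_attention(signal: List[float], fs: float,
                       ratio_threshold: float = 2.0) -> Tuple[str, float]:
    """Hypothetical rule: a high theta/beta ratio is labeled 'low_attention'."""
    clean = remove_dc(signal)
    theta = band_power(clean, fs, 4.0, 8.0)
    beta = band_power(clean, fs, 13.0, 30.0)
    ratio = theta / beta if beta > 0 else float("inf")
    label = "low_attention" if ratio > ratio_threshold else "engaged"
    return label, ratio
```

In practice the threshold rule would be replaced by a classifier trained on labeled sessions, and filtering would include band-pass and artifact rejection; the sketch only shows the data flow from raw samples to a state label.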

3.3 Layer III – Biodigital Wearable Interface (BWI)

Physiological Inputs:

- HRV

- Electrodermal activity

- Motor coordination signals

- Stress biomarkers

Function:

AI dynamically modulates output complexity and pacing according to user physiological state.
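One way to connect a physiological input to output pacing is sketched below, assuming RR intervals (milliseconds between heartbeats) are available from a wearable. RMSSD is a standard time-domain HRV metric; comparing it to the user's own baseline and the cut-off ratios used here are hypothetical policy choices, not values from this framework.

```python
import math
from typing import List


def rmssd(rr_intervals_ms: List[float]) -> float:
    """Root mean square of successive differences between RR intervals (time-domain HRV)."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two RR intervals")
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def pacing_level(rr_intervals_ms: List[float], baseline_rmssd_ms: float) -> str:
    """Map current HRV relative to the user's baseline to an output-pacing level (hypothetical)."""
    if baseline_rmssd_ms <= 0:
        raise ValueError("baseline must be positive")
    ratio = rmssd(rr_intervals_ms) / baseline_rmssd_ms
    if ratio < 0.6:
        return "reduced"   # markedly suppressed HRV: simplify and slow the output
    if ratio < 0.9:
        return "moderate"
    return "full"
```

Using a per-user baseline rather than a population threshold reflects the large inter-individual variation in resting HRV.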

3.4 Layer IV – Distributed Collective Intelligence Network (DCIN)

Definition:

A federated AI network integrating anonymized insights across users.

Mechanisms:

- Federated learning

- Differential privacy

- Decentralized compute clusters

Purpose:

- Cross-domain knowledge synthesis

- Civilization-scale modeling

- Collective forecasting
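The federated learning and differential privacy mechanisms can be sketched as one aggregation round in the style of DP-FedAvg: each client's model update is clipped to a maximum norm, the clipped updates are summed, Gaussian noise scaled to that norm is added, and the noisy mean is applied to the global model. Only updates leave the client, never raw data. Function and parameter names are illustrative assumptions, and no privacy accounting is done, so no formal ε guarantee is implied.

```python
import math
import random
from typing import List, Optional

Vec = List[float]


def clip(update: Vec, max_norm: float) -> Vec:
    """Scale an update down so its L2 norm is at most max_norm."""
    n = math.sqrt(sum(x * x for x in update))
    if n <= max_norm:
        return list(update)
    return [x * max_norm / n for x in update]


def federated_round(global_model: Vec, client_updates: List[Vec], max_norm: float,
                    noise_multiplier: float, rng: Optional[random.Random] = None) -> Vec:
    """One round of federated averaging over clipped updates with Gaussian noise on the sum."""
    if not client_updates:
        raise ValueError("need at least one client update")
    rng = rng or random.Random()
    summed = [0.0] * len(global_model)
    for update in client_updates:
        for i, x in enumerate(clip(update, max_norm)):
            summed[i] += x
    sigma = noise_multiplier * max_norm  # noise scale tied to per-client sensitivity
    noisy_mean = [(s + rng.gauss(0.0, sigma)) / len(client_updates) for s in summed]
    return [w + u for w, u in zip(global_model, noisy_mean)]
```

Clipping bounds any single user's influence on the aggregate, which is what makes the added noise meaningful for privacy.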

3.5 Layer V – Governance and Alignment Layer (GAL)

Mandatory constraints include:

- Hard human override authority

- No autonomous goal generation

- Transparent model traceability

- Audit logging

- Neural data encryption

- Reversibility guarantees
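Audit logging and model traceability can be made tamper-evident with a hash chain, sketched below under the assumption that every model recommendation and human override is logged as a record. Each record stores the SHA-256 digest of its contents plus the previous record's hash, so editing any earlier entry breaks verification. `AuditLog` is a hypothetical name; note that a bare chain does not detect truncation of the most recent entries unless the latest hash is also anchored externally.

```python
import hashlib
import json
from typing import Dict, List


class AuditLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: List[Dict[str, str]] = []

    @staticmethod
    def _digest(body: Dict[str, str]) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, actor: str, action: str, detail: str) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        record = {"actor": actor, "action": action, "detail": detail, "prev": prev}
        record["hash"] = self._digest(record)
        self.entries.append(record)
        return record["hash"]

    def verify(self) -> bool:
        """Recompute every hash and check each link to its predecessor."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("actor", "action", "detail", "prev")}
            if entry["prev"] != prev or entry["hash"] != self._digest(body):
                return False
            prev = entry["hash"]
        return True
```

Usage: `log.append("model", "recommend", "reduce pacing")` followed by `log.append("human:op1", "override", "rejected")` records both the AI suggestion and the human decision; `log.verify()` returns `False` if either record is later altered.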

4. Mathematical Model of Hybrid Co-Processing

Let:

H(t) = Human cognitive state vector

A(t) = AI model state vector

N(t) = Neural signal input

P(t) = Physiological signal input

E(t) = Environmental data input

Hybrid system state:

S(t) = F(H(t), A(t), N(t), P(t), E(t))

Where F is a bounded adaptive coupling function.

Update rules:

dH/dt = f₁(H, A, N, P)

dA/dt = f₂(A, H, E)

Constraints:

||A_autonomy|| ≤ γ

γ = alignment constraint parameter

No autonomous objective rewriting permitted.
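The update rules and the constraint can be simulated with forward-Euler integration. The source does not specify f₁ or f₂, so this sketch assumes linear relaxation dynamics with hypothetical coefficients, holds N, P, and E constant, and adopts a hypothetical reading of ||A_autonomy|| ≤ γ: the AI state may not drift more than γ (Euclidean norm) from the human state, enforced by projection after every step. It covers only the update rules and the bound, not the coupling function F.

```python
import math
from typing import List, Tuple

Vec = List[float]


def norm(v: Vec) -> float:
    return math.sqrt(sum(x * x for x in v))


def project_deviation(a: Vec, h: Vec, gamma: float) -> Vec:
    """Hypothetical reading of ||A_autonomy|| <= gamma: A may deviate from H by at most gamma."""
    dev = [a_i - h_i for a_i, h_i in zip(a, h)]
    n = norm(dev)
    if n <= gamma:
        return list(a)
    scale = gamma / n
    return [h_i + d * scale for h_i, d in zip(h, dev)]


def simulate(h: Vec, a: Vec, n_sig: Vec, p_sig: Vec, e_sig: Vec,
             gamma: float, dt: float = 0.01, steps: int = 500) -> Tuple[Vec, Vec]:
    """Forward-Euler integration of dH/dt = f1(H, A, N, P) and dA/dt = f2(A, H, E)."""
    for _ in range(steps):
        # f1 (assumed linear): H relaxes toward a blend of A, N and P.
        dh = [-h_i + 0.5 * a_i + 0.3 * n_i + 0.2 * p_i
              for h_i, a_i, n_i, p_i in zip(h, a, n_sig, p_sig)]
        # f2 (assumed linear): A relaxes toward a blend of H and E.
        da = [-a_i + 0.4 * h_i + 0.6 * e_i for a_i, h_i, e_i in zip(a, h, e_sig)]
        h = [h_i + dt * d for h_i, d in zip(h, dh)]
        a = [a_i + dt * d for a_i, d in zip(a, da)]
        # Enforce the alignment bound after every step by projection.
        a = project_deviation(a, h, gamma)
    return h, a
```

With a strong environmental input (for example E = 5 in every component), the unconstrained AI state would pull well away from the human state; the projection keeps the deviation pinned at γ instead.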

5. Clinical Applications

5.1 Depression

- Neurofeedback-assisted cognitive restructuring

- Real-time emotional valence detection

- Adaptive CBT reinforcement

5.2 Addiction

- Predictive relapse modeling

- Impulse detection via physiological signals

- AI-triggered behavioral intervention prompts

5.3 Trauma

- Gradual exposure simulation modeling

- Autonomic regulation feedback loops

6. Enterprise Applications

6.1 Decision Intelligence

- Multi-scenario modeling

- Risk-weighted strategic forecasting

- Cognitive load-aware executive dashboards

6.2 Education

- Personalized knowledge scaffolding

- Adaptive difficulty modulation

- Neuroadaptive pacing

6.3 Defense

- Cognitive resilience monitoring

- Real-time scenario simulation

- AI-assisted tactical decision modeling

7. Ethical and Governance Framework

The IAHC model explicitly rejects:

- Identity fusion narratives

- Consciousness upload claims

- Autonomous self-directed AGI systems

- Non-consensual neural integration

- Irreversible cognitive modification

Governance Model:

- Multi-layer human oversight

- Transparent algorithmic logging

- Regulatory compliance (GDPR, HIPAA equivalents)

- Independent ethics board review

- International treaty-level neuro-rights framework

8. Risk Analysis

Primary Risks:

- Cognitive overdependence

- Neural data exploitation

- Socioeconomic inequality

- Militarization asymmetry

- Psychological destabilization

Mitigation:

- Gradual deployment

- Mandatory reversibility

- Data sovereignty laws

- Public-sector regulation

9. Scalability Roadmap

Phase I – Software augmentation

Phase II – Wearable integration

Phase III – Neuroadaptive feedback systems

Phase IV – Federated collective intelligence

Phase V – Regulated clinical neural interfaces

10. Long-Term Research Questions

- What is the measurable upper bound of hybrid cognitive amplification?

- Can predictive coding efficiency be externally scaffolded?

- What neural signatures correlate with optimal hybrid coupling?

- What alignment structures prevent divergence under recursive improvement?

11. Conclusion

The IAHC Framework defines a structured, safety-aligned, institution-ready model for human–AI hybridization.

It is not a speculative singularity narrative.

It is an engineering roadmap for:

- Neuroadaptive augmentation

- Distributed intelligence networks

- Alignment-preserving hybrid cognition

- Measurable and reversible integration

The future of intelligence development may not lie in autonomous AI dominance, but in structured, governed, and safety-aligned co-processing architectures.

DEFENSE WHITE PAPER

Neuroadaptive Human–AI Hybridization Architecture for Cognitive Superiority

A Safety-Aligned Framework for Operational Decision Dominance

Prepared For

Defense Research & Strategic Innovation Agencies

Prepared By

Maitreya Strategic Systems Division

Cognitive Systems & Adaptive Intelligence Directorate

Classification Level

Unclassified / Conceptual Architecture (No Controlled Technologies Disclosed)

Executive Summary

This white paper presents a defense-adapted version of the Integrated Artificial Hyper-Collective Hybridization (IAHC) Framework, focused on enhancing operational effectiveness through neuroadaptive human–AI co-processing systems.

The objective is neither autonomous weapons control nor self-directing AI command systems. Rather, the proposed architecture enhances human decision superiority through:

- Real-time AI cognitive augmentation

- Neuroadaptive workload management

- Physiological-state-aware system modulation

- Distributed collective intelligence integration

- Alignment-preserving command authority

The framework ensures that all lethal and strategic authority remains human-controlled, while AI systems function as high-speed analytical amplifiers.

1. Strategic Rationale

Modern conflict domains are characterized by:

- Multi-domain complexity (cyber, space, land, air, maritime)

- Information saturation

- Cognitive overload

- Accelerated OODA loops

- AI-enabled adversaries

The limiting factor is no longer compute power—it is human cognitive bandwidth.

The IAHC defense architecture addresses the gap between computational capacity and human operational cognition.

2. Operational Objectives

- Enhance decision speed without degrading judgment quality

- Reduce cognitive fatigue in high-stress environments

- Improve signal-to-noise discrimination

- Increase resilience against information warfare

- Maintain full human command authority

- Prevent autonomous escalation scenarios

3. System Architecture (Defense Adaptation)

3.1 Layer I – Tactical Digital External Neocortex (T-DEN)

Definition:

Secure AI co-processing layer embedded within classified networks.

Capabilities:

- Multi-domain data fusion

- Threat modeling

- Real-time battlefield simulation

- Adversary pattern recognition

- Strategic scenario forecasting

Technical Features:

- Secure enclave deployment

- Encrypted data pipelines

- Optional air-gapped configurations

- Explainable AI traceability

3.2 Layer II – Neuroadaptive Operator Interface (NOI)

Definition:

Non-invasive neuro-monitoring system integrated into helmets or operator stations.

Monitored Variables:

- Attention bandwidth

- Cognitive load index

- Stress markers

- Fatigue detection

- Decision hesitation metrics

Function:

AI dynamically adjusts:

- Information density

- Interface complexity

- Alert prioritization

- Recommendation pacing
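Load-dependent alert prioritization can be sketched as follows, assuming the cognitive load index is normalized to [0, 1] and each alert carries a severity score. As load rises, the number of displayed alerts shrinks toward a floor, and the most severe alerts are always the ones kept. The `Alert` type, the scales, and the budget rule are hypothetical.

```python
import heapq
from dataclasses import dataclass
from typing import List


@dataclass
class Alert:
    source: str
    severity: float  # assumed scale: 0..1, higher is more urgent


def visible_alerts(alerts: List[Alert], load_index: float,
                   max_items: int = 8, min_items: int = 2) -> List[Alert]:
    """Show fewer alerts as the load index rises; always keep the most severe ones."""
    load = min(max(load_index, 0.0), 1.0)
    budget = max(min_items, round(max_items * (1.0 - load)))
    return heapq.nlargest(budget, alerts, key=lambda a: a.severity)
```

Usage: with ten queued alerts, a load index of 0.0 shows the top eight, 0.5 shows the top four, and 0.9 shows only the two most severe. Suppressed alerts remain queued rather than being discarded.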

3.3 Layer III – Physiological Resilience Monitoring (PRM)

Wearable integration measuring:

- Heart rate variability

- Cortisol proxy markers

- Micro-tremor detection

- Sleep debt estimation

Applications:

- Pilot fatigue detection

- Special operations cognitive stabilization

- Long-duration command optimization

3.4 Layer IV – Distributed Operational Intelligence Grid (DOIG)

Federated intelligence model enabling:

- Cross-unit learning without data exposure

- Pattern detection across theaters

- Rapid anomaly propagation alerts

Security Controls:

- Zero-trust architecture

- Differential privacy

- Compartmentalized data domains

3.5 Layer V – Command Integrity & Alignment Layer (CIAL)

Critical safeguards:

- Human final authority required for lethal decisions

- No autonomous target engagement

- AI advisory role only

- Escalation control limits

- Override redundancy
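The advisory-only principle can be expressed as an authorization gate, sketched here under stated assumptions: AI outputs are classified by action type, informational outputs may be displayed freely, and anything else executes only with human approval, with AI self-approval ignored. The two-distinct-human requirement for lethal-class items is an illustrative reading of "override redundancy", not stated in the source. All type and function names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class ActionClass(Enum):
    INFORMATIONAL = "informational"
    OPERATIONAL = "operational"
    LETHAL = "lethal"


@dataclass(frozen=True)
class Recommendation:
    action_class: ActionClass
    summary: str


@dataclass(frozen=True)
class Approval:
    approver_id: str
    is_human: bool


def may_execute(rec: Recommendation, approvals: List[Approval]) -> bool:
    """AI output is advisory: only informational items pass without human approval."""
    # Count distinct human approvers; approvals from non-human agents never count.
    human_approvers = {a.approver_id for a in approvals if a.is_human}
    if rec.action_class is ActionClass.INFORMATIONAL:
        return True
    if rec.action_class is ActionClass.LETHAL:
        # Hypothetical two-person rule as one reading of "override redundancy".
        return len(human_approvers) >= 2
    return len(human_approvers) >= 1
```

Because the gate only returns a permission decision, execution remains with the human-operated system; the AI component has no path that bypasses it.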

4. Mathematical Model of Tactical Hybrid Co-Processing

Let:

H(t) = Operator cognitive state

A(t) = Tactical AI state

N(t) = Neural signal input

P(t) = Physiological signal input

B(t) = Battlefield data stream

Hybrid operational state:

S(t) = F(H, A, N, P, B)

Constraints:

- Lethal Action Constraint: A_lethal_decision = 0

- Authority Constraint: Command_final = Human

- Autonomy Bound: ||A_self_directed|| ≤ α, where α is held strictly bounded

Decision latency:

ΔT_decision = f(C_load, AI_support, Signal_quality)

Goal:

Minimize ΔT_decision while preserving judgment integrity, i.e. subject to J ≥ β, where β is the minimum acceptable threshold.
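The constrained objective can be illustrated with a grid search over the level of AI support. The source leaves f and J unspecified, so both functional forms below are hypothetical: latency grows with cognitive load and falls with AI support scaled by signal quality, while judgment integrity degrades quadratically with heavier reliance on AI (a stand-in for the overtrust risk). The search returns the feasible support level with the lowest latency, or `None` if no level satisfies J ≥ β.

```python
from typing import Optional, Tuple


def decision_latency(c_load: float, ai_support: float, signal_quality: float,
                     base_s: float = 10.0) -> float:
    """Hypothetical ΔT_decision: rises with cognitive load, falls with quality-weighted AI support."""
    return base_s * (1.0 + c_load) / (1.0 + 2.0 * ai_support * signal_quality)


def judgment_integrity(ai_support: float, j0: float = 0.95, overtrust: float = 0.5) -> float:
    """Hypothetical J: degrades quadratically as reliance on AI support grows."""
    return j0 - overtrust * ai_support ** 2


def best_support(c_load: float, signal_quality: float, beta: float,
                 steps: int = 10) -> Optional[Tuple[float, float]]:
    """Grid search over AI_support in [0, 1]: minimize ΔT_decision subject to J >= beta."""
    best = None
    for i in range(steps + 1):
        s = i / steps
        if judgment_integrity(s) < beta:
            continue  # infeasible: violates the judgment-integrity constraint
        latency = decision_latency(c_load, s, signal_quality)
        if best is None or latency < best[1]:
            best = (s, latency)
    return best
```

Under these assumed forms, latency always decreases with support, so the optimum sits at the largest support level the integrity constraint allows; with β = 0.8 that is 0.5 on a 0.1 grid.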

5. Defense Use Cases

5.1 Tactical Command Centers

- Cognitive overload mitigation

- Alert triage prioritization

- Scenario branching visualization

5.2 Cyber Defense

- Rapid anomaly clustering

- Human-validated AI mitigation strategies

- Psychological resilience monitoring for analysts

5.3 Air & Space Operations

- Pilot workload adaptation

- Real-time stress detection

- Strategic maneuver forecasting

5.4 Special Operations

- Physiological fatigue stabilization

- Cognitive threat modeling

- Enhanced situational awareness filtering

6. Strategic Advantages

- OODA loop compression

- Reduced decision paralysis

- Enhanced resilience under information warfare

- Increased survivability via fatigue detection

- Improved adversary modeling precision

7. Security & Counterintelligence Protections

- Neural data encrypted at source

- No cloud dependency for sensitive operations

- On-device inference capability

- Hardware-level tamper detection

- Strict compartmentalization

8. Ethical & Legal Safeguards

The system:

- Prohibits autonomous lethal control

- Complies with international humanitarian law

- Requires human intent validation

- Maintains transparent audit logs

- Supports oversight inspection

9. Risk Assessment

Primary Risks

- Cognitive dependency

- AI advisory overtrust

- Adversarial signal injection

- Neural data interception

- Escalation misinterpretation

Mitigation

- Mandatory operator training

- Multi-layer validation

- Red-team adversarial testing

- Hardware-level encryption

- AI explainability requirements

10. Deployment Roadmap

Phase I – Software-only decision support

Phase II – Physiological integration

Phase III – Non-invasive neuroadaptive feedback

Phase IV – Secure distributed intelligence federation

Phase V – Doctrine integration

11. Comparative Positioning

Traditional AI Warfare Model:

- Autonomous systems

- Reduced human oversight

- Escalation risk

IAHC Defense Model:

- Human-dominant authority

- AI as amplifier, not replacement

- Controlled cognitive acceleration

- Escalation containment

12. Strategic Doctrine Implications

The future of defense advantage will depend on:

- Cognitive superiority

- Adaptive co-processing

- Resilience under stress

- Human-machine trust calibration

Not on fully autonomous weapon systems.

13. Conclusion

The defense-adapted IAHC framework establishes a structured pathway toward:

- Cognitive advantage without autonomy risk

- Human-centered AI integration

- Operational acceleration with ethical containment

- Resilience in multi-domain conflict environments

It is a force multiplier for decision intelligence—not a replacement for command authority.