A Hypothetical Framework for Neuroplastic Optimization through Meditative States and Artificial Intelligence

Author: Roberto Guillermo Gomes (conceptual framework)

Field: Contemplative Neuroscience, Neurotechnology, Human–AI Hybrid Cognition

Abstract

Recent advances in contemplative neuroscience, neurotechnology, and artificial intelligence suggest the possibility of an integrated research framework aimed at enhancing human cognitive function through directed neuroplasticity. This paper proposes a falsifiable theoretical model in which structured contemplative practices (referred to here as neuroyoga), combined with neurophysiological monitoring and AI-driven adaptive feedback, may create a closed-loop optimization system capable of accelerating cognitive training and neural network reorganization.

The model hypothesizes that meditation-induced neural states can serve as stable training signals for hybrid human–AI systems. By integrating real-time neurophysiological data with machine learning architectures, it may be possible to construct a hybrid cognitive network in which human neural dynamics and artificial intelligence systems co-adapt through iterative feedback cycles.

The paper outlines the conceptual architecture of such a system and proposes experimental protocols capable of testing the hypothesis within the framework of contemporary neuroscience.

1. Introduction

Human cognition is increasingly studied as a dynamic network system characterized by plastic neural architectures capable of adaptation through training, experience, and environmental interaction. Over the past two decades, contemplative neuroscience has produced a growing body of evidence demonstrating that long-term meditation practices can induce measurable changes in brain structure and function.

Simultaneously, neurotechnology has enabled real-time monitoring and modulation of neural activity, while artificial intelligence systems have achieved unprecedented capability in pattern recognition and adaptive optimization.

These developments raise an important research question:

Can contemplative states serve as structured training environments for hybrid human–AI cognitive systems capable of accelerating directed neuroplasticity?

This paper presents a conceptual framework addressing this question.

2. Conceptual Foundations

2.1 Contemplative Cognitive Training (Neuroyoga)

The term neuroyoga is used here as a neutral scientific descriptor referring to structured contemplative training techniques designed to influence cognitive and emotional regulation.

These techniques include:

- sustained attention meditation

- open monitoring meditation

- controlled breathing protocols

- interoceptive awareness training

- cognitive-emotional regulation exercises

Studies indicate that such practices may affect several neural networks:

- Default Mode Network (DMN)

- Salience Network

- Central Executive Network

- Insular interoceptive networks

- Thalamocortical circuits

Observed effects include:

- increased functional connectivity

- improved attentional control

- enhanced emotional regulation

- changes in cortical thickness in specific regions.

2.2 Contemplative Neuroscience

Contemplative neuroscience attempts to correlate subjective meditative states with measurable neurophysiological signatures.

Primary methods include:

- functional magnetic resonance imaging (fMRI)

- electroencephalography (EEG)

- magnetoencephalography (MEG)

- functional near-infrared spectroscopy (fNIRS)

Commonly reported neural signatures include:

- gamma synchronization

- alpha coherence

- reduced activity in the Default Mode Network

- increased prefrontal regulation of limbic structures.

2.3 Neurotechnology

Modern neurotechnologies allow both observation and modulation of neural states.

Relevant technologies include:

Measurement systems:

- EEG

- fNIRS

- invasive and non-invasive brain–computer interfaces (BCI)

Modulation technologies:

- transcranial magnetic stimulation (TMS)

- transcranial direct current stimulation (tDCS)

- neurofeedback systems.

These tools allow closed-loop interaction between neural activity and computational systems.

2.4 Artificial Intelligence

Artificial intelligence systems provide the capacity to analyze large-scale neurophysiological datasets and optimize training protocols.

Relevant techniques include:

- deep learning

- reinforcement learning

- adaptive signal processing

- neural pattern recognition.

Within this framework, AI functions as a cognitive optimization engine, detecting patterns in neurophysiological data and adjusting training protocols in response.

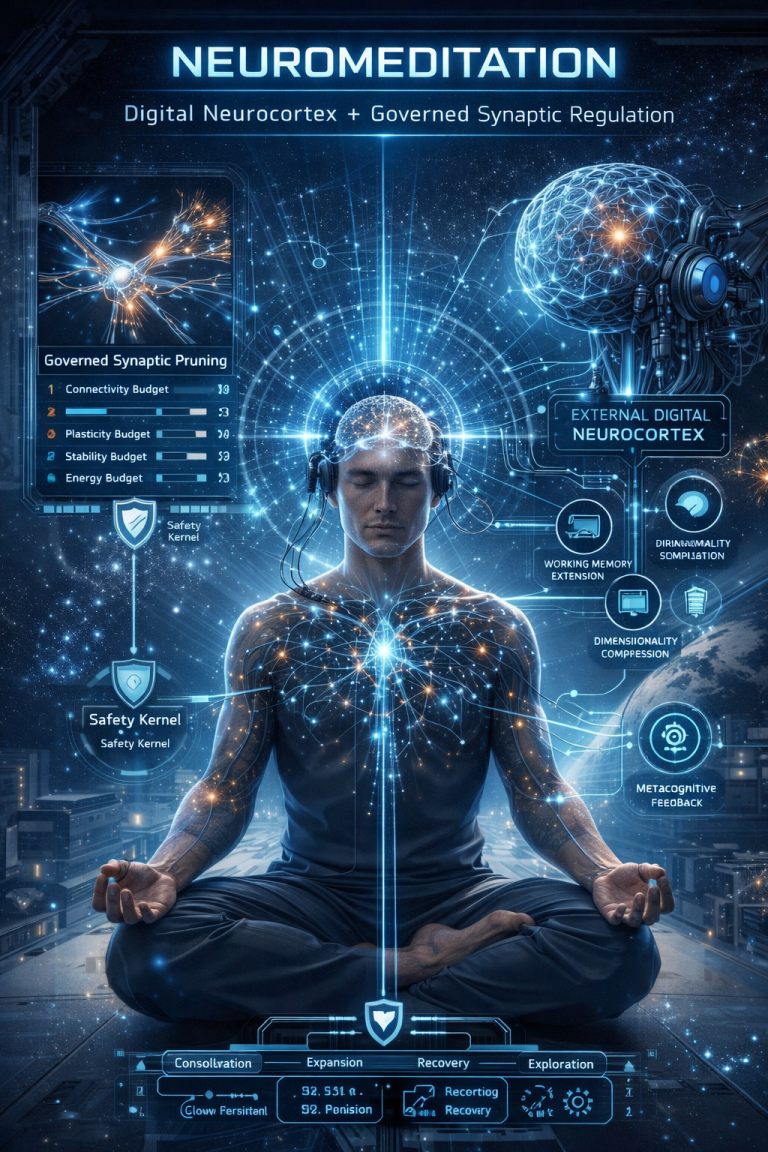

3. Closed-Loop Neuroplasticity Model

The proposed system operates as an adaptive feedback loop.

Stage 1 – Contemplative practice

The human participant enters a trained cognitive state.

↓

Stage 2 – Neural measurement

Neurotechnology records real-time neural signals.

↓

Stage 3 – AI analysis

Machine learning models detect patterns associated with optimal cognitive states.

↓

Stage 4 – Adaptive feedback

The system modifies training stimuli or feedback signals.

↓

Stage 5 – Iterative optimization

The participant adjusts cognitive states in response to feedback.

↓

Cycle repeats continuously.
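The five stages above can be sketched as a plain control loop. This is a minimal Python sketch under stated assumptions: the scalar "attention" feature, the 0.6 threshold, the cue names, and the `ToyParticipant` class are all hypothetical placeholders, not a model of real neural data. Stage 1 (the practice itself) is carried out by the participant and is not represented in code, and the loop runs for a fixed number of cycles for illustration.

```python
import random
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class LoopRecord:
    cycle: int
    measured: float
    state_label: str
    feedback: str


def run_closed_loop(
    n_cycles: int,
    measure: Callable[[], float],           # Stage 2: one scalar neural feature per cycle
    classify: Callable[[float], str],       # Stage 3: feature -> state label
    choose_feedback: Callable[[str], str],  # Stage 4: state label -> feedback cue
    deliver: Callable[[str], None],         # Stage 5: present cue; participant adjusts
) -> List[LoopRecord]:
    """Run stages 2-5 repeatedly; Stage 1 (the practice) happens outside the code."""
    history = []
    for cycle in range(n_cycles):
        value = measure()
        label = classify(value)
        cue = choose_feedback(label)
        deliver(cue)
        history.append(LoopRecord(cycle, value, label, cue))
    return history


class ToyParticipant:
    """Hypothetical stand-in for a human: a latent 'attention' level read with noise."""

    def __init__(self, seed: int = 0):
        self.attention = 0.4
        self.rng = random.Random(seed)

    def measure(self) -> float:
        return self.attention + self.rng.gauss(0.0, 0.05)

    def respond(self, cue: str) -> None:
        # Toy assumption: each 'refocus' cue raises attention slightly, capped at 1.0
        if cue == "refocus":
            self.attention = min(1.0, self.attention + 0.05)


participant = ToyParticipant(seed=1)
history = run_closed_loop(
    20,
    participant.measure,
    lambda v: "focused" if v >= 0.6 else "drifting",       # hypothetical threshold
    lambda s: "maintain" if s == "focused" else "refocus",  # hypothetical cues
    participant.respond,
)
```

Passing each stage in as a function keeps the loop independent of any particular sensor, classifier, or feedback device, so one stage can be replaced without touching the others.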

4. Directed Neuroplasticity

The theoretical outcome of this system is directed neuroplasticity, defined as:

the systematic modulation of neural network organization through structured cognitive training combined with real-time neurophysiological feedback.

Potential neural mechanisms include:

- long-term potentiation (LTP)

- long-term depression (LTD)

- dendritic branching

- synaptic strengthening

- adaptive myelination.

5. Experimental Hypothesis

The core hypothesis of this model can be expressed as follows:

Hypothesis

The integration of contemplative cognitive training, neurophysiological monitoring, and AI-driven feedback will produce measurable improvements in neural network efficiency compared with contemplative training alone.

6. Testable Predictions

A scientific model must generate falsifiable predictions.

Possible measurable outcomes include the following, each assessed relative to a contemplative-training-only control group:

Prediction 1

Subjects trained with AI-optimized neurofeedback will show greater improvement in attentional performance.

Prediction 2

Functional connectivity between prefrontal cortex and limbic structures will increase.

Prediction 3

Sustained gamma-band synchronization will increase.

Prediction 4

Default Mode Network activity will decrease during resting states.

7. Experimental Design

A controlled experiment could involve three groups.

Group A

traditional meditation training.

Group B

meditation combined with neurofeedback.

Group C

meditation combined with neurofeedback and AI optimization.

Duration:

6–12 months.

Measured variables:

- neural connectivity

- cognitive performance

- emotional regulation

- stress biomarkers.
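Allocation to the three groups could use block randomization, which keeps group sizes balanced throughout recruitment. The sketch below is illustrative only: the participant IDs, the block size (one slot per group), and the seed are assumptions, and a real trial would also need allocation concealment.

```python
import random
from typing import Dict, List


def block_randomize(participant_ids: List[str], groups: List[str], seed: int) -> Dict[str, str]:
    """Assign participants in shuffled blocks containing one slot per group."""
    rng = random.Random(seed)
    assignment: Dict[str, str] = {}
    block: List[str] = []
    for pid in participant_ids:
        if not block:                  # start a new balanced block
            block = list(groups)
            rng.shuffle(block)
        assignment[pid] = block.pop()
    return assignment


ids = [f"P{i:02d}" for i in range(12)]            # hypothetical participant IDs
allocation = block_randomize(ids, ["A", "B", "C"], seed=42)
```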

8. Architecture of a Hybrid Human–AI Cognitive Network

The most speculative but potentially transformative extension of this research is the development of a hybrid human–AI cognitive network trained through meditative states.

Conceptual Principle

Meditative states may produce comparatively stable, low-noise neural patterns.

These patterns may function as training signals for artificial neural networks.

9. Hybrid Architecture

The architecture consists of four layers.

Layer 1 – Human Neural Layer

The biological brain generates neural signals during meditative training.

Signals include:

- EEG wave patterns

- phase synchronization

- cortical coherence patterns.
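Phase synchronization between two recording sites is often summarized with the phase-locking value (PLV), the magnitude of the mean phase-difference vector. The sketch below assumes both inputs are already band-pass filtered to the band of interest (for example, gamma) and uses synthetic 40 Hz and 43 Hz sinusoids instead of EEG. `scipy.signal.hilbert` would be the usual library route to the analytic signal; it is reimplemented here with NumPy's FFT to keep the example self-contained.

```python
import numpy as np


def analytic_signal(x: np.ndarray) -> np.ndarray:
    """FFT-based analytic signal (the construction behind a Hilbert transform)."""
    n = x.size
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(np.fft.fft(x) * h)


def phase_locking_value(x, y) -> float:
    """PLV = |mean(exp(i*(phi_x - phi_y)))|: 1 = constant phase lag, near 0 = no locking."""
    phase_x = np.angle(analytic_signal(np.asarray(x, dtype=float)))
    phase_y = np.angle(analytic_signal(np.asarray(y, dtype=float)))
    return float(np.abs(np.mean(np.exp(1j * (phase_x - phase_y)))))


# Synthetic, already band-limited signals (hypothetical stand-ins for two channels)
fs = 1000
t = np.arange(fs) / fs
locked = phase_locking_value(np.sin(2 * np.pi * 40 * t), np.sin(2 * np.pi * 40 * t + 0.5))
unlocked = phase_locking_value(np.sin(2 * np.pi * 40 * t), np.sin(2 * np.pi * 43 * t))
```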

Layer 2 – Neurointerface Layer

A brain–computer interface captures neural signals and converts them into digital representations.

Functions include:

- signal filtering

- feature extraction

- neural state classification.
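The filtering, feature-extraction, and classification functions of this layer can be illustrated with spectral band power. In this sketch the band edges, the 2 s epoch at 256 Hz, the alpha/beta ratio rule, and its threshold are assumptions chosen for illustration; a practical system would add artifact rejection and a trained classifier.

```python
import numpy as np

# Hypothetical band definitions in Hz (lower edge inclusive, upper edge exclusive)
BANDS = {"theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 30.0), "gamma": (30.0, 45.0)}


def band_powers(epoch: np.ndarray, fs: float) -> dict:
    """Mean periodogram power per band for one single-channel epoch."""
    x = epoch - epoch.mean()           # remove DC offset
    x = x * np.hanning(x.size)         # taper to reduce spectral leakage
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    return {name: float(power[(freqs >= lo) & (freqs < hi)].mean())
            for name, (lo, hi) in BANDS.items()}


def classify_state(features: dict, alpha_ratio_threshold: float = 2.0) -> str:
    """Toy rule (assumption): label an epoch by whether alpha power dominates beta."""
    ratio = features["alpha"] / features["beta"]
    return "alpha-dominant" if ratio >= alpha_ratio_threshold else "other"


fs = 256
t = np.arange(2 * fs) / fs
rng = np.random.default_rng(0)
epoch = np.sin(2 * np.pi * 10 * t) + 0.1 * rng.standard_normal(t.size)  # synthetic epoch
features = band_powers(epoch, fs)
label = classify_state(features)
```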

Layer 3 – AI Cognitive Layer

Artificial neural networks analyze the incoming signals.

Possible models:

- transformer networks

- reinforcement learning systems

- adaptive neural networks.

The AI learns to recognize optimal cognitive states.

Layer 4 – Feedback Layer

The system returns optimized feedback to the human participant via:

- neurofeedback signals

- visual or auditory cues

- adaptive stimulation.

10. Co-Adaptive Learning

Over time, the human brain and AI system co-adapt.

The human brain learns to stabilize optimal cognitive states.

The AI learns to model and predict those states.

This creates a hybrid cognitive loop.
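One concrete form of co-adaptation, common in neurofeedback practice, is an adaptive reward threshold: the system raises its criterion as the participant succeeds more often, so feedback stays challenging. The sketch below is a hypothetical stochastic-approximation rule; the target reward rate, the step size, and the uniform "score" distributions standing in for a participant before and after improvement are all assumptions.

```python
import random


def adaptive_threshold_session(scores, target_reward_rate=0.6, step=0.01, start=0.5):
    """Reward when score >= threshold; nudge the threshold so reward rate tracks the target.

    Rewarded more often than targeted -> threshold rises (harder);
    rewarded less often -> threshold falls (easier)."""
    threshold = start
    rewards = []
    for s in scores:
        rewarded = s >= threshold
        rewards.append(rewarded)
        threshold += step * ((1.0 if rewarded else 0.0) - target_reward_rate)
    return threshold, rewards


rng = random.Random(7)
phase1 = [rng.uniform(0.0, 1.0) for _ in range(3000)]   # hypothetical early scores
phase2 = [rng.uniform(0.5, 1.5) for _ in range(3000)]   # hypothetical scores after improvement
th1, _ = adaptive_threshold_session(phase1)
th2, rewards2 = adaptive_threshold_session(phase2, start=th1)
```

Because the threshold moves by step × (reward − target) on every trial, the long-run reward rate is pulled toward the target, and a sustained improvement in the participant's scores shows up as a rising threshold.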

11. Long-Term Possibilities

If validated experimentally, such systems could enable:

- accelerated cognitive training

- improved mental health therapies

- enhanced human–machine interaction

- adaptive learning environments.

12. Limitations

The proposed model remains theoretical.

Key challenges include:

- variability of neural signals

- ethical concerns regarding neurotechnology

- limited resolution of non-invasive brain interfaces

- reproducibility across individuals.

13. Conclusion

The convergence of contemplative neuroscience, neurotechnology, and artificial intelligence opens the possibility of hybrid cognitive systems in which human neural states and computational architectures interact through closed-loop feedback.

Although still hypothetical, such systems could provide a novel approach to directed neuroplasticity and cognitive optimization.

Future research must focus on controlled experiments capable of validating or falsifying the model.

Hybrid General Intelligence (HGI): Human–AI Co-Cognitive Systems Trained through Contemplative Neural States

Author: Roberto Guillermo Gomes (conceptual proposal)

Field: Cognitive Neuroscience, Neurotechnology, Artificial Intelligence, Evolutionary Systems Science

Abstract

Human evolution has historically been driven by adaptation to environmental pressures. However, the emergence of advanced neurotechnology and artificial intelligence introduces a new type of evolutionary driver: technologically mediated cognitive feedback systems. This paper proposes a theoretical framework for Hybrid General Intelligence (HGI)—a co-cognitive architecture in which human neural processes and artificial intelligence systems interact through closed-loop neurotechnological interfaces, with contemplative neural states serving as training signals.

The hypothesis explored here is that such systems could gradually alter patterns of neural plasticity, leading to long-term changes in cognitive organization and potentially influencing the evolutionary trajectory of the human brain. The model does not assume immediate biological mutation but rather proposes progressive neurocognitive adaptation mediated by technology, which may accumulate across generations.

A falsifiable hypothesis is presented, along with possible experimental protocols and speculative long-term evolutionary scenarios extending from the 22nd to the 25th centuries.

1. Introduction

Biological evolution has traditionally occurred through genetic variation and natural selection, driven by environmental constraints such as climate, food availability, and ecological competition.

However, during the last century a new factor has emerged:

technological mediation of human cognition.

Three technological domains are converging:

- neurotechnology

- artificial intelligence

- brain–computer interfaces.

Simultaneously, contemplative neuroscience has shown that meditation and other cognitive training practices can induce measurable changes in brain function and structure.

These developments raise an unprecedented question:

Can technological feedback systems influence the long-term trajectory of human cognitive evolution?

2. Hybrid General Intelligence (HGI)

Definition

Hybrid General Intelligence (HGI) refers to a cognitive system composed of:

- a biological brain

- artificial intelligence systems

- neurotechnological interfaces

- continuous bidirectional information exchange.

Unlike conventional AI systems, HGI is not a standalone machine intelligence.

Instead, it is a distributed cognitive system formed by the coupling of human neural processes and machine learning architectures.

3. Role of Contemplative Neural States

Meditative states may provide an unusually stable neural environment for hybrid training systems.

Characteristics of advanced contemplative states include:

- reduced neural noise

- increased coherence across cortical networks

- enhanced attentional stability

- predictable oscillatory patterns.

Such states could serve as training signals for AI models attempting to learn representations of high-efficiency cognitive configurations.

4. Evolutionary Context

Evolution has historically depended on environmental pressure.

Examples include:

- bipedal locomotion, possibly linked to savanna environments

- expansion of neocortex linked to social complexity

- language evolution driven by group communication.

Today, the environment influencing human cognition is increasingly technological rather than ecological.

This creates a novel evolutionary context:

technological selection pressures on cognition.

5. Hypothesis: Technologically Mediated Cognitive Evolution

Core Hypothesis (falsifiable)

Long-term interaction between human neural systems and AI-driven neurotechnological feedback systems will produce measurable changes in neural network organization that differ from those produced by conventional cognitive training alone.

6. Predictions

If the hypothesis is correct, several measurable outcomes should occur.

Prediction 1

Individuals trained within HGI systems will demonstrate increased cross-network integration between:

- prefrontal cortex

- parietal cortex

- limbic system

- insular networks.

Prediction 2

Cognitive training efficiency will increase significantly compared with traditional training systems.

Prediction 3

Long-term participants in hybrid systems will show distinctive neural signatures such as:

- persistent gamma synchronization

- enhanced long-range cortical connectivity.

Prediction 4

Neuroplastic changes may accumulate across generations through cultural and technological transmission.

7. Mechanisms of Possible Cognitive Transformation

The proposed transformation would occur through several mechanisms.

7.1 Accelerated Neuroplasticity

Closed-loop neurofeedback systems may accelerate processes such as:

- synaptic strengthening

- dendritic growth

- cortical remapping.

7.2 Cognitive Offloading to AI

Hybrid systems may allow the human brain to delegate certain tasks to AI components, potentially changing patterns of neural resource allocation.

7.3 Neural Efficiency Optimization

AI systems may identify neural configurations associated with high cognitive efficiency and guide training toward those states.

8. Possible Psycho-Synaptic Pathways of Change

Over long periods, hybrid cognitive systems could influence several neural dimensions.

Attention networks

greater stability of attentional control networks.

Emotional regulation networks

improved regulation through stronger prefrontal–limbic connectivity.

Metacognitive networks

enhanced capacity for self-monitoring and introspection.

Cross-modal integration

improved integration between sensory modalities.

9. Long-Term Brain Architecture Scenarios

(22nd–25th centuries)

These scenarios are speculative but grounded in known neuroplastic mechanisms.

Scenario 1: Enhanced Network Integration

Brains may develop stronger long-range connectivity between cortical regions.

Possible consequences:

- faster integration of complex information

- increased cognitive flexibility.

Scenario 2: Expanded Metacognitive Capacity

Humans may develop greater awareness of their own cognitive processes.

This could enhance:

- decision-making

- emotional regulation

- creative problem solving.

Scenario 3: Human–AI Cognitive Symbiosis

Artificial intelligence may function as an external cognitive cortex, continuously interacting with biological cognition.

The brain may adapt to operate in conjunction with external computational systems.

Scenario 4: New Educational Paradigms

Hybrid cognitive systems could radically transform learning.

Education might involve:

- neural training protocols

- AI-guided cognitive optimization

- immersive neurofeedback environments.

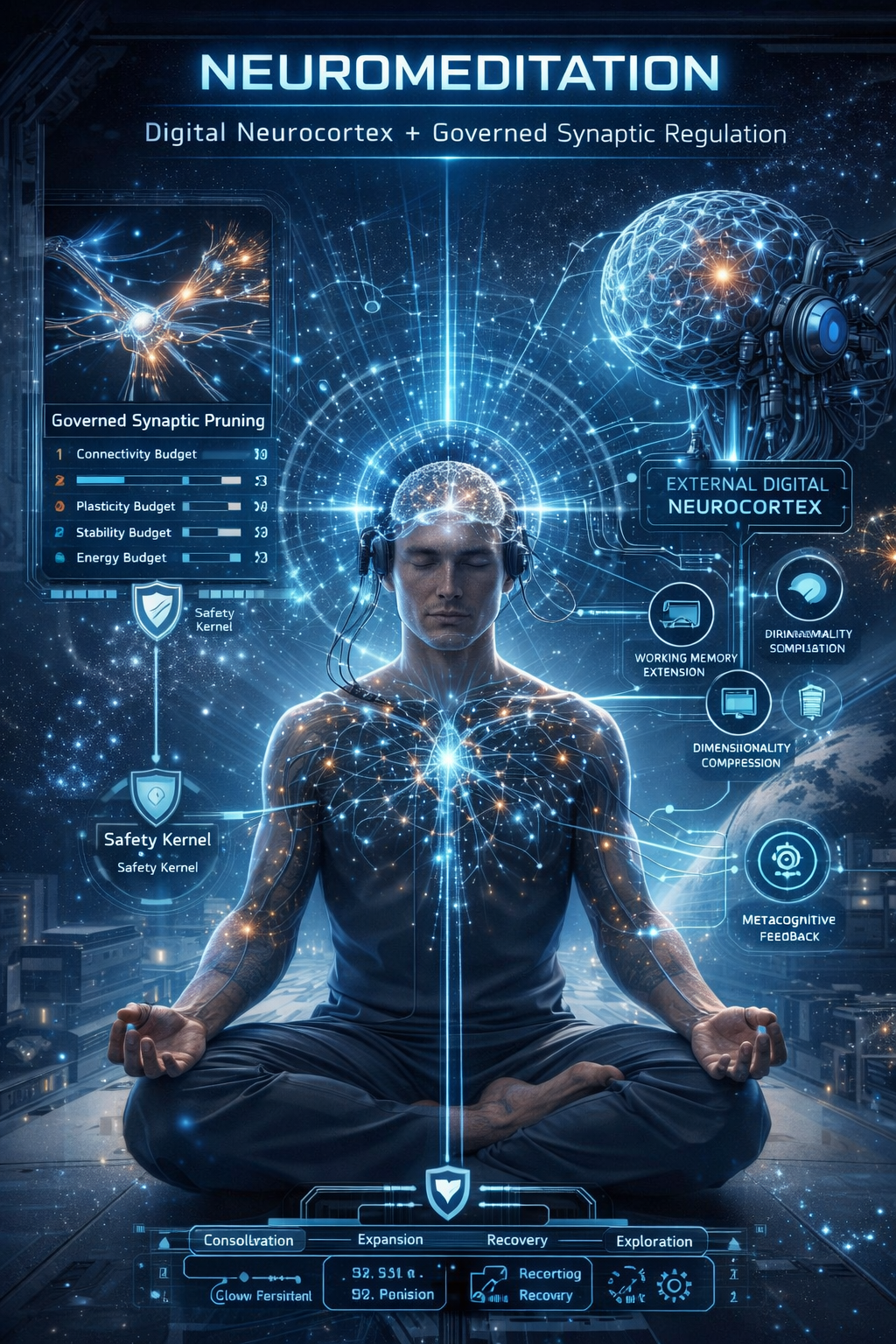

10. Architecture of an HGI System

The proposed architecture consists of five interacting layers.

Layer 1 – Biological Neural System

The human brain generates neural activity patterns during cognitive training and contemplative states.

Layer 2 – Neurointerface System

Brain–computer interfaces capture neural signals and convert them into digital data streams.

Functions include:

- signal processing

- neural pattern recognition

- cognitive state classification.

Layer 3 – AI Cognitive Engine

Artificial intelligence systems analyze neural data using machine learning models such as:

- deep neural networks

- reinforcement learning

- adaptive prediction models.

Layer 4 – Feedback System

The system returns optimized feedback through:

- neurofeedback

- adaptive sensory stimulation

- cognitive guidance signals.

Layer 5 – Co-Adaptive Learning Loop

Both systems adapt simultaneously.

Human cognition learns to stabilize optimal neural states.

AI learns to model and predict those states.

11. Experimental Pathways

The hypothesis could be tested using controlled experiments involving long-term hybrid training environments.

Key measurements would include:

- neural connectivity changes

- cognitive performance metrics

- learning speed

- emotional regulation markers.

12. Ethical Considerations

Hybrid cognitive systems raise important questions regarding:

- cognitive autonomy

- data privacy

- neurotechnological safety

- equitable access to cognitive enhancement technologies.

13. Conclusion

The convergence of contemplative neuroscience, neurotechnology, and artificial intelligence may create the conditions for a new form of cognitive evolution.

Rather than immediate biological mutation, the proposed process is progressive neurocognitive adaptation mediated by technology.

If validated experimentally, Hybrid General Intelligence systems could represent a new stage in the relationship between human cognition and technological environments.

HGI Cognitive Stack

A Layered Architecture for Human–AI Co-Cognitive Systems

This section presents a conceptual framework for the HGI Cognitive Stack: a structured architecture describing how a human brain, neurointerfaces, contemplative training, AI systems, and distributed computational layers could function as a single hybrid intelligence system.

Abstract

The HGI Cognitive Stack is a hypothetical multilayer architecture designed to explain how Hybrid General Intelligence (HGI) could emerge from the integration of biological cognition, neurotechnology, contemplative state training, and artificial intelligence. Rather than treating AI as an external tool, this model frames intelligence as a co-adaptive, distributed process spanning neural tissue, machine learning systems, feedback loops, and external memory infrastructures.

The stack is organized into functional layers, from raw biological signal generation to meta-cognitive orchestration and long-term co-evolution. Its purpose is not to claim that such a system already exists, but to provide a coherent and falsifiable conceptual map for future research.

1. General Definition

HGI Cognitive Stack:

A multi-layered cognitive architecture in which:

- the biological brain generates primary conscious and preconscious processes,

- neurointerfaces translate neural activity into machine-readable streams,

- AI models detect, classify, predict, and optimize cognitive states,

- feedback systems modulate the human subject in real time,

- external memory and distributed computation expand the operational range of cognition,

- and a meta-orchestration layer coordinates the whole system as a co-adaptive intelligence.

In simple terms, the HGI stack is a model of augmented cognition as system architecture.

2. Core Principle

The central principle is:

Intelligence is not confined to the biological brain once stable bidirectional coupling with AI and neurotechnology is established.

Under this model, cognition becomes:

- distributed,

- recursive,

- feedback-driven,

- partially externalized,

- and progressively restructured through repeated co-processing.

3. Stack Overview

The HGI Cognitive Stack can be organized into eight layers:

- Biological Neural Substrate Layer

- Neural Signal Acquisition Layer

- Neural Translation and Encoding Layer

- AI Interpretation Layer

- Cognitive Augmentation Layer

- Feedback and Modulation Layer

- Meta-Cognitive Orchestration Layer

- External Memory and Distributed Intelligence Layer

A ninth layer may be added later as a speculative extension:

- Evolutionary Co-Adaptation Layer

4. Layer 1 – Biological Neural Substrate Layer

This is the foundational layer.

It includes:

- cortical activity

- subcortical regulation

- thalamocortical loops

- autonomic regulation

- sensory integration

- motor planning

- interoception

- memory consolidation systems.

This layer remains the primary generator of:

- subjective experience

- intentionality

- embodied awareness

- biological adaptive behavior.

In the HGI model, the human brain is not replaced. It remains the living core of the system.

Main variables

- oscillatory activity

- functional connectivity

- synaptic plasticity

- arousal states

- attentional stability

- emotional regulation.

5. Layer 2 – Neural Signal Acquisition Layer

This layer captures biological data.

Possible inputs include:

- EEG

- MEG

- fNIRS

- EMG

- eye tracking

- heart-rate variability

- galvanic skin response

- respiration

- posture and gesture data.

This layer transforms embodied cognition into measurable signals.

Function

To create a high-resolution stream of the user’s current cognitive and physiological state.

Problem

Raw neural signals are noisy, variable, and context-dependent.

Therefore, acquisition alone is insufficient; translation is required.

6. Layer 3 – Neural Translation and Encoding Layer

This layer converts noisy biological inputs into structured computational representations.

Main functions

- artifact filtering

- signal denoising

- feature extraction

- temporal segmentation

- multimodal synchronization

- latent-state encoding.

In effect, this layer asks:

What cognitive state is the person in, computationally speaking?

Examples of encoded outputs:

- focused attention state

- mind-wandering state

- stress-loaded state

- contemplative absorption state

- high coherence state

- fatigue state.

This layer is essential because AI cannot act effectively on uninterpreted biological noise.
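Multimodal synchronization and temporal segmentation can be sketched as linear interpolation onto a shared clock followed by fixed-length, overlapping epochs. The sampling rates, the 2 s epoch with a 1 s hop, and the synthetic traces below are assumptions; linear interpolation is one simple choice, and streams with clock drift would need explicit timestamp alignment first.

```python
import numpy as np


def resample_to(t_src: np.ndarray, values: np.ndarray, t_common: np.ndarray) -> np.ndarray:
    """Linearly interpolate one stream onto a shared timeline (t_src must be increasing)."""
    return np.interp(t_common, t_src, values)


def segment(signal: np.ndarray, fs: float, epoch_s: float, step_s: float) -> np.ndarray:
    """Cut a (time, ...) array into fixed-length epochs along the first axis."""
    size, hop = int(round(epoch_s * fs)), int(round(step_s * fs))
    starts = range(0, signal.shape[0] - size + 1, hop)
    return np.stack([signal[s:s + size] for s in starts])


# Hypothetical streams: an EEG-derived trace at 256 Hz and a heart-rate measure every 0.5 s
fs_common = 4.0
t_common = np.arange(0.0, 10.0, 1.0 / fs_common)        # 40 shared time points
eeg_t = np.arange(0.0, 10.0, 1.0 / 256)
eeg_trace = np.sin(2 * np.pi * 0.1 * eeg_t)
hr_t = np.arange(0.0, 10.5, 0.5)
hr = 60.0 + hr_t

aligned = np.column_stack([resample_to(eeg_t, eeg_trace, t_common),
                           resample_to(hr_t, hr, t_common)])
epochs = segment(aligned, fs_common, epoch_s=2.0, step_s=1.0)   # (epochs, samples, streams)
```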

7. Layer 4 – AI Interpretation Layer

This is the first genuinely machine-cognitive layer.

The AI system analyzes encoded signals and infers patterns such as:

- attentional drift

- emotional overload

- deep concentration

- meditative stabilization

- learning readiness

- memory consolidation windows.

Possible AI models

- transformers for temporal pattern modeling

- recurrent models for state continuity

- reinforcement learning agents for adaptive training

- probabilistic models for uncertainty estimation

- graph neural networks for network-state mapping.

Main task

To construct a predictive model of the user’s cognitive dynamics.

The AI should not merely classify present states.

It should also predict:

- where the mind is drifting,

- how stable the current state is,

- and what intervention is most likely to improve performance.
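The requirement to estimate stability and anticipate drift maps naturally onto a probabilistic state model. Below is a minimal two-state hidden Markov model forward filter; the states, transition probabilities, Gaussian emission means, and observation values are hypothetical. The filtered probabilities express how confident the model is about the current state, and the one-step predictions express where the state is heading.

```python
import numpy as np

# Hypothetical two-state model; all numbers are illustrative assumptions.
STATES = ("focused", "drifting")
TRANSITION = np.array([[0.95, 0.05],   # from focused  -> (focused, drifting)
                       [0.10, 0.90]])  # from drifting -> (focused, drifting)
MEANS = np.array([1.0, -1.0])          # mean of a scalar neural feature in each state
SD = 0.8                               # shared emission standard deviation


def likelihood(x: float) -> np.ndarray:
    """Gaussian likelihood under each state (shared SD, so the constant cancels)."""
    return np.exp(-0.5 * ((x - MEANS) / SD) ** 2)


def forward_filter(observations, prior=(0.5, 0.5)):
    """Return filtered P(state_t | x_1..t) and predicted P(state_t+1 | x_1..t)."""
    belief = np.asarray(prior, dtype=float)
    filtered, predicted = [], []
    for x in observations:
        belief = belief * likelihood(x)   # Bayes update with the new observation
        belief = belief / belief.sum()
        filtered.append(belief)
        belief = belief @ TRANSITION      # propagate one step ahead
        predicted.append(belief)
    return np.array(filtered), np.array(predicted)


obs = [1.1, 0.9, 1.2, -0.2, -0.9, -1.1]   # hypothetical feature values
filtered, predicted = forward_filter(obs)
```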

8. Layer 5 – Cognitive Augmentation Layer

This layer uses AI insight to enhance cognition.

It may support:

- attention stabilization

- memory support

- decision scaffolding

- idea association

- pattern discovery

- adaptive learning guidance.

At this point, AI begins to function as an auxiliary cognition module.

Example roles

- external working memory assistant

- semantic expansion engine

- logic consistency checker

- creative association engine

- reflective dialogue partner.

This is where the system transitions from passive monitoring to active co-cognition.

9. Layer 6 – Feedback and Modulation Layer

This layer closes the loop.

It sends signals back to the user through:

- visual neurofeedback

- auditory entrainment cues

- haptic responses

- adaptive breathing pacing

- cognitive prompts

- AR overlays

- non-invasive stimulation systems.

Function

To shape the user’s neural and psychological state in real time.

Examples

If attention drops, the system may:

- reduce external distractions,

- trigger a subtle cue,

- alter task pacing,

- recommend a contemplative reset.

If stress increases, the system may:

- detect physiological dysregulation,

- activate breath pacing,

- reduce cognitive load,

- shift the user toward a recovery mode.
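The examples above amount to a rule-based policy from estimated state to intervention. A minimal sketch follows; the `StateEstimate` fields, the 0 to 1 scales, the thresholds, the action names, and the choice to let the stress branch take precedence over the attention branch are all assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StateEstimate:
    attention: float   # 0..1, hypothetical output of the interpretation layer
    stress: float      # 0..1, hypothetical physiological stress index


def select_interventions(est: StateEstimate,
                         attention_floor: float = 0.4,
                         stress_ceiling: float = 0.7) -> List[str]:
    """Map a state estimate to named interventions (names are placeholders)."""
    if est.stress > stress_ceiling:
        # Assumption: address dysregulation before asking for more focus
        return ["activate_breath_pacing", "reduce_cognitive_load", "enter_recovery_mode"]
    if est.attention < attention_floor:
        return ["reduce_distractions", "subtle_cue", "slow_task_pacing",
                "suggest_contemplative_reset"]
    return []


calm_focused = select_interventions(StateEstimate(attention=0.8, stress=0.2))
drifting = select_interventions(StateEstimate(attention=0.2, stress=0.2))
overloaded = select_interventions(StateEstimate(attention=0.2, stress=0.9))
```

Returning action names rather than executing them leaves the final decision to the orchestration layer and to the user.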

This is the layer where the hybrid loop becomes operational.

10. Layer 7 – Meta-Cognitive Orchestration Layer

This is the supervisory layer.

It coordinates the entire stack.

Main responsibilities

- goal selection

- priority balancing

- conflict resolution

- mode switching

- contextual adaptation

- ethical constraint enforcement.

This layer determines which cognitive mode is appropriate.

For example:

- deep work mode

- contemplative calibration mode

- creative ideation mode

- emotional recovery mode

- decision mode

- learning mode.

Why this matters

Without orchestration, the stack becomes fragmented.

With orchestration, the system can behave as an integrated intelligence rather than as disconnected tools.
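Mode switching with a user override can be sketched as a small supervisory state machine. In this hypothetical version the AI's proposed mode is accepted only after a minimum dwell time (to avoid rapid oscillation between modes), while an explicit user choice takes effect immediately; the mode names follow the list above, and the dwell length is an assumption.

```python
from typing import Optional

# Mode names follow the list in the text; representing them as strings is an assumption.
MODES = {"deep_work", "contemplative_calibration", "creative_ideation",
         "emotional_recovery", "decision", "learning"}


class Orchestrator:
    """Hypothetical supervisory layer with a dwell-time rule and a human override."""

    def __init__(self, initial: str = "contemplative_calibration", min_dwell: int = 3):
        if initial not in MODES:
            raise ValueError(f"unknown mode: {initial}")
        self.mode = initial
        self.min_dwell = min_dwell      # cycles before an AI proposal may switch modes
        self.steps_in_mode = 0

    def step(self, proposed: str, user_override: Optional[str] = None) -> str:
        for mode in (proposed, user_override):
            if mode is not None and mode not in MODES:
                raise ValueError(f"unknown mode: {mode}")
        self.steps_in_mode += 1
        if user_override is not None:
            target = user_override      # the user's choice always wins
        elif self.steps_in_mode >= self.min_dwell:
            target = proposed           # AI proposal accepted after the dwell time
        else:
            target = self.mode          # too soon: stay put to avoid oscillation
        if target != self.mode:
            self.mode, self.steps_in_mode = target, 0
        return self.mode


orch = Orchestrator(min_dwell=3)
trace = [orch.step("deep_work") for _ in range(3)]
trace.append(orch.step("learning"))
trace.append(orch.step("learning", user_override="emotional_recovery"))
```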

11. Layer 8 – External Memory and Distributed Intelligence Layer

This layer expands cognition beyond the skull.

It includes:

- cloud memory systems

- structured knowledge bases

- personal archives

- semantic retrieval systems

- agent networks

- simulation engines.

Function

To provide long-term continuity, retrieval, and computational amplification.

The user no longer depends exclusively on biological recall.

Instead, memory becomes hybrid:

- part neural,

- part machine-indexed,

- part contextually retrieved.

This layer enables:

- persistent project continuity

- large-scale symbolic reasoning

- knowledge graph augmentation

- scenario simulation

- distributed cognitive labor.

In practical terms, this is the basis for a hybrid extended mind.
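Semantic retrieval over personal archives can be illustrated with a deliberately simple lexical stand-in: bag-of-words vectors ranked by cosine similarity. The `ExternalMemory` class and the stored notes are hypothetical; a production system would more likely use learned text embeddings, but the store-then-rank-by-similarity structure is the same.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def bag_of_words(text: str) -> Counter:
    """Lower-cased word counts; a lexical stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class ExternalMemory:
    """Hypothetical machine-indexed store: notes in, ranked notes out."""

    def __init__(self) -> None:
        self.notes: List[str] = []

    def store(self, note: str) -> None:
        self.notes.append(note)

    def retrieve(self, query: str, k: int = 2) -> List[Tuple[float, str]]:
        q = bag_of_words(query)
        ranked = sorted(((cosine(q, bag_of_words(n)), n) for n in self.notes), reverse=True)
        return ranked[:k]


memory = ExternalMemory()
memory.store("breathing protocol notes for stress recovery sessions")
memory.store("project plan for the EEG calibration study")
memory.store("reading list on working memory")
results = memory.retrieve("stress recovery breathing")
```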

12. Layer 9 – Evolutionary Co-Adaptation Layer

This layer is theoretical and long-range.

It refers to the possibility that repeated use of the stack over years or generations could alter:

- cognitive habits

- educational systems

- neural development trajectories

- social intelligence structures

- possibly even long-term biological selection pressures.

A more scientifically careful formulation is:

sustained use of hybrid cognitive systems may generate stable cultural, developmental, and neuroplastic changes that reshape human cognition over historical timescales.

This is not proof of genetic mutation.

It is a hypothesis about persistent neurocognitive restructuring.

13. Role of Contemplative Neural States in the Stack

Contemplative states are important because they may provide:

- lower-noise neural baselines

- enhanced attention stability

- improved self-observation

- reproducible internal state transitions.

In the HGI stack, contemplative training acts as a stabilization protocol.

It helps the user become more capable of:

- noticing internal fluctuations,

- responding to feedback,

- sustaining desired states,

- refining co-adaptation with AI.

Thus, meditation is not an ornament in the model.

It is a state-conditioning technology of the biological substrate.

14. Functional Modes of the HGI Stack

The stack may operate in several modes.

14.1 Calibration Mode

Purpose:

- learn the user’s baseline neural patterns

- establish personalized signal maps.
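A personalized signal map can be as simple as per-feature baseline statistics, used to express later readings as z-scores relative to the same user. The feature names and values below are hypothetical, and a real calibration would use many more samples and check baseline stability across sessions.

```python
import statistics
from typing import Dict, List, Tuple


def calibrate(baseline: Dict[str, List[float]]) -> Dict[str, Tuple[float, float]]:
    """Per-feature (mean, sample SD) from a resting baseline: a minimal 'signal map'."""
    return {name: (statistics.fmean(vals), statistics.stdev(vals))
            for name, vals in baseline.items()}


def normalize(sample: Dict[str, float],
              signal_map: Dict[str, Tuple[float, float]]) -> Dict[str, float]:
    """Express a new reading as z-scores relative to this user's own baseline."""
    return {name: (value - signal_map[name][0]) / signal_map[name][1]
            for name, value in sample.items()}


# Hypothetical baseline readings (arbitrary units)
signal_map = calibrate({
    "alpha_power": [10.0, 12.0, 11.0, 13.0, 9.0],
    "hrv_rmssd": [40.0, 42.0, 38.0, 41.0, 39.0],
})
z = normalize({"alpha_power": 14.0, "hrv_rmssd": 40.0}, signal_map)
```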

14.2 Training Mode

Purpose:

- improve attention

- improve emotional regulation

- increase cognitive endurance.

14.3 Co-Creation Mode

Purpose:

- human and AI jointly solve problems

- AI augments pattern recognition and structure

- human supplies meaning, intention, and judgment.

14.4 Recovery Mode

Purpose:

- detect overload

- reduce stress

- restore neural efficiency.

14.5 Exploratory Mode

Purpose:

- investigate novel cognitive states

- test contemplative depth, creativity, or hybrid ideation patterns.

15. Falsifiable Hypothesis for the HGI Stack

A scientific model requires a testable claim.

Hypothesis

Participants using a closed-loop HGI Cognitive Stack combining contemplative training, neural monitoring, AI interpretation, and adaptive feedback will show greater improvements in attentional stability, working memory efficiency, emotional regulation, and cross-network neural coherence than matched participants using contemplative training alone or standard digital cognitive tools.

This is falsifiable because it can fail if:

- no measurable improvements appear,

- improvements are equal to control groups,

- the stack produces unstable or contradictory neural effects,

- or results are not replicable across subjects.
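The second failure condition (improvements equal to control groups) can be checked with a one-sided permutation test on per-participant improvement scores. The scores below are invented for illustration. With four groups, several pairwise comparisons would be run, so a multiple-comparison correction would also be needed.

```python
import random
import statistics


def permutation_test(treatment, control, n_permutations=10_000, seed=0):
    """One-sided test of H1: mean(treatment) > mean(control).

    Returns (observed difference, p-value). A large p-value means the data are
    compatible with improvements being equal to the control group."""
    rng = random.Random(seed)
    observed = statistics.fmean(treatment) - statistics.fmean(control)
    pooled = list(treatment) + list(control)
    n_t = len(treatment)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)   # relabel participants at random under the null
        diff = statistics.fmean(pooled[:n_t]) - statistics.fmean(pooled[n_t:])
        if diff >= observed:
            extreme += 1
    return observed, (extreme + 1) / (n_permutations + 1)


# Hypothetical improvement scores
observed, p_value = permutation_test([8, 9, 7, 10, 9, 8], [5, 6, 4, 6, 5, 7])
_, p_null = permutation_test([5, 6, 7], [5, 6, 7])
```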

16. Experimental Framework

A minimal experimental design could include four groups:

Group A

Meditation only

Group B

Meditation + standard neurofeedback

Group C

Meditation + neurofeedback + AI adaptive guidance

Group D

Full HGI stack with multi-layer integration

Metrics

- EEG coherence

- attentional task performance

- emotional regulation scales

- working memory measures

- learning speed

- fatigue resistance

- subjective clarity ratings.

Time horizon

- short term: 8–12 weeks

- medium term: 6–12 months

- long term: multi-year follow-up.

17. Main Technical Challenges

The model is internally coherent but technically difficult to realize.

Challenges include

- noisy neural data

- low spatial resolution of non-invasive systems

- subject variability

- overfitting by AI models

- privacy and autonomy risks

- false interpretations of internal states

- dependence on external systems.

These are not minor obstacles.

They are central research barriers.
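Overfitting and subject variability are commonly addressed with leave-one-subject-out validation, in which every sample from the evaluated person is held out of training. A minimal index generator follows; the subject labels are hypothetical, and any model can be trained and scored on the returned indices.

```python
from typing import Iterator, List, Sequence, Tuple


def leave_one_subject_out(subject_ids: Sequence[str]) -> Iterator[Tuple[str, List[int], List[int]]]:
    """Yield (held_out_subject, train_indices, test_indices).

    No sample from the held-out subject appears in training, so the score reflects
    generalization to a new person rather than memorization of one person's signals."""
    for subject in sorted(set(subject_ids)):
        test = [i for i, s in enumerate(subject_ids) if s == subject]
        train = [i for i, s in enumerate(subject_ids) if s != subject]
        yield subject, train, test


subjects = ["s1", "s1", "s2", "s2", "s3", "s3"]   # hypothetical sample-to-subject labels
folds = list(leave_one_subject_out(subjects))
```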

18. Ethical Constraints

Any real HGI stack would need safeguards.

Required principles

- cognitive autonomy

- informed consent

- reversibility of interventions

- privacy of neural data

- transparency of AI recommendations

- human override at all times

- prohibition of manipulative hidden modulation.

Without these safeguards, the system becomes coercive rather than augmentative.

19. Strategic Interpretation

The HGI Cognitive Stack is best understood as:

- a neuroscience roadmap,

- a neurotechnology architecture,

- an AI-cognition framework,

- and a long-term model for hybrid augmentation.

It does not prove the emergence of a post-human mind.

But it provides a disciplined structure for investigating whether human cognition can become progressively co-processed, extended, and optimized through stable interaction with AI systems.

20. Conclusion

The HGI Cognitive Stack offers a structured conceptual architecture for hybrid intelligence.

Its central claim is that cognition may become:

- biologically rooted,

- technologically mediated,

- computationally extended,

- and recursively optimized.

The architecture begins with the living brain, passes through sensing and translation layers, enters AI interpretation and augmentation layers, closes the loop through feedback, and expands into distributed external memory and meta-cognitive orchestration.

If validated experimentally, this stack could become the foundation for a new scientific field:

hybrid cognitive systems engineering.

Annex I

The Missing Variable in Hybrid Cognitive Systems: The “Factor X” Hypothesis

1. Introduction

The development of Hybrid General Intelligence (HGI) architectures has primarily focused on technological components, including artificial intelligence, neurotechnology, and brain–computer interfaces. These systems aim to establish bidirectional feedback loops between biological cognition and computational systems.

However, most current models implicitly assume that cognitive performance depends primarily on three variables:

- biological neural capacity

- computational augmentation

- feedback optimization mechanisms.

While these variables are critical, they may not fully account for the variability observed in cognitive performance and learning efficiency among individuals.

This annex proposes an additional factor, referred to here as Factor X, that may play a critical role in the effectiveness of hybrid cognitive systems.

2. Definition of Factor X

Factor X refers to the internal cognitive capacity of an individual to maintain stable meta-cognitive awareness and intentional control over mental processes during hybrid interaction with artificial systems.

In practical terms, Factor X includes the following abilities:

- sustained attention stability

- meta-cognitive awareness (awareness of one’s own mental states)

- intentional regulation of attention and emotional states

- rapid adaptation to feedback signals

- low susceptibility to cognitive distraction or overload.

Factor X can therefore be interpreted as a cognitive stabilization parameter within hybrid intelligence systems.
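If Factor X is treated as a parameter, one simple way to operationalize it is as a composite of the five abilities listed above. The Python sketch below assumes each ability has already been measured on a continuous scale by some instrument; the annex does not specify one, and the component names are hypothetical. The sketch z-scores each measure across a cohort, reverses the sign of distraction susceptibility, and averages with equal weights. Equal weighting is itself an assumption. Whether the abilities load on a single underlying factor would need to be tested, for example with factor analysis.

```python
from statistics import mean, pstdev

# Hypothetical component names; +1 means "higher is better", -1 is reversed.
COMPONENTS = {
    "attention_stability": +1,
    "metacognitive_awareness": +1,
    "regulation": +1,
    "feedback_adaptation": +1,
    "distraction_susceptibility": -1,  # lower susceptibility -> higher Factor X
}


def factor_x_scores(cohort: dict[str, dict[str, float]]) -> dict[str, float]:
    """Equal-weight composite of cohort-standardized component scores."""
    ids = list(cohort)
    scores = {pid: 0.0 for pid in ids}
    for comp, sign in COMPONENTS.items():
        values = [cohort[pid][comp] for pid in ids]
        mu, sd = mean(values), pstdev(values)
        for pid in ids:
            # A component with no variation in the cohort contributes nothing.
            z = 0.0 if sd == 0 else (cohort[pid][comp] - mu) / sd
            scores[pid] += sign * z / len(COMPONENTS)
    return scores
```

Because the scores are standardized within a cohort, they are relative. A participant's score says how they compare with that cohort, not how much Factor X they have in absolute terms.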

3. Relationship Between Factor X and Contemplative Training

Research in contemplative neuroscience suggests that advanced meditation practitioners often display:

- enhanced attentional stability

- improved emotional regulation

- increased meta-cognitive monitoring

- higher neural coherence across cortical networks.

These traits are consistent with the characteristics attributed to Factor X.

Thus, contemplative practices may function as training protocols that increase Factor X capacity.

If correct, this would imply that contemplative training is not merely a psychological practice but may serve as a preconditioning mechanism for effective participation in hybrid cognitive systems.

4. Hypothesis

Factor X Hypothesis

The effectiveness of Hybrid General Intelligence systems is not determined solely by technological architecture but also by the presence of a cognitive stabilization parameter (Factor X) within the human participant.

Individuals with higher Factor X capacity will demonstrate:

- faster adaptation to AI feedback loops

- more stable cognitive states during interaction

- greater improvements in hybrid cognitive performance.

5. Testable Predictions

The Factor X hypothesis generates several falsifiable predictions.

Prediction 1

Individuals with higher baseline attentional stability will perform better in closed-loop neurofeedback systems than individuals with lower baseline stability.

Prediction 2

Participants trained in contemplative cognitive regulation will adapt more efficiently to hybrid human–AI interaction environments than untrained participants.

Prediction 3

Hybrid cognitive training programs that include meta-cognitive training components will outperform programs based on technological augmentation alone.

Prediction 4

Neurophysiological markers associated with attentional stability—such as increased gamma-band synchronization and reduced Default Mode Network activity—will correlate with improved hybrid system performance.
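Predictions 1 and 4 are correlational. Each could be tested by estimating the association between a baseline marker and a hybrid-performance score. The Python sketch below computes a Pearson correlation and a one-sided permutation p-value for a predicted positive association. The function names are hypothetical, and a Pearson correlation with a permutation test is one reasonable choice among several. For Default Mode Network activity, where Prediction 4 expects a negative relationship, the marker values could be negated before testing.

```python
import random
from statistics import mean


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def permutation_test(x, y, n_perm=10_000, seed=0):
    """One-sided permutation test of H1: r > 0.

    Shuffling y breaks any real pairing with x. The p-value is the share of
    shuffles whose r is at least as large as the observed r.
    """
    rng = random.Random(seed)
    observed = pearson_r(x, y)
    shuffled = list(y)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if pearson_r(x, shuffled) >= observed:
            hits += 1
    # Adding 1 to numerator and denominator counts the observed pairing itself.
    return observed, (hits + 1) / (n_perm + 1)
```

For example, `permutation_test(baseline_stability, performance)` would return the observed r and its p-value. A near-zero or negative r in an adequately powered sample would count against Prediction 1.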

6. Experimental Framework

To test the Factor X hypothesis, a controlled study could compare three groups.

Group A

Participants using hybrid AI–neurotechnology systems without contemplative training.

Group B

Participants receiving contemplative training prior to hybrid system use.

Group C

Participants receiving both contemplative training and AI-guided neurofeedback optimization.

Measured variables could include:

- learning rate

- attentional stability

- neural coherence

- task performance under cognitive load

- resilience to distraction.
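For any single measured variable, such as learning rate, the three-group design could be analyzed with a one-way ANOVA. The Python sketch below computes only the F statistic and its degrees of freedom. It assumes one scalar score per participant and the usual ANOVA assumptions of roughly normal scores and similar group variances. In practice, SciPy's `scipy.stats.f_oneway` computes the same statistic and also returns a p-value. A significant F would only show that the group means differ. The specific contrasts implied by Predictions 2 and 3, such as Group B versus Group A, would need planned post-hoc comparisons.

```python
from statistics import mean


def one_way_anova_f(groups: dict[str, list[float]]) -> tuple[float, int, int]:
    """Return (F, df_between, df_within) for a one-way ANOVA across groups."""
    means = {name: mean(vals) for name, vals in groups.items()}
    all_values = [v for vals in groups.values() for v in vals]
    grand_mean = mean(all_values)
    k, n = len(groups), len(all_values)

    # Spread of group means around the grand mean, weighted by group size.
    ss_between = sum(len(vals) * (means[name] - grand_mean) ** 2
                     for name, vals in groups.items())
    # Spread of individual scores around their own group mean.
    ss_within = sum((v - means[name]) ** 2
                    for name, vals in groups.items() for v in vals)

    df_between, df_within = k - 1, n - k
    if ss_within == 0:
        raise ValueError("zero within-group variance; F is undefined")
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within
```

The same function would be applied separately to each measured variable. Testing five variables this way would call for a correction for multiple comparisons.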

7. Implications for Hybrid Cognitive System Design

If Factor X proves to be a significant predictor of hybrid system performance, the design of hybrid intelligence systems would need to incorporate human cognitive preparation protocols in addition to technological development.

This would imply that the future of hybrid intelligence does not depend solely on advances in artificial intelligence or neurotechnology but also on systematic training of the human cognitive substrate.

Such training could include:

- contemplative attention training

- meta-cognitive awareness exercises

- emotional regulation training

- cognitive load management.

8. Long-Term Implications

Over extended timescales, widespread integration of hybrid cognitive systems combined with cognitive stabilization training could influence:

- educational models

- cognitive skill distribution in populations

- patterns of learning and creativity

- human–machine collaboration structures.

Such influence would be unlikely to arise through immediate biological mutation; the more plausible pathway would involve progressive neurocognitive adaptation mediated by technological environments.

9. Conclusion

The Factor X hypothesis proposes that the success of hybrid human–AI cognitive systems may depend not only on technological sophistication but also on the cognitive capabilities of the human participant.

Future research should therefore consider hybrid intelligence as a co-adaptive system involving both biological and technological components.

Understanding and measuring Factor X may prove essential for the development of stable and effective Hybrid General Intelligence architectures.

Annex II

Evolutionary Pathways Toward Hybrid Cognition

A Scenario Model for the 22nd–25th Centuries

1. Introduction

The emergence of Hybrid General Intelligence (HGI) architectures raises an important long-term question: how might sustained interaction between human cognition and artificial intelligence influence the future evolution of human cognitive systems?

Historically, biological evolution has been shaped by environmental pressures such as climate, food availability, disease, and social competition. In the modern technological era, however, a new class of environmental influence has emerged: cognitive-technological environments.

Artificial intelligence systems, neurotechnology, and brain–computer interfaces may gradually become part of the human cognitive ecosystem. If this integration becomes widespread and persistent, it may influence the trajectory of human cognitive development over long time horizons.

This annex presents a scenario-based evolutionary model, exploring possible pathways for hybrid cognition between the 22nd and 25th centuries. The purpose is not to predict the future but to outline plausible trajectories that can inform research and technological design.

2. Evolutionary Framework

Three evolutionary mechanisms are relevant when considering hybrid cognitive systems:

2.1 Biological Evolution

Changes in genetic structure across generations through mutation and natural selection.

Timescale: tens of thousands of years.

A few centuries span only on the order of ten to twenty human generations (at roughly 25 to 30 years per generation), so biological evolution alone is unlikely to account for major cognitive transformations within that window.

2.2 Cultural Evolution

Transmission of knowledge, practices, and technologies across generations.

Timescale: decades to centuries.

Cultural evolution has historically produced dramatic transformations in human society, including the development of language, science, and digital technology.

2.3 Technological Evolution

Rapid innovation cycles that reshape the environment in which human cognition operates.

Timescale: years to decades.

Hybrid cognition would primarily emerge through technological and cultural evolution, rather than immediate biological mutation.

3. Hybrid Cognitive Environments

The concept of hybrid cognitive environments refers to settings in which human cognitive processes interact continuously with computational systems.

Examples may include:

- neuroadaptive learning systems

- brain–computer interfaces

- AI-assisted cognitive augmentation tools

- immersive augmented reality cognitive spaces.

Over long periods, individuals raised within such environments may develop cognitive habits and neural patterns that differ from those of earlier generations.

4. Scenario Model: Four Evolutionary Phases

The transition toward hybrid cognition can be conceptualized as occurring in four broad phases.

Phase I

Early Hybrid Interaction

Late 21st – Early 22nd Century

During this phase, hybrid cognition remains experimental and limited to specific domains.

Key developments may include:

- advanced brain–computer interfaces

- AI-assisted neurofeedback systems

- cognitive augmentation tools for learning and productivity

- integration of contemplative cognitive training with neurotechnology.

Human cognition remains fundamentally biological, but technological systems increasingly assist with tasks such as:

- memory retrieval

- information synthesis

- decision support.

Hybrid interaction is episodic rather than continuous.

Phase II

Adaptive Cognitive Augmentation

Mid-22nd Century

Hybrid cognitive systems become more widely adopted.

Key characteristics may include:

- continuous AI-assisted learning environments

- neural monitoring systems that adapt digital environments to cognitive states

- large-scale integration of cognitive augmentation tools in education.

At this stage, human brains may undergo long-term neuroplastic adaptation to environments in which:

- information retrieval is partially externalized

- cognitive load is distributed across biological and computational systems

- attentional training becomes a central educational skill.

This phase represents the stabilization of hybrid cognitive practices within society.

Phase III

Integrated Hybrid Cognition

23rd–24th Centuries

In this phase, hybrid cognition becomes deeply embedded in everyday life.

Possible developments include:

- seamless interaction between biological cognition and AI systems

- persistent external memory systems integrated with personal cognitive workflows

- large-scale collaborative human–AI cognitive networks.

The brain may adapt to operate in environments where:

- knowledge is dynamically accessed rather than memorized

- decision-making occurs through hybrid consultation with AI systems

- complex problem solving occurs through distributed human–AI networks.

Cognition becomes increasingly networked and collaborative.

Phase IV

Mature Hybrid Cognitive Ecosystems

24th–25th Centuries

Hybrid cognition may eventually reach a stage where human intelligence operates within large-scale cognitive ecosystems composed of both biological and artificial components.

Possible features include:

- globally interconnected cognitive infrastructures

- advanced neural interfaces enabling low-friction interaction with computational systems

- dynamic knowledge networks linking individuals and AI systems.

At this stage, human cognition may function less as an isolated process and more as a node within a distributed intelligence network.

However, biological cognition would likely remain the origin of:

- subjective experience

- creativity

- ethical reasoning

- intentionality.

Thus, even in mature hybrid systems, human cognition would likely retain a central role.

5. Potential Neural Adaptations

Over centuries of exposure to hybrid cognitive environments, long-term neuroplastic adaptations may occur.

Possible areas of change include:

Attentional Networks

Improved stability and control of attentional systems, particularly in prefrontal and parietal networks.

Meta-Cognitive Monitoring

Enhanced ability to monitor and regulate internal cognitive processes.

Information Integration

Stronger functional connectivity across distributed cortical networks.

Cognitive Flexibility

Greater ability to shift between tasks and integrate diverse streams of information.

These changes would most likely occur through developmental plasticity rather than genetic mutation.

6. Educational Implications

Hybrid cognition would likely transform educational systems.

Future education may include:

- attentional training and contemplative practices

- AI-assisted adaptive learning environments

- hybrid problem-solving exercises combining human intuition and AI analysis.

In such systems, cognitive training may focus less on memorization and more on:

- critical reasoning

- creative synthesis

- collaborative intelligence.

7. Risks and Uncertainties

Hybrid cognitive evolution may also introduce challenges.

Potential risks include:

- overdependence on technological systems

- cognitive inequality between augmented and non-augmented populations

- privacy concerns related to neural data

- potential erosion of cognitive autonomy.

Addressing these risks would require robust ethical frameworks and governance structures.

8. Conclusion

Hybrid General Intelligence systems represent a possible future stage in the long relationship between human cognition and technological environments.

Such a transition is unlikely to occur through immediate biological mutation; the more plausible pathway involves progressive cognitive adaptation through cultural and technological evolution.

Over centuries, sustained interaction between human neural systems and artificial intelligence may lead to new forms of hybrid cognition, reshaping how humans learn, collaborate, and solve complex problems.

Future research should therefore explore hybrid intelligence not only as a technological challenge but also as a long-term evolutionary phenomenon within the cognitive ecosystem of human civilization.