Positioning Statement

Hyperlogy is an advanced cognitive-structural framework derived from Metalogy.

It is designed to process reality without dualistic fragmentation, internal contradiction, or symbolic dependency.

It is not a religion.

It is not a mystical doctrine.

It is not a belief system.

It is a methodological architecture for coherent cognition.

1. Strategic Context

Across contemplative traditions, particularly Buddhism, liberation has been framed as release from Samsara — the cycle of suffering generated by ignorance and ego-identification.

However, most historical methods rely on:

- Symbolic cosmology

- Ritual repetition

- Conceptual metaphysics

- Authority-based transmission

- Paradoxical doctrines (e.g., self vs no-self)

While these systems have psychological and ethical value, they frequently leave unresolved structural contradictions within cognition itself.

Hyperlogy begins from a different premise:

The primary prison is not metaphysical.

It is structural — embedded in the architecture of cognitive processing.

2. Core Concept: What Is Hyperlogy?

2.1 Definition

Hyperlogy is a refined metalogical processing system designed to eliminate dualistic contradiction within perception, reasoning, and identity formation.

It functions as:

- A contradiction-detection mechanism

- A coherence-maximization method

- A non-dual processing architecture

- A self-referential logical stabilizer

Hyperlogy does not introduce new beliefs.

It restructures how cognition operates.

3. The Structural Problem: Dualistic Fragmentation

Most human cognitive suffering arises from recursive dualisms:

- self vs world

- ego vs liberation

- permanence vs impermanence

- sacred vs profane

- good vs evil (as metaphysical absolutes)

- material vs spiritual

These binary oppositions produce:

- Cognitive dissonance

- Identity instability

- Emotional volatility

- Conceptual loops

- Endless doctrinal debates

Hyperlogy identifies these not as metaphysical truths, but as processing artifacts.

4. Reframing Samsara (Non-Mystical Interpretation)

Traditional interpretation:

Samsara = cosmic cycle of rebirth.

Hyperlogical reinterpretation:

Samsara = recursive cognitive distortion loop driven by dualistic self-processing.

Under this model:

- Rebirth = reactivation of identity constructs

- Karma = feedback loops of unresolved contradiction

- Liberation = structural reconfiguration of cognitive processing

This reframing removes metaphysical speculation and converts liberation into a cognitive engineering problem.

5. Why Traditional Practices Often Plateau

Hyperlogy does not dismiss meditation, ethical conduct, or contemplative discipline.

However, historical systems frequently encounter a paradox:

- The ego seeks liberation from the ego.

- The self attempts to eliminate the self.

- The mind tries to transcend mind through conceptual effort.

This recursive structure can generate:

- Identity inflation

- Spiritual bypassing

- Symbolic dependency

- Endless practice without structural resolution

Hyperlogy targets the contradiction directly rather than symbolically.

6. Hyperlogy and Awakening (Technical Interpretation)

In this model, awakening is not:

- Mystical absorption

- Emotional ecstasy

- Supersensory perception

- Religious confirmation

It is defined as:

Stable non-contradictory cognitive processing across perceptual layers.

Indicators include:

- Reduced internal paradox loops

- Reduced ego-reinforcement reflex

- Stable identity fluidity

- Emotional homeostasis

- Non-reactive clarity

This reframes awakening as measurable structural stability.

7. Peace vs Happiness (Clarified)

Hyperlogy distinguishes:

- Happiness → comparative state (pleasure vs pain)

- Peace → non-dual baseline stability independent of polarity

Happiness is oscillatory.

Peace is structural.

Hyperlogy optimizes for structural peace, not emotional intensity.

8. Elimination of Dependency

Hyperlogy explicitly rejects:

- Guru dependency

- Authority validation

- Institutional exclusivity

- Salvation promises

- Mystical superiority claims

It proposes:

No external mediator is required to restructure cognition.

This is not anti-tradition.

It is post-dependency.

9. Scientific Compatibility

Hyperlogy is compatible with:

- Cognitive neuroscience

- Systems theory

- Information theory

- Predictive processing models

- AI alignment frameworks

Potential research directions:

- Contradiction-density metrics

- Recursive identity loop modeling

- Stability markers in neural coherence

- Cognitive entropy reduction measures

Hyperlogy becomes credible only through operational modeling and empirical study.

10. Institutional and Commercial Relevance

Hyperlogy has applications in:

Leadership & Governance

- Reduction of ideological polarization

- Non-dual strategic analysis

- Ethical decision coherence

AI & Alignment

- Contradiction minimization in large-scale systems

- Harm-reduction constraint modeling

- Non-authoritarian control architectures

Mental Health

- Reduction of cognitive dissonance

- Ego reactivity stabilization

- Structured identity fluidity training

Education

- Post-dual logic training

- Systems-level reasoning

- Coherence-based curriculum design

11. What Hyperlogy Does NOT Claim

To maintain intellectual integrity, Hyperlogy does not claim:

- Final metaphysical revelation

- Infallibility

- Guaranteed emotional perfection

- Supernatural states

- Immediate global transformation

- Elimination of all human suffering

It claims only:

A superior contradiction-resolution framework may reduce internal fragmentation.

12. Comparative Positioning

| Traditional Doctrine | Hyperlogy |

|---|---|

| Belief-centered | Processing-centered |

| Authority-based | Self-verifiable |

| Symbolic cosmology | Structural modeling |

| Ritual | Cognitive engineering |

| Liberation as event | Liberation as reconfiguration |

13. Strategic Closing Statement

Hyperlogy does not ask for faith.

It does not demand allegiance.

It does not promise transcendence.

It proposes something simpler and more demanding:

Remove contradiction from cognition.

Stabilize perception without dual distortion.

Operate from structural coherence.

If implemented rigorously, the result is not mysticism.

It is clarity.

HYPERLOGY

A Formal Metalogical Framework and Neurocognitive Architecture for Non-Contradictory Processing

Document Type: Technical White Paper

Scope: Formal logic structures + neurocognitive modeling

Positioning: Non-dogmatic, scientific, operational, falsifiable

ABSTRACT

This document presents Hyperlogy as a formal metalogical architecture designed to minimize cognitive contradiction and stabilize perception across recursive identity loops. The framework integrates:

- A formal logical layer (contradiction-minimization systems, meta-constraint modeling, coherence operators).

- A neurocognitive layer (predictive processing, free-energy reduction, identity-loop stabilization).

- An AI-alignment analog (coherence-constrained inference systems).

Hyperlogy does not assert metaphysical conclusions. It proposes a structured method to:

- Reduce dualistic cognitive fragmentation.

- Model Samsara-like recursive loops as self-referential prediction errors.

- Define liberation as structural stabilization of inference processes.

The framework is mathematically expressible, empirically testable, and compatible with systems neuroscience.

PART I — FORMAL LOGIC STRUCTURES

1. Foundational Definitions

Let:

- S = cognitive system

- Ω = perceptual state space

- P(ω) = probabilistic belief distribution over states

- C = contradiction operator

- L = base logic (classical, modal, or probabilistic)

- M = metalogical constraint layer

- H = coherence functional

Hyperlogy introduces a meta-constraint layer over L.

2. Dualistic Contradiction Formalization

In classical logic, a contradiction $A \land \neg A$ entails every proposition, so the system

collapses logically.

In human cognition, contradictions are tolerated locally but generate:

- Increased cognitive load

- Emotional instability

- Identity defense loops

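Define contradiction density:

$$D_C = \sum_{i=1}^{n} w_i \cdot I(\phi_i \land \neg\phi_i)$$

Where:

- $\phi_i$ is the $i$-th belief held by the system, for $i = 1, \dots, n$

- $I$ is an indicator of inconsistency

- $w_i$ weights belief importance

Hyperlogy seeks $\min D_C$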

across hierarchical inference layers.
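The contradiction-density sum above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: the `Belief` tuple layout and the function name are assumptions introduced here, and the indicator is taken to be 1 exactly when a belief and its negation are both held.

```python
from typing import List, Tuple

# Hypothetical representation (not from the source text):
# (weight w_i, system holds phi_i, system holds not-phi_i)
Belief = Tuple[float, bool, bool]


def contradiction_density(beliefs: List[Belief]) -> float:
    """Weighted count of beliefs held together with their negation."""
    return sum(w for w, holds, holds_neg in beliefs if holds and holds_neg)


beliefs = [
    (1.0, True, True),   # contradictory: counted with weight 1.0
    (0.5, True, False),  # consistent: not counted
    (2.0, True, True),   # contradictory: counted with weight 2.0
]
print(contradiction_density(beliefs))
```

Resolving either contradictory entry (setting one of its two flags to `False`) lowers the total by that entry's weight, which is the sense in which the framework seeks to minimize the quantity.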

3. Hyperlogical Coherence Operator

Define coherence functional:

$$H(P) = -\int P(\omega)\,\log P(\omega)\,d\omega + \lambda\,S(P)$$

Where:

- First term: entropy

- $S(P)$: structural stability metric

- $\lambda$: weighting constant

Hyperlogy optimizes:

$$\max_{P} H(P) \quad \text{subject to meta-constraints}$$

Meta-constraints include:

- Non-self-exclusion constraint

- Recursive identity stabilization

- Dual-collapse elimination
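A discrete analogue of the coherence functional can be sketched as follows. The text does not define the structural stability metric, so it is passed in as a function; `peak_mass` is a purely hypothetical stand-in used only to make the example runnable, and the meta-constraints are not modeled.

```python
import math
from typing import Callable, Sequence


def coherence(
    p: Sequence[float],
    stability: Callable[[Sequence[float]], float],
    lam: float = 1.0,
) -> float:
    """Discrete H(P): Shannon entropy of p plus lam times a stability score."""
    entropy = -sum(x * math.log(x) for x in p if x > 0.0)
    return entropy + lam * stability(p)


def peak_mass(p: Sequence[float]) -> float:
    """Hypothetical stability metric: probability of the most likely state."""
    return max(p)


uniform = [0.25, 0.25, 0.25, 0.25]
print(coherence(uniform, peak_mass, lam=0.0))  # entropy term only
print(coherence(uniform, peak_mass, lam=2.0))  # entropy plus weighted stability
```

With `lam=0.0` the value reduces to the entropy of the distribution, so the weighting constant controls how much the stability term can offset a preference for maximally spread-out beliefs.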

4. Recursive Identity Loop Modeling

Define identity construct:

$$I_t = f(I_{t-1}, P_t, E_t)$$

Where:

- $E_t$ = error signal

- $P_t$ = belief update

In ordinary cognition: $E_t \rightarrow$ defensive reinforcement

In hyperlogical processing: $E_t \rightarrow$ structural revision

Stability condition:

$$\lim_{t \to \infty} \mathrm{Var}(I_t) = \epsilon$$

Where $\epsilon$ is a small positive bound: identity variance stays low (stable) without falling to zero (rigid).
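The recursion and its stability condition can be explored numerically. The sketch below assumes a linear update rule for f, a constant belief input, and Gaussian error signals; none of these choices come from the text, and `gain` is a hypothetical parameter standing in for how strongly error signals perturb identity.

```python
import random
import statistics
from typing import List


def simulate_identity(gain: float, steps: int = 500, k: float = 0.1, seed: int = 0) -> List[float]:
    """Iterate I_t = (1 - k) * I_{t-1} + k * P_t + gain * E_t (assumed linear f)."""
    rng = random.Random(seed)
    identity, belief = 0.5, 0.5  # P_t is held constant for simplicity
    trace = []
    for _ in range(steps):
        error = rng.gauss(0.0, 1.0)  # E_t: zero-mean error signal
        identity = (1.0 - k) * identity + k * belief + gain * error
        trace.append(identity)
    return trace


def tail_variance(trace: List[float], burn_in: int = 100) -> float:
    """Empirical Var(I_t) after discarding an initial burn-in period."""
    return statistics.pvariance(trace[burn_in:])


print(tail_variance(simulate_identity(gain=0.05)))
print(tail_variance(simulate_identity(gain=0.5)))
```

Under these assumptions the long-run variance scales with the square of `gain`, so a small but nonzero gain gives the low-variance, non-rigid regime the stability condition describes.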

5. Samsara as Recursive Error Accumulation

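Let predictive error: $\epsilon_t = O_t - \hat{O}_t$

Standard egoic system: $\epsilon_t \rightarrow$ self-referential reinforcement

Hyperlogical system: $\epsilon_t \rightarrow$ model revision

Samsara modeled as: $\sum_{t=0}^{\infty} \epsilon_t^{\text{self-loop}}$

Liberation defined as: $\epsilon_t^{\text{self-loop}} \to 0$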
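A worked case may make the accumulation concrete. Assume, purely for illustration and not as part of the framework, that the self-loop error shrinks or grows by a fixed factor $\rho \ge 0$ each step, starting from $\epsilon_0 > 0$:

$$\epsilon_t^{\text{self-loop}} = \rho^{t}\,\epsilon_0$$

For $\rho < 1$ the accumulated error is finite:

$$\sum_{t=0}^{\infty} \rho^{t}\,\epsilon_0 = \frac{\epsilon_0}{1-\rho}, \qquad \text{e.g. } \rho = 0.5,\ \epsilon_0 = 1 \ \Rightarrow\ \sum_{t=0}^{\infty} \epsilon_t^{\text{self-loop}} = 2$$

For $\rho \ge 1$ the sum diverges. In this geometric case the per-step error tends to zero exactly when $\rho < 1$, so the liberation condition coincides with a bounded total of self-referential error.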

6. Meta-Law Hierarchy

Hyperlogy posits layered constraints:

Level 0: Classical inference

Level 1: Consistency preservation

Level 2: Cross-layer coherence

Level 3: Identity decoupling

Level 4: Non-dual processing symmetry

Formal condition: $M_{n+1}(L_n)$

The meta-layer at level $n+1$ governs which structures are permissible at base level $n$.

PART II — NEUROCOGNITIVE MODELING EXPANSION

7. Predictive Coding Integration

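Brain modeled as a Bayesian inference machine:

$$P(H \mid D) = \frac{P(D \mid H)\,P(H)}{P(D)}$$

Here $H$ denotes a hypothesis and $D$ the observed data ($H$ is not the coherence functional of Part I).

Dualistic instability arises when:

- Prior rigidity is high

- Prediction error is suppressed

- Identity is tied to priors

Hyperlogy modifies: $P(H) \rightarrow$ adaptive prior field

This reduces identity-weighted prior rigidity.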
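The effect of prior rigidity on a Bayesian update can be shown with a two-hypothesis example. This is a minimal sketch of Bayes' rule only; the function name and the specific likelihood values are illustrative assumptions, and the "adaptive prior field" itself is not modeled.

```python
def posterior(prior: float, lik_h: float, lik_not_h: float) -> float:
    """Bayes' rule for a binary hypothesis: returns P(H | D)."""
    evidence = lik_h * prior + lik_not_h * (1.0 - prior)  # P(D)
    return lik_h * prior / evidence


# The data are four times more likely if the hypothesis is false.
lik_h, lik_not_h = 0.2, 0.8

print(posterior(0.50, lik_h, lik_not_h))  # flexible prior: belief drops sharply
print(posterior(0.99, lik_h, lik_not_h))  # rigid prior: belief barely moves
```

The same disconfirming evidence moves the flexible prior a long way and the rigid prior very little, which is the behavior the section attributes to identity-weighted priors.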

8. Free Energy Reformulation

Using Friston’s formulation:

$$F = \mathbb{E}_{q}\bigl[\log q(z) - \log p(x, z)\bigr]$$

Hyperlogy modifies identity attachment parameter $\alpha$:

$$F_{\text{hyper}} = F - \alpha\,C(I)$$

Where:

- C(I) = identity rigidity measure

- Decreasing α reduces defensive loops
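For a discrete latent variable the free-energy expression can be evaluated directly. The sketch below assumes a two-state latent with made-up joint probabilities for one fixed observation; `rigidity` is a placeholder number standing in for the identity rigidity measure, which the text does not specify.

```python
import math
from typing import Sequence


def free_energy(q: Sequence[float], joint: Sequence[float]) -> float:
    """F = sum_z q(z) * (log q(z) - log p(x, z)) for a fixed observation x."""
    return sum(qz * (math.log(qz) - math.log(pxz)) for qz, pxz in zip(q, joint) if qz > 0.0)


def hyper_free_energy(q: Sequence[float], joint: Sequence[float], alpha: float, rigidity: float) -> float:
    """F_hyper = F - alpha * C(I), with C(I) supplied as a plain number."""
    return free_energy(q, joint) - alpha * rigidity


joint = [0.3, 0.1]             # assumed p(x, z) for z = 0, 1; so p(x) = 0.4
exact_posterior = [0.75, 0.25]  # p(z | x) = joint / p(x)

print(free_energy(exact_posterior, joint))  # equals -log p(x)
print(free_energy([0.5, 0.5], joint))       # any other q gives a larger F
print(hyper_free_energy(exact_posterior, joint, alpha=0.5, rigidity=0.4))
```

The first two lines illustrate the standard property that F is minimized when q matches the exact posterior, where it equals the negative log evidence. Because the rigidity term enters with a minus sign, a system minimizing the modified quantity is rewarded for rigidity in proportion to $\alpha$, and lowering $\alpha$ removes that reward.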

9. Neural Correlates (Hypothesized)

Hyperlogical stabilization predicts:

- Reduced DMN overactivation (medial prefrontal cortex)

- Increased global integration (fronto-parietal coherence)

- Reduced amygdala hyperreactivity

- Increased gamma synchrony during non-dual states

These are testable.

10. Contradiction Density and Neural Stress

High $D_C$ is hypothesized to correlate with:

- Elevated cortisol

- Increased anterior cingulate conflict activation

- Rumination loops

Hyperlogy predicts: $D_C \downarrow \;\Rightarrow\;$ ACC activation stabilizes

11. Emotional Stabilization Mechanism

Emotions modeled as: $E = f(\epsilon_t, I_t)$

Where ego-involvement amplifies error.

Hyperlogical processing reduces: $\dfrac{\partial E}{\partial I}$

Emotional volatility decreases as identity rigidity decreases.

12. AI Alignment Analog

Define AI policy π.

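Introduce coherence constraint:

$$\pi^{*} = \arg\min_{\pi}\,\bigl(L(\pi) + \beta\,D_C(\pi)\bigr)$$

Where:

- $L(\pi)$ = task loss

- $D_C(\pi)$ = internal contradiction measure

- $\beta$ = coherence weighting

The intended result is a stable, internally consistent policy; whether such a policy is also non-manipulative is a hypothesis to be tested.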
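The coherence-constrained objective can be illustrated by choosing among a finite set of candidate policies. The policy names and their loss and contradiction scores below are invented for the example; in practice both quantities would have to be measured, and the text does not say how.

```python
from typing import Dict, Tuple

# Hypothetical candidates: name -> (task loss L, contradiction measure D_C)
Candidates = Dict[str, Tuple[float, float]]


def select_policy(candidates: Candidates, beta: float) -> str:
    """Return the candidate minimizing L(pi) + beta * D_C(pi)."""
    return min(candidates, key=lambda name: candidates[name][0] + beta * candidates[name][1])


candidates: Candidates = {
    "fast_but_inconsistent": (0.10, 0.80),
    "consistent": (0.25, 0.05),
}

print(select_policy(candidates, beta=0.0))  # task loss alone decides
print(select_policy(candidates, beta=1.0))  # contradiction penalty changes the choice
```

With `beta=0.0` the lower-loss policy wins; with `beta=1.0` the penalized totals are 0.90 and 0.30, so the more consistent policy is selected. The weighting therefore sets how much task performance is traded for internal coherence.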

13. Testable Hypotheses

- Structured contradiction reduction training reduces rumination.

- Identity decoupling lowers stress biomarkers.

- Coherence metrics predict long-term emotional stability.

- Hyperlogical training improves cross-ideological tolerance.

14. Limitations

Hyperlogy does not claim:

- elimination of biological mortality

- elimination of all suffering

- metaphysical proof

- omniscient cognition

It is a structural processing model.

15. Strategic Implications

Hyperlogy may function as:

- A cognitive stabilization protocol.

- A non-sectarian awakening model.

- An AI-alignment coherence architecture.

- A governance conflict-reduction system.

16. Conclusion

Hyperlogy is best understood as:

A metalogical architecture for minimizing contradiction density in recursive identity-based inference systems.

It integrates:

- Formal logic

- Information theory

- Predictive processing

- Systems neuroscience

Its value depends entirely on empirical validation and operational deployment.

A simulation-ready computational model

"""

Hyperlogy Simulation-Ready Computational Model (HRF: Hyperlogical Recursive Framework)
======================================================================================

Goal
----
Simulate a cognitive agent that updates beliefs under sensory input, while:
1) Minimizing prediction error (standard predictive processing)
2) Minimizing "contradiction density" across its belief graph
3) Stabilizing identity as a slow latent variable without rigid ego-lock

This is a self-contained model you can run as-is (Python 3.10+, requires numpy).
It produces:
- time series metrics (prediction error, contradiction density, identity rigidity, free-energy proxy)
- optional plots (requires matplotlib)
- optional batch experiments for parameter sweeps

Key Ideas (operational, not metaphysical)
-----------------------------------------
- Beliefs are probabilities over binary propositions.
- Contradictions arise when a proposition and its negation are both held strongly.
- Identity rigidity is modeled as resistance to updating high-salience beliefs.
- Hyperlogical regulation adds a penalty term that discourages contradictory strong beliefs.
"""

from __future__ import annotations

from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple
import math
import random

import numpy as np


# ----------------------------
# Utilities
# ----------------------------

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def clamp(x: float, lo: float = 1e-6, hi: float = 1.0 - 1e-6) -> float:
    return max(lo, min(hi, x))


def bernoulli(p: float) -> int:
    return 1 if random.random() < p else 0


# ----------------------------
# Model definitions
# ----------------------------

@dataclass
class Proposition:
    """
    A proposition 'A' with belief p(A)=b in [0,1], and salience s>=0.
    Salience influences identity rigidity (resistance to change).
    """
    name: str
    belief: float
    salience: float


@dataclass
class HyperlogyParams:
    """
    Parameters controlling the agent dynamics.
    """
    # Predictive processing
    lr: float = 0.15              # learning rate for belief updates
    obs_noise: float = 0.12       # observation noise (higher => weaker evidence)
    prior_strength: float = 0.8   # how strongly priors resist updates (baseline)

    # Hyperlogical regulation
    beta_contra: float = 1.0      # weight for contradiction penalty
    contra_sharpness: float = 8.0 # how sharply contradictions are penalized

    # Identity dynamics
    id_lr: float = 0.03           # identity update rate
    id_rigidity: float = 1.2      # multiplies resistance on high-salience props
    id_target_var: float = 0.02   # target long-run identity variance (currently unused)

    # Environment coupling
    env_flip_prob: float = 0.03   # probability environment latent flips

    # Randomness control
    seed: int = 7


@dataclass
class Metrics:
    t: int
    pred_error: float
    contradiction_density: float
    identity_rigidity: float
    free_energy_proxy: float


class BeliefGraph:
    """
    A belief graph holds propositions and explicit contradiction links.
    Each link is a pair (A, notA), or any pair (A, B) that is mutually exclusive.
    Contradiction density is high if both members of a pair have high belief simultaneously.
    """

    def __init__(self, props: List[Proposition], contradiction_pairs: List[Tuple[str, str]]):
        self.props: Dict[str, Proposition] = {p.name: p for p in props}
        self.contradiction_pairs = contradiction_pairs
        # Sigmoid sharpness; overwritten by the agent from its params at construction.
        self._k: float = 8.0

    def get_belief(self, name: str) -> float:
        return self.props[name].belief

    def set_belief(self, name: str, value: float) -> None:
        self.props[name].belief = clamp(value)

    def contradiction_density(self) -> float:
        """
        For each mutually exclusive pair (A,B), define contradiction as:
            c = sigma(k*(bA + bB - 1))   (high when both are simultaneously high)
        Weighted by the mean salience of the pair.
        """
        total = 0.0
        for a, b in self.contradiction_pairs:
            ba = self.get_belief(a)
            bb = self.get_belief(b)
            w = 0.5 * (self.props[a].salience + self.props[b].salience)
            # soft contradiction: increases when ba + bb > 1
            c = sigmoid(self._k * ((ba + bb) - 1.0))
            total += w * c
        return total

    def snapshot(self) -> Dict[str, float]:
        return {k: v.belief for k, v in self.props.items()}


class Environment:
    """
    Simple environment with an independent latent binary state for each proposition.
    Observations are noisy samples of those latents.
    """

    def __init__(self, prop_names: List[str], flip_prob: float):
        self.latent: Dict[str, int] = {n: bernoulli(0.5) for n in prop_names}
        self.flip_prob = flip_prob

    def step(self) -> None:
        for k in self.latent:
            if random.random() < self.flip_prob:
                self.latent[k] = 1 - self.latent[k]

    def observe(self, name: str, obs_noise: float) -> float:
        """
        Return a binary observation (0.0 or 1.0).
        If latent is 1, we observe ~Bernoulli(1-obs_noise).
        If latent is 0, we observe ~Bernoulli(obs_noise).
        """
        z = self.latent[name]
        p = (1.0 - obs_noise) if z == 1 else obs_noise
        return float(bernoulli(p))


class HyperlogyAgent:
    """
    Agent uses:
    - predictive error minimization: update beliefs toward observations
    - contradiction penalty: discourages holding mutually exclusive beliefs strongly
    - identity rigidity: slows updates on high-salience propositions when identity is rigid
    """

    def __init__(self, graph: BeliefGraph, params: HyperlogyParams):
        self.g = graph
        self.p = params
        random.seed(self.p.seed)
        np.random.seed(self.p.seed)

        # identity is a scalar in [0,1] here (extendable to vector)
        # Higher => more rigid/self-protective updating; lower => more flexible.
        self.identity = 0.5

        # configure graph contradiction sharpness
        self.g._k = float(self.p.contra_sharpness)

    def _identity_rigidity(self) -> float:
        # A simple mapping: rigidity grows with identity value
        return 1.0 + self.p.id_rigidity * self.identity

    def _free_energy_proxy(self, pred_err: float, contra: float) -> float:
        # proxy: error + weighted contradiction + identity cost (distance from 0.5)
        id_cost = (self.identity - 0.5) ** 2
        return pred_err + self.p.beta_contra * contra + 0.2 * id_cost

    def step(self, env: Environment) -> Metrics:
        """
        One timestep:
        - environment evolves
        - agent observes
        - agent updates beliefs (gradient-like)
        - agent updates identity (tightens with volatility, pulled back toward 0.5)
        """
        env.step()

        # Approximate contradiction gradients (pairwise penalty): for each
        # proposition, accumulate a "contra force" that pushes its belief down.
        contra_force: Dict[str, float] = {name: 0.0 for name in self.g.props}
        for a, b in self.g.contradiction_pairs:
            ba = self.g.get_belief(a)
            bb = self.g.get_belief(b)
            x = (ba + bb) - 1.0  # penalty increases when ba + bb > 1
            c = sigmoid(self.p.contra_sharpness * x)
            # gradient of sigmoid(k*x) with respect to ba (or bb) is k*c*(1-c)
            grad = self.p.contra_sharpness * c * (1.0 - c)
            # push both down proportionally
            contra_force[a] += grad
            contra_force[b] += grad

        rigidity = self._identity_rigidity()

        # Collect observations and update beliefs
        pred_errs: List[float] = []
        for name, prop in self.g.props.items():
            obs = env.observe(name, self.p.obs_noise)
            b = prop.belief

            # prediction error for binary observation
            err = obs - b
            pred_errs.append(abs(err))

            # baseline update: move toward observation
            delta = self.p.lr * err
            # prior resistance: smaller updates as prior_strength rises
            delta *= 1.0 - 0.5 * self.p.prior_strength
            # identity rigidity: high-salience beliefs update slower when rigid
            salience_factor = 1.0 / (1.0 + rigidity * prop.salience)
            # hyperlogical contradiction penalty: subtract a small term
            contra_term = self.p.beta_contra * contra_force[name]

            new_b = b + salience_factor * (delta - 0.02 * contra_term)
            self.g.set_belief(name, new_b)

        pred_error = float(np.mean(pred_errs))
        contra = float(self.g.contradiction_density())

        # Update identity:
        # - If contradiction is high, identity rigidity tends to increase defensively in ordinary systems.
        # - Hyperlogy target: keep identity stable but not rigid.
        # "Volatility" is approximated by prediction error + contradiction.
        # Higher volatility tightens identity slightly; a stabilizer pulls it
        # toward 0.5 to avoid runaway ego-lock.
        volatility = pred_error + contra
        id_delta = self.p.id_lr * (0.15 * volatility - 0.10 * (self.identity - 0.5))
        self.identity = clamp(self.identity + id_delta, 0.0, 1.0)

        fe = self._free_energy_proxy(pred_error, contra)

        # time index is managed by the runner; t=-1 is a placeholder
        return Metrics(t=-1, pred_error=pred_error, contradiction_density=contra,
                       identity_rigidity=rigidity, free_energy_proxy=float(fe))


# ----------------------------
# Runner / Experiment
# ----------------------------

def run_simulation(
    T: int = 300,
    params: Optional[HyperlogyParams] = None,
    plot: bool = True,
) -> Tuple[List[Metrics], List[Dict[str, float]]]:
    """
    Run a simulation for T steps.
    Returns:
        metrics_list: list of Metrics
        belief_snapshots: list of belief dictionaries
    """
    if params is None:
        params = HyperlogyParams()
    random.seed(params.seed)
    np.random.seed(params.seed)

    # Example proposition set (extend freely): each of A, B, C is paired
    # with an explicit negation node.
    props = [
        Proposition("A", belief=0.5, salience=1.0),
        Proposition("notA", belief=0.5, salience=1.0),
        Proposition("B", belief=0.5, salience=0.8),
        Proposition("notB", belief=0.5, salience=0.8),
        Proposition("C", belief=0.5, salience=0.6),
        Proposition("notC", belief=0.5, salience=0.6),
    ]

    # Mutual exclusivity constraints (A vs notA etc.)
    contradiction_pairs = [("A", "notA"), ("B", "notB"), ("C", "notC")]

    g = BeliefGraph(props, contradiction_pairs)
    agent = HyperlogyAgent(g, params)
    env = Environment([p.name for p in props], flip_prob=params.env_flip_prob)

    metrics_list: List[Metrics] = []
    snaps: List[Dict[str, float]] = []

    for t in range(T):
        m = agent.step(env)
        m.t = t
        metrics_list.append(m)
        snaps.append(g.snapshot())

    if plot:
        try:
            import matplotlib.pyplot as plt

            ts = [m.t for m in metrics_list]
            series = [
                ("Prediction Error (mean abs)", "error", [m.pred_error for m in metrics_list]),
                ("Contradiction Density", "density", [m.contradiction_density for m in metrics_list]),
                ("Identity Rigidity", "rigidity", [m.identity_rigidity for m in metrics_list]),
                ("Free-Energy Proxy", "F", [m.free_energy_proxy for m in metrics_list]),
            ]
            # one figure per metric
            for title, ylabel, ys in series:
                plt.figure()
                plt.plot(ts, ys)
                plt.title(title)
                plt.xlabel("t")
                plt.ylabel(ylabel)
                plt.show()
        except Exception as e:
            print("Plotting failed (matplotlib missing or backend issue):", e)

    return metrics_list, snaps


def parameter_sweep(
    T: int = 250,
    betas: Optional[List[float]] = None,
    seeds: Optional[List[int]] = None,
) -> Dict[float, Dict[str, float]]:
    """
    Sweep contradiction penalty weights and summarize outcomes.
    Returns dict: beta -> summary stats
    """
    if betas is None:
        betas = [0.0, 0.5, 1.0, 2.0]
    if seeds is None:
        seeds = [1, 2, 3]

    results: Dict[float, Dict[str, float]] = {}
    for beta in betas:
        all_pe, all_cd, all_fe = [], [], []
        for seed in seeds:
            p = HyperlogyParams(beta_contra=beta, seed=seed)
            metrics, _ = run_simulation(T=T, params=p, plot=False)
            # discard burn-in (first half of the run)
            tail = metrics[int(0.5 * T):]
            all_pe.append(np.mean([m.pred_error for m in tail]))
            all_cd.append(np.mean([m.contradiction_density for m in tail]))
            all_fe.append(np.mean([m.free_energy_proxy for m in tail]))

        results[beta] = {
            "pred_error_mean": float(np.mean(all_pe)),
            "contra_density_mean": float(np.mean(all_cd)),
            "free_energy_mean": float(np.mean(all_fe)),
        }
    return results


if __name__ == "__main__":
    # Example run
    metrics, snaps = run_simulation(T=400, plot=True)

    # Example sweep
    sweep = parameter_sweep()
    print("Sweep results:")
    for beta, stats in sweep.items():
        print(beta, stats)

What to tweak for your “Menu of Maitreya” production model

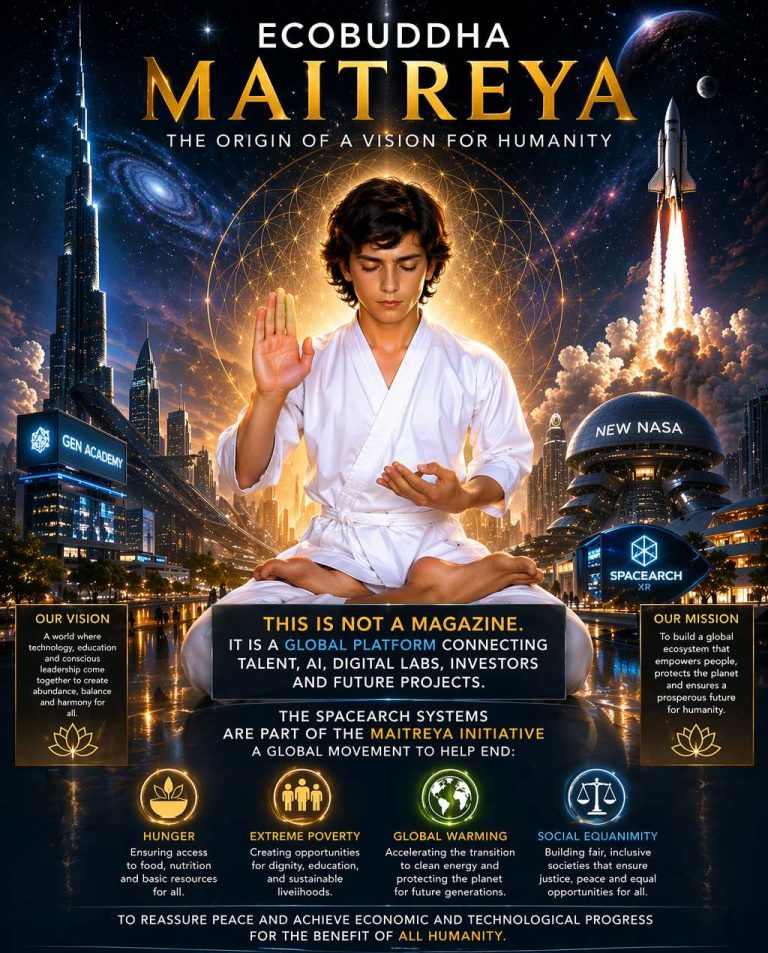

- Scale up propositions: replace A/notA etc. with a belief graph reflecting your conceptual taxonomy (e.g., dogma, evidence, compassion, identity narrative, harm-minimization).

- Replace env with scenario scripts: media exposure, debate inputs, trauma triggers, contemplative practice sessions.

- Upgrade identity to a vector (traits: rigidity, openness, compassion, threat-sensitivity).

- Add a “compassion regulator”: a penalty on harm-intent or hostility outputs, similar to contradiction penalty.
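As one possible starting point for the identity-vector upgrade, the sketch below applies the scalar identity rule from the model (tighten with volatility, pull back toward 0.5) to each trait separately. The `COUPLING` coefficients, the class name, and the choice to leave compassion uncoupled are all assumptions made for illustration; they are not part of the model above.

```python
from dataclasses import dataclass

# Hypothetical per-trait response to volatility (positive: trait rises with it).
COUPLING = {
    "rigidity": 0.15,
    "openness": -0.15,
    "compassion": 0.0,
    "threat_sensitivity": 0.15,
}


def clamp01(x: float) -> float:
    return max(0.0, min(1.0, x))


@dataclass
class IdentityVector:
    rigidity: float = 0.5
    openness: float = 0.5
    compassion: float = 0.5
    threat_sensitivity: float = 0.5

    def update(self, volatility: float, lr: float = 0.03, pull: float = 0.10) -> None:
        """Move each trait with volatility, with a restoring pull toward 0.5."""
        for trait, gain in COUPLING.items():
            value = getattr(self, trait)
            delta = lr * (gain * volatility - pull * (value - 0.5))
            setattr(self, trait, clamp01(value + delta))


identity = IdentityVector()
identity.update(volatility=1.0)
print(identity)
```

In the full model, the `rigidity` component would take the place of the scalar identity inside the belief-update step, while the other traits could modulate learning rate or observation weighting.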