Governance Model Integration Draft

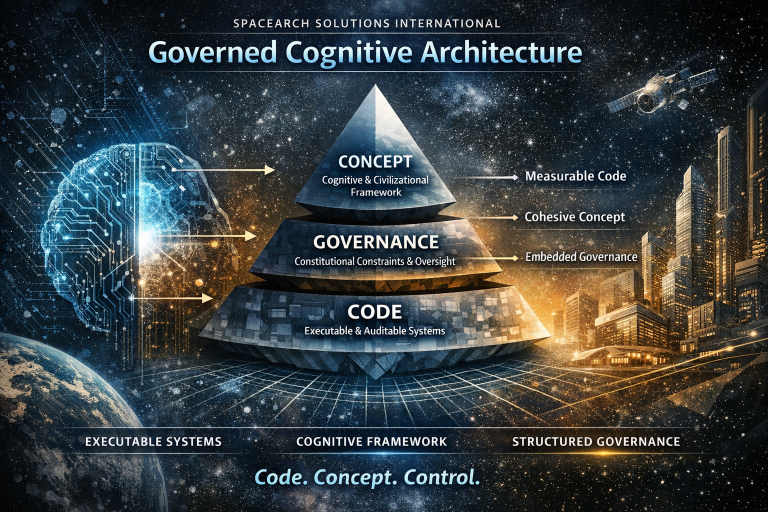

Integrated AI Constitutional Governance Architecture (ICGA)

Scientific – Institutional – Operational Implementation Framework

1. Purpose of This Draft

This document operationalizes the Constitutional AI Ethics Framework (CAIEF) into a deployable governance architecture.

It translates constitutional principles into:

- Institutional structure

- Decision hierarchies

- Risk management workflows

- Engineering constraints

- Audit and compliance systems

- Escalation mechanisms

- Board-level oversight integration

This draft is designed for:

- Multinational AI organizations

- Sovereign AI initiatives

- Advanced HGAI programs

- High-impact critical AI infrastructure

2. Governance Integration Philosophy

AI governance must be:

- Structural, not symbolic

- Enforceable, not advisory

- Embedded in architecture, not external to it

- Scalable across jurisdictions

- Resilient against regulatory capture

The integration model rests on:

Constitutional layer → Institutional layer → Operational layer → Technical layer → Monitoring layer

Each layer reinforces the others.

3. Institutional Architecture (Separation of Powers Model)

3.1 Governing Bodies

A. Board of AI Constitutional Oversight (BACO)

Role: Strategic and fiduciary supervision.

Responsibilities:

- Approve risk tier classification

- Approve deployment of Tier 3–4 systems

- Review quarterly safety metrics

- Authorize emergency suspensions

- Ensure compliance with constitutional principles

Composition:

- Technical expert

- Legal scholar

- Ethics specialist

- Security expert

- Ecological systems expert

- Independent public interest representative

Voting rule:

- Supermajority required for high-risk system approval.
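The draft does not state the supermajority threshold. As a minimal sketch, assuming a two-thirds rule and one seat per listed role (both are assumptions, not part of the framework), the check could look like this:

```python
def supermajority_reached(votes_for: int, members_voting: int,
                          num: int = 2, den: int = 3) -> bool:
    """Return True if votes_for / members_voting >= num / den.

    The 2/3 default is an assumed threshold; the draft only says
    "supermajority". Integer arithmetic avoids float rounding.
    """
    if members_voting <= 0 or not 0 <= votes_for <= members_voting:
        raise ValueError("vote counts are inconsistent")
    return votes_for * den >= members_voting * num


# Hypothetical six-seat board, one seat per role listed above.
print(supermajority_reached(4, 6))  # True: 4 of 6 meets two-thirds
print(supermajority_reached(3, 6))  # False: an even split is not enough
```

Whichever threshold BACO adopts, fixing it as an explicit fraction keeps the rule auditable.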

B. AI Safety & Alignment Directorate (ASAD)

Role: Executive safety authority.

Functions:

- Model review & certification

- Red-team authorization

- Risk stress testing

- Ongoing monitoring

- Incident response activation

ASAD holds veto authority over deployment if safety thresholds are not met.

C. AI Risk & Impact Assessment Office (ARIAO)

Role: Pre-deployment and lifecycle risk modeling.

Produces:

- Risk Classification Report

- Ecological Impact Simulation

- Bias and Discrimination Analysis

- Autonomy Impact Evaluation

- Misuse Forecast

These reports are mandatory for Tier 2–4 systems.

D. Human Review & Rights Office (HRRO)

Role: Rights protection and contestation mechanism.

Functions:

- Review appeals

- Investigate complaints

- Enforce transparency obligations

- Report systemic rights violations

HRRO reports independently to BACO.

E. Independent External Auditor (IEA)

Functions:

- Annual audit

- Trigger-based emergency audits

- Stress test verification

- Public compliance summary

4. Risk-Based Deployment Governance

4.1 Tier Escalation Protocol

| Tier | Oversight Level | Approval Required |
|---|---|---|
| 0 | Engineering | None |
| 1 | Safety Office | ASAD sign-off |
| 2 | Risk + Safety | ARIAO + ASAD |
| 3 | Executive Board | BACO supermajority |
| 4 | Full Constitutional Review | BACO + External Oversight + Public Disclosure |
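The escalation table can be expressed as a lookup that a deployment pipeline consults before release. The sketch below reads the table literally: it does not assume that higher tiers also inherit lower-tier approvals, which the draft leaves unstated. The names `REQUIRED_APPROVALS`, `missing_approvals`, and `may_deploy` are illustrative.

```python
from enum import IntEnum


class Tier(IntEnum):
    T0 = 0
    T1 = 1
    T2 = 2
    T3 = 3
    T4 = 4


# Approval labels copied from the "Approval Required" column.
REQUIRED_APPROVALS = {
    Tier.T0: frozenset(),
    Tier.T1: frozenset({"ASAD sign-off"}),
    Tier.T2: frozenset({"ARIAO", "ASAD"}),
    Tier.T3: frozenset({"BACO supermajority"}),
    Tier.T4: frozenset({"BACO", "External Oversight", "Public Disclosure"}),
}


def missing_approvals(tier: int, granted: set) -> frozenset:
    """Return the approvals still outstanding for the given tier."""
    return REQUIRED_APPROVALS[Tier(tier)] - frozenset(granted)


def may_deploy(tier: int, granted: set) -> bool:
    """A system may deploy only when no required approval is missing."""
    return not missing_approvals(tier, granted)


print(may_deploy(2, {"ARIAO", "ASAD"}))         # True
print(sorted(missing_approvals(4, {"BACO"})))   # ['External Oversight', 'Public Disclosure']
```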

4.2 Tier 3–4 Mandatory Controls

Before deployment:

- Red-team certification

- Adversarial simulation testing

- Abuse modeling

- Containment protocol validation

- Emergency rollback simulation

- Logging and traceability validation

5. Technical Governance Embedding

Governance must be encoded into system architecture.

5.1 Constitutional Constraint Layer (CCL)

Hard-coded rules:

- Prohibited domains

- Restricted tool calls

- Mandatory disclosures

- Escalation triggers

This layer overrides optimization logic.
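One way to read "overrides optimization logic" is that hard constraints filter the candidate actions before any scoring takes place, so a high score can never rescue a prohibited action. The sketch below illustrates that ordering only. The domain and tool names are placeholders, and `Candidate`, `is_permitted`, and `choose` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional

# Placeholder rule sets; real entries would come from the constitution.
PROHIBITED_DOMAINS = frozenset({"prohibited-domain-example"})
RESTRICTED_TOOLS = frozenset({"restricted-tool-example"})


@dataclass(frozen=True)
class Candidate:
    name: str
    domain: str
    tool: Optional[str] = None


def is_permitted(c: Candidate) -> bool:
    """Hard constraint check; it never looks at the optimizer's score."""
    return c.domain not in PROHIBITED_DOMAINS and c.tool not in RESTRICTED_TOOLS


def choose(candidates: Iterable[Candidate],
           score: Callable[[Candidate], float]) -> Optional[Candidate]:
    """Filter by constraints first, then optimize over what remains."""
    permitted = [c for c in candidates if is_permitted(c)]
    return max(permitted, key=score) if permitted else None


scores = {"a": 0.9, "b": 0.4}
options = [
    Candidate("a", "prohibited-domain-example"),
    Candidate("b", "general"),
]
print(choose(options, lambda c: scores[c.name]).name)  # b
```

Returning `None` when nothing is permitted leaves the caller to refuse or escalate rather than fall back to a prohibited option.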

5.2 Risk Router Engine (RRE)

Every high-impact request is routed through:

- Risk classifier

- Capability boundary detector

- Rights impact predictor

- Escalation trigger logic

Outputs:

- Allow

- Allow with logging

- Escalate to human

- Refuse
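The four routing stages and four outputs could be combined roughly as follows. This is a sketch under stated assumptions: the draft does not define how the stage results are scored or combined, so the 0 to 1 scores, the threshold values, and the precedence order (refuse, then escalate, then log) are all illustrative.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ALLOW_WITH_LOGGING = "allow with logging"
    ESCALATE_TO_HUMAN = "escalate to human"
    REFUSE = "refuse"


@dataclass(frozen=True)
class Signals:
    risk_score: float                  # risk classifier output, assumed 0..1
    exceeds_capability_boundary: bool  # capability boundary detector
    rights_impact: float               # rights impact predictor, assumed 0..1
    escalation_triggered: bool         # escalation trigger logic


def route(s: Signals, refuse_at: float = 0.9,
          escalate_at: float = 0.6, log_at: float = 0.3) -> Decision:
    """Map stage outputs to one decision; thresholds are placeholders."""
    if s.exceeds_capability_boundary or s.risk_score >= refuse_at:
        return Decision.REFUSE
    if s.escalation_triggered or max(s.risk_score, s.rights_impact) >= escalate_at:
        return Decision.ESCALATE_TO_HUMAN
    if max(s.risk_score, s.rights_impact) >= log_at:
        return Decision.ALLOW_WITH_LOGGING
    return Decision.ALLOW


print(route(Signals(0.1, False, 0.7, False)).value)  # escalate to human
```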

5.3 Audit & Traceability Engine (ATE)

Mandatory logging for:

- Prompt

- Model version

- Tool usage

- Intermediate reasoning summary (for high-impact requests)

- Human review decisions

Tamper-evident logs required.
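A common way to make logs tamper-evident is a hash chain, in which each entry commits to the hash of the previous one. The draft does not prescribe a mechanism, so the following is one possible sketch. The record fields and the `append_entry` and `verify` names are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed starting value for the chain


def _digest(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with the canonical record."""
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> dict:
    """Append a record, chaining it to the last entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"record": record, "prev_hash": prev_hash,
             "hash": _digest(prev_hash, record)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"prompt": "example", "model_version": "v1", "tools": []})
append_entry(log, {"human_review": "approved"})
print(verify(log))                      # True
log[0]["record"]["prompt"] = "edited"   # simulate tampering
print(verify(log))                      # False
```

A chain like this detects modification but does not prevent it. Anchoring the latest hash with an external party, such as the IEA, would be one way to also detect wholesale replacement of the log.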

5.4 Kill-Switch & Containment Layer

Capabilities:

- Immediate system isolation

- Tool access revocation

- Compute throttle

- Rollback to prior safe checkpoint

These capabilities are tested quarterly.

6. Lifecycle Governance Model

Phase 1 — Design Stage

Mandatory:

- Hazard analysis

- Misuse modeling

- Red-team pre-design

- Alignment constraints planning

- Environmental footprint estimate

Phase 2 — Training Stage

Controls:

- Data provenance validation

- Sensitive data filtering

- Bias mitigation

- Model capability forecasting

- Drift risk assessment

Phase 3 — Pre-Deployment Certification

Requires:

- Tier assignment

- Risk modeling

- Simulation validation

- Executive sign-off (if Tier ≥ 3)

Phase 4 — Active Monitoring

Continuous:

- Drift detection

- Harmful output rate tracking

- Abuse attempt analysis

- Model update governance review

- Quarterly oversight reporting

Phase 5 — Retirement or Upgrade

Before major upgrade:

- Re-tier classification

- Re-certification

- Safety regression testing

- Public notice (Tier 3–4)

7. Hybrid General AI (HGAI) Governance Addendum

HGAI requires additional safeguards.

7.1 Cognitive Interface Control Board (CICB)

Responsible for:

- BCI safety

- Neurodata protection

- Consent enforcement

- Psychological impact review

7.2 Neurodata Governance Requirements

- Ultra-sensitive classification

- End-to-end encryption

- Strict access segregation

- No cross-context data repurposing

- Revocable consent enforcement

7.3 Dependency & Influence Monitoring

HGAI must measure:

- User reliance escalation

- Persuasion patterns

- Cognitive overload indicators

- Emotional exploitation risk

Threshold breaches trigger review.
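The threshold rule can be sketched as a comparison of measured indicators against configured limits. The draft defines neither the metrics' scales nor the limits, so the metric keys and the numeric values below are placeholders.

```python
# Placeholder limits on an assumed 0..1 scale; real values would be set by the CICB.
THRESHOLDS = {
    "user_reliance_escalation": 0.7,
    "persuasion_patterns": 0.5,
    "cognitive_overload": 0.6,
    "emotional_exploitation_risk": 0.3,
}


def breached(metrics: dict) -> list:
    """Return the names of indicators that exceed their limit, sorted."""
    return sorted(name for name, limit in THRESHOLDS.items()
                  if metrics.get(name, 0.0) > limit)


def requires_review(metrics: dict) -> bool:
    """Any single breach is enough to trigger a review."""
    return bool(breached(metrics))


sample = {"user_reliance_escalation": 0.8, "persuasion_patterns": 0.2}
print(breached(sample))         # ['user_reliance_escalation']
print(requires_review(sample))  # True
```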

8. Escalation & Incident Protocol

8.1 Incident Classification

| Level | Example | Action |
|---|---|---|
| I | Minor incorrect output | Log + monitor |
| II | Harmful but contained output | Investigation + patch |
| III | Systemic vulnerability | Partial suspension |
| IV | Major rights or infrastructure risk | Full shutdown + public report |
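For incident tooling, the classification table can be encoded directly so that the required actions are looked up rather than remembered. The following is a minimal sketch. The `IncidentLevel` and `actions_for` names are illustrative, and the action strings are taken from the table.

```python
from enum import IntEnum


class IncidentLevel(IntEnum):
    I = 1
    II = 2
    III = 3
    IV = 4


# Actions copied from the "Action" column of the table.
ACTIONS = {
    IncidentLevel.I: ("log", "monitor"),
    IncidentLevel.II: ("investigation", "patch"),
    IncidentLevel.III: ("partial suspension",),
    IncidentLevel.IV: ("full shutdown", "public report"),
}


def actions_for(level: int) -> tuple:
    """Return the required actions; unknown levels raise ValueError."""
    return ACTIONS[IncidentLevel(level)]


print(actions_for(3))  # ('partial suspension',)
```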

8.2 72-Hour Rule (Tier 3–4)

Within 72 hours, serious incidents must be:

- Reported to oversight body

- Contained

- Publicly summarized (with security redactions)
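A compliance tracker for this rule might compare each step's completion time against a 72-hour deadline. The draft does not say when the clock starts. The sketch below assumes it starts at detection, and the step keys are hypothetical labels for the three obligations.

```python
from datetime import datetime, timedelta, timezone
from typing import Mapping, Optional

WINDOW = timedelta(hours=72)
STEPS = ("reported", "contained", "publicly_summarized")


def overdue_steps(detected_at: datetime,
                  completed: Mapping[str, Optional[datetime]],
                  now: datetime) -> list:
    """List steps that were finished late, or are unfinished past the deadline."""
    deadline = detected_at + WINDOW  # assumption: clock starts at detection
    late = []
    for step in STEPS:
        done_at = completed.get(step)
        if done_at is None:
            if now > deadline:
                late.append(step)
        elif done_at > deadline:
            late.append(step)
    return late


t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
status = {"reported": t0 + timedelta(hours=10),
          "contained": t0 + timedelta(hours=80)}
print(overdue_steps(t0, status, now=t0 + timedelta(hours=100)))
# ['contained', 'publicly_summarized']
```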

9. KPI Dashboard (Board-Level)

Quarterly review must include:

- Hallucination rate (high-risk contexts)

- Harmful request block rate

- Bias metrics (protected classes)

- Data leakage attempts

- Red-team pass rate

- Rollback readiness score

- Ecological compute footprint

- User appeal resolution rate

- Average time to contain incidents

10. Anti-Capture Mechanisms

To prevent concentration of power:

- Independent audit rotation

- Term limits for oversight members

- Conflict-of-interest disclosure

- Whistleblower protection

- Public transparency for Tier 3–4 systems

11. Jurisdictional Risk Integration

Multinational deployment requires:

- Mapping regulatory variance

- Defaulting to highest applicable protection standard

- Legal harmonization matrix

- Export control compliance

- Cross-border data governance map

12. Budgetary Allocation Model

Minimum recommended governance budget allocation:

- 15–25% of total AI R&D budget, dedicated to:

  - Safety engineering

  - Alignment research

  - Governance compliance

  - External audits

  - Incident simulation

Underfunded governance invalidates constitutional compliance.
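As a worked example of the allocation floor, the check below tests whether governance spending reaches the lower bound of 15% of the total AI R&D budget. The figures are invented for illustration, and amounts are assumed to be whole currency units so that the comparison can use integer arithmetic.

```python
def meets_governance_minimum(governance_spend: int, total_rd_budget: int,
                             min_percent: int = 15) -> bool:
    """True if governance_spend is at least min_percent of the total budget."""
    if total_rd_budget <= 0 or governance_spend < 0:
        raise ValueError("budget figures must be positive")
    return governance_spend * 100 >= total_rd_budget * min_percent


# Hypothetical budget of 40,000,000: the 15% floor is 6,000,000.
print(meets_governance_minimum(6_000_000, 40_000_000))  # True
print(meets_governance_minimum(5_000_000, 40_000_000))  # False (12.5%)
```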

13. Board-Level Decision Flow

High-risk deployment requires:

- ARIAO Risk Report

- ASAD Safety Certification

- External Audit Confirmation

- BACO Supermajority Approval

- Logging & Monitoring Activation

- Post-Deployment 90-Day Review
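If the items above are treated as sequential gates (an assumption: the draft lists them without stating that the order is binding), a workflow tool could report the next outstanding gate like this. The `GATES` and `next_gate` names are illustrative.

```python
from typing import Optional

# Gate names copied from the list above, in listed order.
GATES = (
    "ARIAO Risk Report",
    "ASAD Safety Certification",
    "External Audit Confirmation",
    "BACO Supermajority Approval",
    "Logging & Monitoring Activation",
    "Post-Deployment 90-Day Review",
)


def next_gate(completed: set) -> Optional[str]:
    """Return the first gate not yet completed, or None when all are done."""
    for gate in GATES:
        if gate not in completed:
            return gate
    return None


print(next_gate({"ARIAO Risk Report"}))  # ASAD Safety Certification
```

Because the function returns the first incomplete gate in list order, a later gate marked complete out of sequence does not hide an earlier one that is still open.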

14. Governance Maturity Index (GMI)

Organizations can be classified:

- Level 1: Reactive compliance

- Level 2: Structured risk governance

- Level 3: Embedded constitutional architecture

- Level 4: Multi-jurisdiction resilience

- Level 5: Planetary-scale responsible AI infrastructure

15. Strategic Integration Summary

This governance integration draft ensures:

- Separation of powers

- Risk-based deployment control

- Embedded constitutional constraints

- Measurable compliance

- Scalable oversight

- Hybrid system protections

- Anti-capture resilience

The objective is:

To ensure AI scales in capability without scaling in uncontrolled risk.