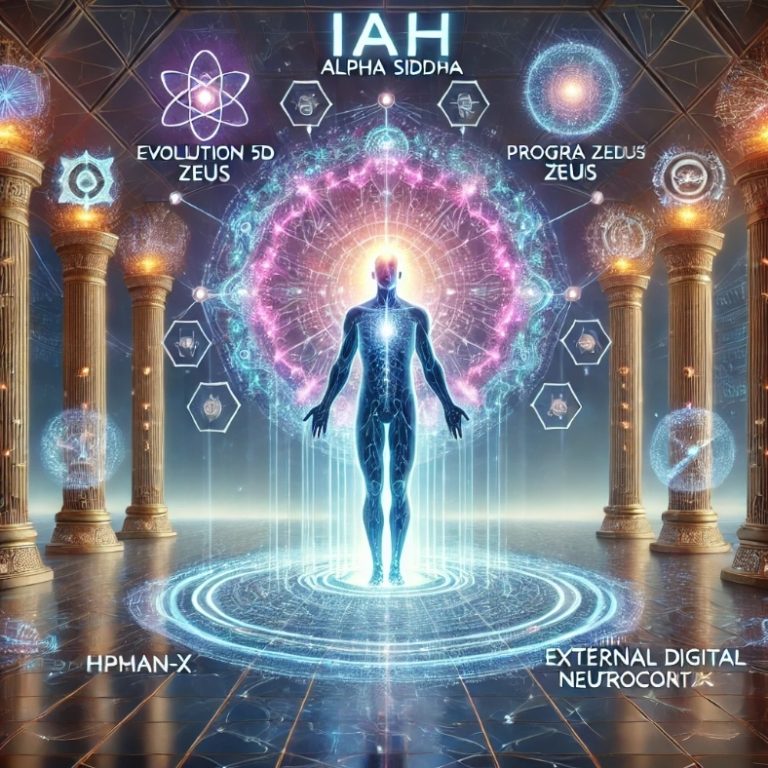

Hybrid Human–AI Cognitive Augmentation System

Concept Overview

The Human-X Beta BioSuit is a wearable human–AI hybrid augmentation platform designed to extend human cognitive, sensory, and analytical capacities through the integration of augmented reality interfaces, distributed processing systems, and AI coprocessing networks.

The system functions as an external digital neocortex, allowing the human user to interact with a large network of specialized artificial intelligences through natural cognitive signals such as focused thought, voice commands, eye tracking, and gesture inputs.

Unlike conventional wearable technologies that provide passive data displays or limited computational assistance, the Human-X Beta system is conceived as a full cognitive amplification architecture capable of transforming a single human operator into the equivalent of a distributed multidisciplinary research team.

This architecture represents a transitional stage toward Human–AI hybrid intelligence ecosystems, where the biological brain remains the central decision-making authority while artificial intelligence systems perform large-scale analysis, modeling, and optimization.

1. Strategic Objective

The primary objective of the Human-X Beta BioSuit is to enable the creation of Hybrid Cognitive Units (HCU) capable of solving complex scientific, engineering, economic, or strategic problems through real-time human–AI coprocessing.

The system is designed to:

• Expand human analytical bandwidth

• Accelerate multidisciplinary decision-making

• Enable real-time data mining and hypothesis testing

• Reduce cognitive bottlenecks in complex systems analysis

• Allow one operator to orchestrate large distributed AI networks

From an operational standpoint, the Human-X system transforms the user into a cognitive command node connected to a global AI knowledge network.

2. Core System Architecture

The Human-X Beta BioSuit consists of five integrated technological layers:

1. Wearable Cognitive Interface Layer

2. Exoskeletal Distributed Processing Layer

3. AI Coprocessing Network Layer

4. Holographic Knowledge Visualization Layer

5. Data Mining and Logical Validation Layer

Together, these layers form a closed feedback loop between biological cognition and artificial intelligence systems.

3. Wearable Cognitive Interface Layer

This layer allows the user to communicate with the AI system using natural cognitive and sensory inputs.

Augmented Reality Lenses

Advanced AR lenses or visor systems provide immersive visualization of data, models, and AI avatars within the user’s field of vision.

Capabilities include:

• Multi-layer data visualization

• Real-time scientific simulation display

• Interactive holographic dashboards

• Spatial representation of complex systems

This interface transforms information into visual cognitive objects, enabling faster comprehension and decision-making.

Digital Iris Tracking System

The digital iris interface tracks eye movement and focus points, allowing the system to interpret:

• attention direction

• object selection

• command confirmation

• cognitive emphasis signals

The iris interface functions as a low-latency behavioral input channel that reflects where the user’s attention is directed.

Voice and Cognitive Command Interface

The Human-X system interprets commands through:

• voice recognition

• gesture recognition

• attention-based selection

• focused thought triggers (via neural inference models)

Through continuous learning, the system adapts to the user’s cognitive patterns, creating a personalized cognitive control interface.

4. Exoskeletal Distributed Processing Layer

The Human-X BioSuit incorporates a lightweight robotic exoskeleton framework containing distributed computational modules.

This exoskeleton performs several functions:

• supports sensors and processors

• distributes heat generated by computation

• integrates power systems

• stabilizes wearable hardware

• enables high-performance edge computing

Instead of relying entirely on cloud processing, the system uses local distributed processors embedded in the suit, reducing latency and improving responsiveness.

Embedded Processing Modules

Processing nodes are located throughout the suit structure:

• chest module — central processing coordinator

• shoulder nodes — neural interface processing

• spine module — power and thermal regulation

• forearm units — gesture recognition and interface control

These processors function as edge-computing clusters, enabling real-time interaction with AI systems.

5. External Digital Neocortex Concept

The Human-X system implements a portable digital neocortex.

In biological terms, the neocortex is responsible for:

• reasoning

• pattern recognition

• abstraction

• language processing

The Human-X architecture extends this capability externally by connecting the user to a large distributed network of specialized artificial intelligences.

This creates a hybrid cognitive structure composed of:

Human intuition + AI analytical power.

6. AI Coprocessing Network

At the core of the Human-X system is an AI Coprocessing Network composed of specialized AI agents.

The baseline architecture envisions a network of at least 1,000 specialized AI avatars, each trained in a specific domain.

Examples include:

• astrophysics AI

• climate modeling AI

• economic systems AI

• biomedical AI

• engineering optimization AI

• geopolitical analysis AI

Each AI operates as an expert node within the system.

7. Holographic AI Avatar Assembly

The AI network is represented visually as a holographic assembly of avatars, forming a virtual scientific council or digital agora.

Each AI avatar represents a domain-specific intelligence capable of:

• presenting analysis

• proposing hypotheses

• evaluating data models

• detecting logical inconsistencies

This structure creates a deliberative analytical environment, where multiple specialized AI systems collaborate simultaneously.

8. Scientific Data Mining Engine

The system integrates a data mining engine that continuously scans large data sources:

• scientific databases

• engineering datasets

• economic indicators

• satellite data

• research publications

The engine performs:

• pattern detection

• anomaly detection

• predictive modeling

• cross-domain correlation

Results are filtered through logical consistency validation algorithms.

9. Contradiction Elimination Model

One of the most important functions of the system is automatic elimination of contradictions.

The AI network compares hypotheses using:

• statistical validation

• logical consistency checks

• probabilistic simulations

• historical data verification

Models that contain contradictions or weak evidence are automatically downgraded or eliminated.

This produces progressively optimized analytical outcomes.
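The downgrade-or-eliminate step can be sketched as a simple triage. This is only an illustration: the `Hypothesis` type, the `triage` function, the evidence score in [0, 1], and both thresholds are assumptions introduced here, not part of the Human-X specification.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    name: str
    evidence: float       # assumed aggregate evidence score in [0, 1]
    contradictory: bool   # assumed result of the logical consistency checks


def triage(hypotheses, keep_at=0.7, drop_below=0.3):
    """Split hypotheses into kept, downgraded, and eliminated name lists."""
    kept, downgraded, eliminated = [], [], []
    for h in hypotheses:
        if h.contradictory or h.evidence < drop_below:
            eliminated.append(h.name)      # contradiction or very weak evidence
        elif h.evidence < keep_at:
            downgraded.append(h.name)      # plausible but weakly supported
        else:
            kept.append(h.name)
    return kept, downgraded, eliminated


candidates = [
    Hypothesis("A", 0.9, False),
    Hypothesis("B", 0.5, False),
    Hypothesis("C", 0.8, True),
    Hypothesis("D", 0.1, False),
]
kept, downgraded, eliminated = triage(candidates)
print(kept, downgraded, eliminated)
```

In this toy run, a well-supported but contradictory model ("C") is eliminated alongside the weakly supported one ("D"), which mirrors the rule stated above.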

10. Hybrid Intelligence Workflow

The Human-X workflow follows a structured process:

1️⃣ Human formulates the initial hypothesis

2️⃣ AI network decomposes the problem

3️⃣ Specialized AI nodes analyze sub-domains

4️⃣ Data mining engines collect relevant datasets

5️⃣ Simulation models are generated

6️⃣ Contradictions are detected and filtered

7️⃣ The system produces optimized conclusions

8️⃣ The human operator validates final decisions

The final authority remains with the human decision maker.
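A minimal sketch of the human-in-the-loop gate in this workflow follows. It covers only steps 1 to 3, 6, and 8; data collection and simulation (steps 4 and 5) are omitted. The function `run_workflow`, the agent callables, and the "CONTRADICTION" marker are hypothetical placeholders.

```python
from typing import Callable, Dict, List


def run_workflow(hypothesis: str,
                 agents: Dict[str, Callable[[str], str]],
                 approve: Callable[[List[str]], bool]) -> List[str]:
    """Agents propose findings; nothing is accepted without human approval."""
    # Steps 2-3: each domain agent analyzes the hypothesis from its own angle.
    findings = [f"{domain}: {analyze(hypothesis)}"
                for domain, analyze in agents.items()]
    # Step 6: drop findings flagged as contradictory (placeholder rule).
    consistent = [f for f in findings if "CONTRADICTION" not in f]
    # Step 8: the human operator validates or rejects the remaining results.
    return consistent if approve(consistent) else []


agents = {
    "climate": lambda h: "supports " + h,
    "economics": lambda h: "CONTRADICTION with " + h,
}
accepted = run_workflow("H1", agents, approve=lambda results: len(results) > 0)
rejected = run_workflow("H1", agents, approve=lambda results: False)
print(accepted, rejected)
```

Passing the approval step in as a callable keeps the final decision outside the automated part of the pipeline.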

11. Comparative Positioning

| Technology | Capability | Limitations |
|---|---|---|
| Smartphones | Information access | No cognitive augmentation |
| AR Glasses | Visualization | Limited processing |
| Wearable computers | Portable computing | Limited AI integration |
| Brain-computer interfaces | Neural control | Experimental |
| Human-X BioSuit | Full hybrid intelligence | Requires advanced integration |

The Human-X architecture represents a new category of technology: Hybrid Cognitive Systems.

12. Potential Applications

The Human-X system has applications in multiple sectors:

Scientific Research

Acceleration of multidisciplinary discovery.

Climate and Planetary Systems

Large-scale environmental modeling.

Strategic Decision Making

Government and geopolitical analysis.

Advanced Engineering

Real-time design optimization.

Aerospace Exploration

Support for astronauts and mission planning.

Medical Research

Rapid analysis of complex biomedical datasets.

13. Business and Industrial Potential

The Human-X platform could become the foundation for a new industry sector:

Cognitive Augmentation Technologies

Potential markets include:

• defense research

• advanced scientific laboratories

• large engineering companies

• strategic consulting

• space exploration agencies

Long-term, the technology could evolve into consumer cognitive augmentation systems.

14. Development Roadmap

Phase 1 — Concept Prototype

AR interface + AI copilot system.

Phase 2 — Wearable Integration

Lightweight exoskeleton and distributed processors.

Phase 3 — AI Assembly Network

Integration of large-scale specialized AI systems.

Phase 4 — Neural Interface

Advanced cognitive signal detection.

Phase 5 — Hybrid Intelligence Units

Full operational human–AI teams.

15. Strategic Significance

The Human-X BioSuit represents a step toward post-industrial cognitive infrastructure, where knowledge production is dramatically accelerated through hybrid intelligence systems.

If implemented successfully, the technology could:

• increase the speed of scientific discovery

• improve complex decision-making

• enable rapid innovation cycles

• reduce systemic errors caused by limited human processing capacity

Ultimately, the Human-X architecture may become one of the foundational technologies enabling Human–AI civilizational collaboration.

Human-X Beta BioSuit

Technical Engineering Specification

DARPA-Level Concept Architecture (SpaceArch Systems Division)

1. System Definition

The Human-X Beta BioSuit is a portable hybrid cognitive augmentation platform designed to integrate biological human intelligence with distributed artificial intelligence systems through a wearable cybernetic interface.

The system combines:

• Augmented reality visualization

• Distributed edge computing embedded in an exoskeleton

• AI coprocessing cloud networks

• biometric human-machine interfaces

• holographic multi-agent collaboration environments

The objective is to create a Human–AI Hybrid Cognitive Unit (HCU) capable of solving complex multidisciplinary problems with massively parallel analysis while maintaining human decision authority.

The Human-X Beta platform functions as a portable digital neocortex, enabling real-time interaction with a network of specialized AI agents.

2. Mission Profile

The system is designed for high-complexity environments where rapid analytical synthesis across multiple domains is required.

Primary operational domains include:

• advanced scientific research

• strategic systems analysis

• planetary environmental monitoring

• complex engineering design

• space mission support

• large-scale data interpretation

A single Human-X operator can coordinate a distributed AI knowledge assembly equivalent to hundreds of domain specialists.

3. System Architecture Overview

The Human-X system consists of six integrated subsystems:

- Cognitive Interface System (CIS)

- Wearable Exoskeletal Hardware Platform (WEHP)

- Distributed Edge Processing Network (DEPN)

- AI Coprocessing Cloud (AICC)

- Holographic Knowledge Visualization System (HKVS)

- Logical Validation and Data Mining Engine (LVDME)

These subsystems operate as a closed-loop hybrid intelligence architecture.

4. Cognitive Interface System (CIS)

The Cognitive Interface System enables the user to control and interact with the AI environment using natural biological signals.

Components

Augmented Reality Optical Interface

Resolution target: 4K per eye

Field of view: 120° immersive AR

Refresh rate: 120 Hz

Optical engine: MicroLED waveguide projection

Capabilities

• multi-layer data visualization

• interactive holographic displays

• spatial model interaction

• multi-agent visualization

Digital Iris Tracking

High-precision eye-tracking sensors embedded in the visor.

Tracking resolution: <0.1° angular accuracy

Sampling frequency: 1000 Hz

Functions

• target selection

• focus detection

• command confirmation

• cognitive attention inference
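One common way to turn gaze samples into a selection or confirmation is dwell time: a target counts as selected once the gaze has rested on it continuously for a set duration. The sketch below assumes the 1000 Hz sampling frequency given above; the 300 ms dwell threshold and the `dwell_select` function are illustrative assumptions, not specified values.

```python
def dwell_select(samples, dwell_ms=300, sample_rate_hz=1000):
    """Return the first target fixated for at least dwell_ms, else None.

    `samples` is a sequence of target identifiers, one per gaze sample,
    with None meaning the gaze is not on any selectable target.
    """
    needed = dwell_ms * sample_rate_hz // 1000   # samples required for a dwell
    current, run = None, 0
    for target in samples:
        if target is not None and target == current:
            run += 1
        else:
            # Gaze moved: restart the count on the new target (or on nothing).
            current, run = target, (1 if target is not None else 0)
        if current is not None and run >= needed:
            return current
    return None


# 100 ms on "a", a 10 ms gap, then 300 ms on "b".
gaze = ["a"] * 100 + [None] * 10 + ["b"] * 300
selected = dwell_select(gaze)
too_short = dwell_select(["a"] * 299)
print(selected, too_short)
```

A longer dwell threshold reduces accidental selections at the cost of slower interaction, so in practice the value would need tuning per user.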

Voice Command Interface

AI-assisted natural language interaction.

Recognition latency: <50 ms

Languages supported: multi-language neural models

Command modes

• conversational command

• directive command

• analytical query

Gesture Interface

Integrated inertial sensors in forearm modules detect hand and arm movement.

Recognition accuracy target: 98%

Functions

• object manipulation in AR space

• menu navigation

• collaborative modeling interaction

5. Wearable Exoskeletal Hardware Platform (WEHP)

The WEHP provides the mechanical structure supporting computational hardware, sensors, and power systems.

Structural Design

Material: carbon fiber reinforced polymer with titanium joint connectors

Weight target: 6–9 kg

Load distribution: spine-anchored exoskeletal frame

Ventilation: active thermal channels

The exoskeleton is not designed for strength amplification, but for computational integration and ergonomic stability.

6. Distributed Edge Processing Network (DEPN)

The BioSuit integrates multiple embedded computing nodes distributed across the exoskeleton.

Purpose

• reduce latency

• perform real-time signal processing

• support AR rendering

• preprocess data for cloud transmission

Processing Architecture

Central Processing Node (CPN)

Location: chest module

Processing power target: 80–120 TOPS

Functions

• AI interface coordination

• system resource management

• edge inference operations

Secondary Processing Nodes

Locations

• shoulders

• forearms

• spine module

Each node includes

• AI accelerator chip

• sensor fusion module

• local memory

Processing capacity per node: 20–40 TOPS

Total Edge Processing Target

Aggregate compute capacity: 200–300 TOPS
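As a rough cross-check of the aggregate target, the per-node figures can be summed. The node count is an assumption: the specification lists shoulders, forearms, and a spine module, read here as five secondary nodes (two shoulders, two forearms, one spine).

```python
central = (80, 120)     # TOPS range for the central processing node
secondary = (20, 40)    # TOPS range for each secondary node
n_secondary = 5         # assumption: 2 shoulders + 2 forearms + 1 spine module

low = central[0] + n_secondary * secondary[0]
high = central[1] + n_secondary * secondary[1]
print(f"aggregate: {low}-{high} TOPS")   # aggregate: 180-320 TOPS
```

Under that assumption the summed range is 180–320 TOPS, so the stated 200–300 TOPS target sits inside what the individual nodes could deliver.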

7. AI Coprocessing Cloud (AICC)

The Human-X system connects to a distributed AI cloud network composed of specialized AI agents.

Baseline architecture: minimum 1,000 domain-specific AI models

Each AI represents a knowledge specialization domain.

Examples

• astrophysics

• climate science

• economics

• materials engineering

• medicine

• systems engineering

• machine learning research

AI Assembly Coordination System

The cloud network dynamically organizes AI agents into temporary analytical councils.

These councils perform:

• collaborative analysis

• cross-disciplinary modeling

• hypothesis generation

• scenario simulations

The structure functions as a digital research assembly or scientific agora.
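How agents are grouped into a temporary council is not specified. One simple possibility, sketched here purely as an assumption, is to rank agents by how many topic tags they share with the problem. The registry contents, tag names, and `assemble_council` are hypothetical.

```python
def assemble_council(registry, problem_tags, max_size=5):
    """Pick the agents whose domain tags overlap most with the problem."""
    scored = []
    for agent, tags in registry.items():
        overlap = len(tags & problem_tags)
        if overlap:
            scored.append((overlap, agent))
    # Highest overlap first; ties broken alphabetically for determinism.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [agent for _, agent in scored[:max_size]]


registry = {
    "astrophysics": {"orbits", "radiation"},
    "climate science": {"atmosphere", "radiation", "oceans"},
    "economics": {"markets"},
    "materials engineering": {"radiation", "alloys"},
}
council = assemble_council(registry, {"radiation", "atmosphere"}, max_size=2)
print(council)
```

A production orchestrator would likely weigh agent quality and cost as well; tag overlap is only the simplest selection signal.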

8. Holographic Knowledge Visualization System (HKVS)

The HKVS presents AI analysis results as interactive holographic structures within the AR environment.

Visualization formats include:

• 3D system models

• simulation outputs

• knowledge graphs

• comparative hypothesis maps

The user can interact with these objects in real time.

9. Logical Validation and Data Mining Engine (LVDME)

A central component of Human-X is the scientific validation engine.

This system continuously evaluates hypotheses using:

• statistical analysis

• probabilistic modeling

• historical dataset comparison

• logical consistency testing

Contradictory or unsupported models are flagged or eliminated.

This process produces iteratively optimized conclusions.

10. Data Acquisition Network

The Human-X system accesses global knowledge sources.

Examples

• scientific publication databases

• satellite data networks

• financial data streams

• climate monitoring systems

• engineering datasets

• government open data platforms

The system continuously performs automated knowledge ingestion and indexing.

11. Communication Infrastructure

Primary communication channel: 5G / future 6G networks

Backup communication: low-orbit satellite networks

Data encryption: quantum-resistant cryptographic protocols

Latency target: <30 ms for AI cloud interaction

12. Power System

Energy source: high-density lithium solid-state batteries

Battery capacity: 600–800 Wh

Operational endurance: 3–4 hours continuous operation

Hot-swap battery modules supported.
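The capacity and endurance figures together imply an average power budget for the whole suit. The short calculation below derives it, assuming the full rated capacity is usable (a simplification, since real packs keep a reserve).

```python
capacity_wh = (600, 800)   # battery capacity range from the specification
endurance_h = (3, 4)       # continuous operation range from the specification

# Lowest draw: smallest pack lasting the longest; highest draw: the reverse.
min_draw_w = capacity_wh[0] / endurance_h[1]
max_draw_w = capacity_wh[1] / endurance_h[0]
print(f"average draw: {min_draw_w:.0f}-{max_draw_w:.0f} W")   # 150-267 W
```

An average draw of roughly 150–267 W has to cover compute, display, sensors, communications, and cooling, and nearly all of it ends up as heat for the thermal system to remove.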

13. Thermal Management

Distributed heat dissipation system.

Components

• heat pipes integrated in frame

• micro-fan cooling modules

• graphene heat spreaders

Maximum external surface temperature during operation: <45°C

14. Cybersecurity Architecture

Human-X incorporates multiple layers of security.

Security layers

• encrypted communication channels

• secure hardware enclaves

• AI model authentication

• biometric identity verification

Threat model includes

• AI manipulation

• data poisoning

• command spoofing

15. Operational Workflow

Step 1: User formulates analytical objective.

Step 2: AI network decomposes the problem.

Step 3: Domain-specific AI agents analyze subcomponents.

Step 4: Data mining engine retrieves relevant datasets.

Step 5: Simulation models are generated.

Step 6: Logical validation engine filters contradictions.

Step 7: Results are presented through AR visualization.

Step 8: User performs final decision synthesis.

16. Performance Targets

| Parameter | Target |
|---|---|
| AR latency | <10 ms |
| AI response time | <2 seconds |
| Edge compute | 200–300 TOPS |
| AI agents | ≥1000 |
| Data throughput | >5 Gbps |
| Battery life | 3–4 hours |

17. Technology Readiness Level (TRL)

Current feasibility estimate: TRL 3–4

Proof-of-concept architecture possible with current technologies.

Required advances

• ultra-light edge AI chips

• improved AR optics

• distributed AI orchestration frameworks

• wearable power systems

Expected TRL progression

Prototype stage: 3–5 years

Operational system: 7–10 years

18. Strategic Impact

The Human-X architecture introduces a new technological category:

Hybrid Human–AI Cognitive Systems

Potential long-term impacts include

• acceleration of scientific discovery

• improved strategic decision making

• enhanced problem-solving capacity for complex global challenges

The system could redefine the human role in high-complexity knowledge environments.

Human-X Beta BioSuit

Startup Roadmap, Investment Model & Hardware Architecture

SpaceArch Advanced Hybrid Intelligence Systems

1. Strategic Vision

The Human-X Beta BioSuit represents the emergence of a new technological sector:

Human–AI Hybrid Cognitive Augmentation Systems

This sector integrates:

- wearable computing

- augmented reality

- distributed AI coprocessing

- edge AI hardware

- neuro-adaptive interfaces

The Human-X platform transforms a human operator into a Hybrid Cognitive Command Node, capable of coordinating 1,000 or more specialized AI systems in real time.

The long-term objective is to establish SpaceArch as a global pioneer in human-AI augmentation technologies, similar to how:

- Apple created the personal computer ecosystem

- Tesla pioneered electric mobility platforms

- NVIDIA built the AI computing infrastructure

Human-X would define the human-AI cognitive interface industry.

2. Market Opportunity

The BioSuit concept intersects several high-growth markets.

| Sector | Market Size |
|---|---|
| AR / XR systems | $300B |
| AI infrastructure | $2T |
| Wearable computing | $150B |
| Defense technology | $700B |
| Space exploration | $1T+ |

Potential addressable market:

$3–5 trillion over the next two decades.

Early adopters are expected to be:

- defense agencies

- aerospace organizations

- advanced research labs

- engineering corporations

- AI research centers

Later expansion includes enterprise and professional markets.

3. Product Roadmap

Phase 1 — Software Prototype (Year 1)

Objective

Develop the AI Copilot + AR interface without full suit hardware.

Components:

- AR headset integration

- AI orchestration software

- multi-AI collaboration interface

- holographic knowledge dashboards

Hardware platform

Existing XR headsets.

Estimated budget

$5–8M

Outcome

Proof-of-concept hybrid intelligence interface.

Phase 2 — Beta Wearable System (Years 2-3)

Development of the first BioSuit prototype.

Components:

- lightweight exoskeletal frame

- distributed edge processors

- gesture control modules

- advanced AR visor

Prototype units

10–20 suits.

Estimated budget

$30–50M

Outcome

Operational Human-AI hybrid workstation.

Phase 3 — Alpha Production Platform (Years 4-5)

Industrialization of the system.

Develop:

- custom AI chips

- optimized power systems

- full holographic AI assembly interface

- secure cloud infrastructure

Production

500–1000 units.

Estimated budget

$120–200M

Target customers

- research institutions

- aerospace agencies

- advanced engineering firms.

Phase 4 — Mass Market Expansion (Years 6-10)

Development of Human-X Lite.

Features

- simplified AR interface

- smaller AI network

- enterprise productivity tools.

Potential markets

- engineers

- scientists

- analysts

- surgeons

- architects.

Projected unit production

100,000+ systems.

4. Investment Model

Seed Round

Goal

Develop AI orchestration software.

Capital required

$10M

Use of funds

- software architecture

- AI integration

- early prototypes.

Valuation estimate

$40M pre-money.

Series A

Goal

Build full BioSuit prototype.

Capital required

$50–70M

Use of funds

- wearable hardware engineering

- distributed edge computing

- advanced AR interface.

Estimated valuation

$250–400M.

Series B

Goal

Industrial production.

Capital required

$200–300M

Use of funds

- manufacturing infrastructure

- custom AI hardware

- cloud AI platform.

Estimated valuation

$1–2B.

5. Revenue Model

Revenue streams include:

Hardware Sales

Human-X BioSuit units.

Estimated price

$50,000 – $120,000 per unit.

AI Cloud Subscription

Monthly subscription to AI coprocessing network.

Enterprise plan

$1,000 – $5,000 / month per user.

Domain AI Modules

Specialized AI packages.

Examples

- climate modeling

- engineering design

- biotech research

Subscription

$200–$1000 per module.

Data Intelligence Services

Large corporations may use the system for strategic analysis.

Consulting + licensing.

6. Competitive Landscape

No direct equivalent currently exists.

Closest technologies:

| Company | Area |
|---|---|
| Microsoft | AR systems |
| Apple | spatial computing |
| NVIDIA | AI hardware |
| Palantir | data intelligence |
| Neuralink | neural interfaces |

Human-X combines aspects of all these sectors.

7. Hardware Architecture Diagram (Conceptual)

Below is the conceptual architecture of the BioSuit system.

CLOUD AI NETWORK (1000+ Specialized AIs)

|

AI ORCHESTRATION HUB

|

Secure Data Link

|

EDGE PROCESSING (Distributed AI Chips)

|

BIO-SUIT SYSTEM

- AR visor + holographic display

- Iris tracking sensors

- Voice / gesture control

- Exoskeleton frame

- Edge processing modules

- Battery system

|

HUMAN OPERATOR (Biological Brain)

|

HYBRID COGNITIVE SYSTEM

8. Hardware Component Map

Head Module

Components

- AR optical engine

- iris tracking cameras

- audio interface

- neural inference processors

Function

Primary human-AI interaction interface.

Chest Module

Components

- central edge processor

- communication hub

- AI coprocessing controller

Function

Suit computational coordination.

Shoulder Modules

Components

- AI accelerator chips

- motion sensors

Function

Gesture detection and sensor fusion.

Forearm Modules

Components

- gesture interface

- tactile feedback actuators

Function

Interaction with holographic objects.

Spine Module

Components

- power system

- cooling network

- data routing backbone.

9. System Performance Targets

| Parameter | Target |
|---|---|
| Edge AI compute | 200–300 TOPS |
| AI cloud nodes | 1000+ |
| Data bandwidth | 5–10 Gbps |
| AR latency | <10 ms |
| Battery life | 3–4 hours |
| System weight | <9 kg |

10. Strategic Significance

Human-X could become one of the first practical human-AI hybrid cognitive systems.

Potential impact:

- accelerate scientific discovery

- enable superhuman analytical capacity

- redefine professional productivity

- create a new class of AI-enhanced professionals.

Long term, systems like Human-X may become the standard interface between humans and artificial intelligence ecosystems.

11. Strategic Fit with Our Vision

Earlier concept work on:

- AGI hybridization

- external digital neocortex

- Human-X concept

- AI neural networks

- multidisciplinary AI assemblies

aligns directly with this architecture.

Human-X is essentially the physical interface layer of the Hybrid Intelligence Civilization model described in that earlier work.

Human-X Beta BioSuit

Dual-Market Strategy (Startup + Defense + NASA)

Prototype Cost Model & DARPA-Style Budget Framework

SpaceArch Advanced Hybrid Intelligence Systems

1. Strategic Positioning

The Human-X Beta BioSuit should not be developed as a conventional consumer technology startup.

The optimal commercialization path is a dual-market strategy, combining:

1️⃣ Defense & strategic research sector

2️⃣ Civilian scientific and industrial sector

This approach mirrors the historical development of many foundational technologies:

| Technology | Initial Market | Civilian Expansion |
|---|---|---|
| Internet | DARPA | Global commercial internet |
| GPS | US DoD | Civil navigation |
| Drones | Military | Logistics & photography |
| Space launch | NASA/DoD | SpaceX commercial space |

The Human-X system follows the same pattern:

high-complexity strategic systems first → professional markets later → long-term mass adoption.

2. Market Segmentation

2.1 Defense / Strategic Research Market

Primary buyers:

• DARPA

• US Department of Defense

• NATO research agencies

• advanced military R&D divisions

• intelligence analysis centers

Use Cases

Strategic decision analysis

Hybrid human-AI systems capable of analyzing massive geopolitical datasets.

Mission planning

Rapid modeling of complex operational scenarios.

Scientific research acceleration

Multidisciplinary problem solving for advanced defense technologies.

Space defense operations

Integration with orbital monitoring and space situational awareness.

2.2 NASA / Space Agency Market

Potential clients:

• NASA

• ESA

• JAXA

• private space companies

Applications

Astronaut cognitive augmentation

Support for mission planning and spacecraft diagnostics.

Scientific data analysis

Processing planetary science and astrophysics datasets.

Deep space missions

Hybrid human-AI research environments for long-duration exploration.

Planetary colonization planning

Modeling life-support systems and habitat engineering.

2.3 Industrial & Research Sector

Target organizations:

• advanced engineering firms

• energy research labs

• biotech companies

• climate science institutes

• aerospace manufacturers

Applications

• complex system modeling

• industrial design optimization

• large-scale environmental analysis

• materials science research.

3. Commercial Strategy

Phase 1 — Strategic Demonstrator

Objective:

Develop a functional prototype demonstrating hybrid human-AI cognition.

Target partners:

• DARPA innovation programs

• NASA advanced systems divisions

• university research laboratories.

Revenue model:

government research grants + technology demonstration contracts.

Phase 2 — Professional Systems

Develop Human-X Research Edition.

Target clients:

• national laboratories

• aerospace companies

• scientific research institutions.

Revenue model:

hardware sales + AI cloud subscription.

Phase 3 — Enterprise Systems

Develop Human-X Professional Edition.

Target clients:

• engineers

• architects

• financial analysts

• strategic consulting firms.

Revenue model:

enterprise productivity platform.

4. Institutional Funding Opportunities

Several programs are aligned with Human-X.

DARPA

Programs related to:

• human-machine teaming

• augmented cognition

• advanced AI collaboration.

Funding range:

$5M – $50M per program.

NASA

Relevant initiatives:

• advanced astronaut tools

• deep space mission support systems

• digital astronaut research.

Funding range:

$3M – $30M.

NSF / Science Agencies

Research areas:

• human-AI collaboration

• augmented intelligence systems.

Funding range:

$2M – $15M.

5. Revenue Potential

Projected early clients:

| Client Type | Units | Price |
|---|---|---|
| Defense labs | 50 | $120k |
| NASA programs | 30 | $120k |
| Research universities | 100 | $70k |

Potential early market revenue:

$15M – $25M

Before large-scale commercialization.
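The hardware portion of this estimate can be reproduced directly from the unit counts and prices in the table. The sketch covers hardware sales only; subscriptions, grants, and services are not included.

```python
# (client type, units, unit price in USD) from the early-client projection
early_clients = [
    ("Defense labs", 50, 120_000),
    ("NASA programs", 30, 120_000),
    ("Research universities", 100, 70_000),
]

hardware_revenue = sum(units * price for _, units, price in early_clients)
print(f"${hardware_revenue / 1e6:.1f}M")   # $16.6M
```

The result, $16.6M, falls toward the lower end of the stated $15M – $25M range, which suggests the upper end depends on revenue beyond unit sales.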

6. Prototype Development Cost Model

(DARPA-Style Budget)

Phase 1 — Concept Demonstrator

Duration

12 months

Goal

AI orchestration software + AR interface.

Budget

| Category | Cost |
|---|---|
| Software engineering | $2.5M |
| AI architecture | $1.5M |
| AR interface development | $1.2M |
| Data infrastructure | $800k |
| Testing | $500k |
| Total | $6.5M |

Phase 2 — Hardware Prototype

Duration

24 months

Goal

first wearable BioSuit prototype.

Budget

| Category | Cost |
|---|---|
| Exoskeleton engineering | $8M |
| Edge AI computing hardware | $6M |
| AR optical system | $5M |
| Power system development | $3M |
| Sensors & biometric interface | $4M |
| Integration testing | $4M |
| Total | $30M |

Phase 3 — Beta Testing

Duration

12 months

Goal

operational testing.

Budget

| Category | Cost |
|---|---|
| Prototype manufacturing | $6M |
| Field testing | $4M |
| Software refinement | $5M |
| Cybersecurity architecture | $3M |
| Total | $18M |

Total Prototype Program Cost

| Phase | Cost |
|---|---|
| Phase 1 | $6.5M |
| Phase 2 | $30M |
| Phase 3 | $18M |
| Total program | $54.5M |

This is consistent with DARPA-scale advanced technology programs.
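The phase totals and the program total can be verified by summing the line items from the three budgets. Amounts are entered in thousands of USD to keep the arithmetic exact.

```python
# Line items in thousands of USD, copied from the three phase budgets.
phases = {
    "Phase 1": [2500, 1500, 1200, 800, 500],
    "Phase 2": [8000, 6000, 5000, 3000, 4000, 4000],
    "Phase 3": [6000, 4000, 5000, 3000],
}

phase_totals = {name: sum(items) for name, items in phases.items()}
program_total = sum(phase_totals.values())

for name, total in phase_totals.items():
    print(f"{name}: ${total / 1000:g}M")
print(f"Program: ${program_total / 1000:g}M")
```

The sums come to $6.5M, $30M, and $18M, for a program total of $54.5M, matching the figures stated in the budget.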

7. Manufacturing Cost Model

Estimated cost per prototype.

| Component | Cost |
|---|---|
| AR visor system | $4,000 |
| AI processors | $6,000 |
| exoskeleton frame | $3,000 |
| sensor systems | $2,500 |
| battery modules | $1,500 |
| cooling & electronics | $2,000 |

Manufacturing cost per unit: $19,000 – $25,000

Commercial Price

Expected price range

$80,000 – $120,000

High-margin early market.
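To put a number on "high-margin", the gross margin per unit can be bounded from the cost and price ranges. This counts manufacturing cost only; R&D, support, and sales costs are outside the calculation.

```python
unit_cost = (19_000, 25_000)     # USD, manufacturing cost range
unit_price = (80_000, 120_000)   # USD, expected commercial price range

# Worst case pairs the highest cost with the lowest price; best case the reverse.
worst_margin = (unit_price[0] - unit_cost[1]) / unit_price[0]
best_margin = (unit_price[1] - unit_cost[0]) / unit_price[1]
print(f"gross margin: {worst_margin:.0%} to {best_margin:.0%}")
```

On these figures the per-unit gross margin lies between about 69% and 84%.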

8. Long-Term Market Evolution

Human-X could evolve into three product tiers.

| Tier | Price | Target |
|---|---|---|
| Human-X Research | $120k+ | labs and defense agencies |
| Human-X Professional | $30k – $50k | engineers, scientists, analysts |
| Human-X Lite | $5k – $10k | advanced productivity tools |

9. Strategic Importance

The Human-X concept introduces a new technological category:

Hybrid Intelligence Platforms

The long-term impact may be comparable to:

• personal computers

• smartphones

• cloud computing.

It represents the first systematic interface between humans and large AI assemblies.

10. Strategic Fit with Prior Work

This architecture aligns closely with previously developed concepts:

• Human-X

• hybrid intelligence systems

• AI neural networks

• external digital neocortex.

Human-X is effectively the physical operational interface for the hybrid intelligence model explored in that work.

SpaceArch Human-X Program

Iron-Man-Level Exosuit Evolution Path & Scientific Framework of Human-AI Hybrid Cognition

PART I

SpaceArch Iron-Man-Level Exosuit Evolution Path

The Human-X BioSuit can be understood as the first stage of a long-term technological trajectory toward a fully integrated human-AI exosuit system combining:

• cognitive augmentation

• mobility enhancement

• environmental autonomy

• aerial capability

• distributed artificial intelligence coprocessing

This trajectory mirrors historical technological evolution:

| Technology | Stage 1 | Stage 2 | Stage 3 |
|---|---|---|---|
| Computers | Mainframes | Personal computers | Mobile devices |
| Aviation | Balloons | Airplanes | Hypersonic aircraft |
| Robotics | Industrial robots | Service robots | Autonomous humanoids |

Human-AI augmentation will follow a similar multi-stage evolution.

Stage 1 — Human-X Beta

Cognitive Augmentation Suit

Capabilities:

• external digital neocortex

• AR visualization

• AI coprocessing with 1000+ agents

• distributed edge computing

• gesture and voice interface

Primary role:

Hybrid cognitive workstation

Comparable historical analogy:

The first personal computer of hybrid intelligence systems.

Stage 2 — Human-X Gamma

Neuro-Adaptive Suit

Enhancements:

• neural interface sensors (EEG + EMG)

• cognitive intention detection

• predictive AI assistance

• brain-state monitoring

Capabilities:

• faster command execution

• partial thought-to-action control

• adaptive AI behavior

This stage introduces true human-machine cognitive coupling.

Stage 3 — Human-X Delta

Advanced Robotic Exoskeleton

Enhancements:

• powered exoskeleton actuators

• strength amplification

• enhanced endurance

• load-bearing support

Capabilities:

• carrying heavy equipment

• extreme environment mobility

• disaster response operations

Applications:

• construction

• emergency response

• space missions.

Stage 4 — Human-X AeroSuit

Personal Flight Exosuit

Integration with aerial propulsion systems.

Possible propulsion technologies:

• ducted electric fans

• micro-turbine jets

• hybrid drone propulsion

Capabilities:

• vertical takeoff and landing (VTOL)

• autonomous stabilization

• urban aerial mobility

Key systems:

• thrust-vectoring control

• AI flight stabilization

• collision avoidance sensors.

Stage 5 — Human-X OmniSuit

Full Environmental Autonomy System

Capabilities:

• atmospheric flight

• underwater operation

• extreme climate protection

• long endurance energy systems

Possible technologies:

• hydrogen fuel cells

• next-generation batteries

• graphene structural materials.

Long-Term Vision

A fully developed system could combine:

• hybrid intelligence

• robotic augmentation

• aerial mobility

• environmental protection

into a personal technological ecosystem.

This concept represents the emergence of human-machine symbiotic systems.

PART II

Scientific Framework of Human-AI Hybrid Cognition

Abstract

This paper proposes a theoretical framework describing the emergence of Hybrid Cognitive Systems (HCS) created through real-time integration between biological intelligence and artificial intelligence networks.

The framework introduces the concept of the External Digital Neocortex (EDN) and describes how distributed artificial intelligence can function as an extension of human cognitive architecture.

Hybrid cognition represents a new class of intelligence system in which biological reasoning and machine analysis operate as a unified problem-solving entity.

1. Introduction

Human intelligence evolved to process information through a biological neural network consisting of approximately 86 billion neurons.

Despite its remarkable capabilities, biological cognition has inherent limitations:

• limited working memory

• slow numerical processing

• restricted parallel analysis capacity

• bounded access to global information

Artificial intelligence systems, by contrast, exhibit strengths in:

• large-scale data processing

• pattern recognition across massive datasets

• rapid simulation and modeling.

The integration of these two systems creates the possibility of Hybrid Intelligence, where biological cognition and artificial computation complement each other.

2. The External Digital Neocortex

The External Digital Neocortex (EDN) is defined as a distributed artificial cognitive layer connected to a human user through real-time interfaces.

This architecture mirrors the functional role of the biological neocortex:

| Biological Neocortex | External Digital Neocortex |
|---|---|
| Pattern recognition | AI machine learning |
| Abstract reasoning | Simulation engines |
| Language processing | Natural language models |
| Knowledge integration | Global data networks |

The EDN extends human cognition beyond biological limitations.

3. Human-AI Coprocessing Model

Hybrid cognition operates through cognitive coprocessing.

Workflow:

1. Human formulates the problem or hypothesis

2. AI network decomposes the problem structure

3. Specialized AI agents analyze subdomains

4. Results are synthesized into coherent models

5. Human evaluates the final conclusions.

This process creates a distributed cognitive system.
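The five workflow steps can be sketched as a small pipeline. This is a toy illustration under stated assumptions, not the Human-X implementation: every name here (`decompose`, `run_agents`, `synthesize`, `coprocess`) is hypothetical, decomposition is reduced to splitting text on semicolons, and the "agents" are stand-in functions selected by keyword.

```python
from typing import Callable, Dict, List

# Hypothetical alias for this sketch: an agent maps a task description to a finding.
Agent = Callable[[str], str]


def decompose(problem: str) -> List[str]:
    """Step 2: split a problem into subdomain tasks (placeholder: split on ';')."""
    return [part.strip() for part in problem.split(";") if part.strip()]


def run_agents(tasks: List[str], agents: Dict[str, Agent]) -> Dict[str, str]:
    """Step 3: route each task to the first agent whose domain keyword appears in it."""
    results = {}
    for task in tasks:
        for domain, agent in agents.items():
            if domain in task.lower():
                results[task] = agent(task)
                break
        else:
            results[task] = "unassigned"  # no specialized agent matched
    return results


def synthesize(results: Dict[str, str]) -> str:
    """Step 4: merge agent outputs into one report for the human."""
    return "\n".join(f"{task}: {finding}" for task, finding in results.items())


def coprocess(problem: str, agents: Dict[str, Agent],
              human_review: Callable[[str], bool]) -> Dict[str, object]:
    """Steps 1-5: the human supplies the problem and makes the final call."""
    report = synthesize(run_agents(decompose(problem), agents))
    return {"report": report, "accepted": human_review(report)}


# Toy agents standing in for specialized AI models.
agents = {
    "thermal": lambda task: "thermal model ok",
    "structural": lambda task: "structural model ok",
}
outcome = coprocess(
    "thermal load of visor; structural mass budget; market size",
    agents,
    human_review=lambda report: "unassigned" not in report,
)
print(outcome["report"])
print("accepted:", outcome["accepted"])
```

The final decision stays with the `human_review` callback, reflecting the principle that the human evaluates the conclusions. In this run the reviewer rejects the report because one task found no matching agent.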

4. AI Knowledge Assembly Model

In the Human-X architecture, the AI network operates as a virtual scientific council composed of domain-specific intelligence agents.

This structure resembles historical models of collective reasoning:

• scientific peer review

• academic conferences

• expert panels

However, AI agents operate orders of magnitude faster.

5. Contradiction Elimination and Logical Filtering

A key function of hybrid cognition is logical contradiction filtering.

The AI network performs:

• cross-model comparison

• probabilistic validation

• data-consistency checks

Models that violate scientific constraints are eliminated.

This process produces progressively optimized analytical outcomes.
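One simple way to picture the elimination step is as a filter that keeps only candidate models satisfying every constraint. The sketch below is an assumption-laden illustration: the dictionary representation of a model, the `filter_models` helper, and the two example constraints are all hypothetical and chosen only to make the idea concrete.

```python
from typing import Callable, Dict, List

# A candidate model is represented here as a dict of predicted quantities.
Model = Dict[str, float]
Constraint = Callable[[Model], bool]


def filter_models(models: List[Model], constraints: List[Constraint]) -> List[Model]:
    """Keep only the models that satisfy every constraint."""
    return [m for m in models if all(check(m) for check in constraints)]


# Illustrative constraints (assumptions for this sketch).
constraints = [
    lambda m: 0.0 <= m["efficiency"] <= 1.0,  # efficiency cannot exceed 100%
    lambda m: m["mass_kg"] > 0.0,             # mass must be positive
]

candidates = [
    {"id": 1, "efficiency": 0.82, "mass_kg": 35.0},
    {"id": 2, "efficiency": 1.20, "mass_kg": 30.0},  # violates the efficiency bound
    {"id": 3, "efficiency": 0.75, "mass_kg": -5.0},  # violates the mass bound
]

surviving = filter_models(candidates, constraints)
print([m["id"] for m in surviving])
```

Only the first candidate survives. Cross-model comparison and probabilistic validation would need richer machinery than a hard pass/fail check; this sketch covers only the constraint-violation case.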

6. Hybrid Intelligence Equation

The conceptual structure of hybrid cognition can be represented as:

Hybrid Intelligence = Human intuition + AI computational analysis + distributed data mining + logical validation systems.

This combination creates an intelligence architecture capable of solving problems beyond the capacity of either system independently.

7. Cognitive Bandwidth Expansion

Human cognitive bandwidth is limited by:

• working memory capacity

• attention constraints

• serial reasoning processes.

Hybrid cognition expands bandwidth by delegating large analytical tasks to AI systems while preserving human strategic judgment.

8. Human Role in Hybrid Systems

The human component remains essential for:

• ethical judgment

• creative insight

• strategic decision making

• hypothesis generation.

Artificial intelligence performs analytical tasks but does not replace human agency.

9. Applications

Hybrid cognition systems may transform multiple fields:

Scientific research

Acceleration of discovery.

Climate science

Complex planetary modeling.

Medicine

Large-scale biomedical data analysis.

Engineering

Design optimization.

Strategic governance

Complex decision support systems.

10. Civilizational Implications

The emergence of hybrid intelligence may represent a major evolutionary transition in human technological development.

Potential consequences include:

• exponential acceleration of knowledge production

• new forms of collaborative intelligence

• transformation of professional roles.

Hybrid intelligence may eventually become a standard interface between humans and advanced computational systems.

11. Conclusion

The Human-X architecture represents an early prototype of Hybrid Cognitive Systems.

By integrating biological intelligence with distributed artificial intelligence networks, it becomes possible to create a new class of cognitive entities capable of addressing complex global challenges.

The development of such systems will likely define a new technological frontier in the relationship between humans and machines.

SpaceArch AeroSuit X-1

Unified Architecture: Human-X BioSuit + Drone Exosuit Personal Flight System

1. System Concept

The SpaceArch AeroSuit X-1 is a next-generation hybrid human-AI aerial exosuit system that integrates:

- Human-X cognitive augmentation architecture

- AI-copiloted flight stabilization systems

- Drone-class electric propulsion modules

- augmented reality flight interface

The objective is to create a portable personal flight platform in which a human operator acts as the strategic controller, while an AI copilot performs real-time stabilization, navigation, and safety management.

Unlike early jet suits or experimental personal flight systems, AeroSuit X-1 is designed around the principle of hybrid cognition-assisted flight.

This means the human pilot does not manually control thrust in real time; instead, the system uses AI stabilization and autopilot logic, similar to modern drones and fighter aircraft fly-by-wire systems.

2. Core System Philosophy

The AeroSuit concept relies on three complementary intelligence layers:

1. Human Pilot

Strategic decision making.

2. AI Copilot

Flight stabilization, obstacle detection, thrust balancing.

3. Distributed AI Knowledge Network

Weather analysis, route optimization, mission planning.

This architecture transforms the pilot into a hybrid human-AI flight unit.

3. System Architecture Overview

The AeroSuit X-1 integrates five primary subsystems:

- Human-X Cognitive Interface

- Drone Propulsion Platform

- Flight Stabilization AI

- Environmental Awareness Sensors

- Energy and Power System

These operate through a unified AI flight control architecture.

4. Human-X Cognitive Interface

The pilot interacts with the system through the Human-X BioSuit interface.

AR Flight Visor

Provides real-time visualization of:

- flight altitude

- speed

- thrust balance

- navigation map

- hazard detection alerts

The interface functions similarly to fighter jet helmet displays.

Digital Iris Control

Eye tracking allows the pilot to select commands.

Examples:

Look at a waypoint → confirm navigation target.

Look at landing zone → initiate landing sequence.

Voice Command Interface

Examples:

“Ascend 30 meters”

“Return to base”

“Hover mode”

Commands are interpreted by the AI copilot.
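A minimal sketch of how transcribed phrases might be turned into structured intents is shown below. It assumes speech has already been converted to text, and it covers only the three example phrases; the grammar, the `parse_command` function, and the intent field names are hypothetical.

```python
import re
from typing import Dict, Optional

# Hypothetical command grammar covering only the three example phrases above.
ASCEND_PATTERN = re.compile(r"^ascend (\d+(?:\.\d+)?) meters?$")


def parse_command(utterance: str) -> Optional[Dict[str, object]]:
    """Map a transcribed phrase to a structured intent, or None if unrecognized."""
    text = utterance.strip().lower()
    match = ASCEND_PATTERN.match(text)
    if match:
        return {"action": "change_altitude", "delta_m": float(match.group(1))}
    if text == "return to base":
        return {"action": "return_to_base"}
    if text == "hover mode":
        return {"action": "hover"}
    return None  # unknown phrases are rejected rather than guessed


print(parse_command("Ascend 30 meters"))
print(parse_command("Hover mode"))
print(parse_command("Do a barrel roll"))
```

Rejecting unrecognized phrases instead of guessing is a deliberate choice in this sketch, since a misread flight command is more costly than asking the pilot to repeat it.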

5. Drone Propulsion Architecture

The propulsion system uses high-efficiency electric ducted fan modules.

Possible configurations:

Quad Rotor System

4 propulsion units mounted:

- two shoulder mounts

- two backpack mounts

Advantages:

- simplicity

- stable control

- redundancy.

Hexa Rotor System

6 propulsion units distributed around the body.

Advantages:

- greater stability

- improved thrust distribution.

Thrust Requirements

Estimated total thrust required:

| Parameter | Value |
|---|---|
| Pilot + suit mass | 100 kg |
| Safety margin | 1.5× |
| Required thrust | 150 kgf |

Each rotor must generate approximately:

25–40 kgf thrust depending on configuration.
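The thrust figures above follow from simple arithmetic, sketched below. The function names are hypothetical, the load is assumed to be shared evenly across rotors, and the one-rotor-out check looks only at total thrust; it says nothing about torque balance or attitude control after a failure.

```python
def required_thrust_kgf(total_mass_kg: float, safety_margin: float = 1.5) -> float:
    """Total static thrust needed, in kilogram-force, to lift the mass with margin."""
    return total_mass_kg * safety_margin


def thrust_per_rotor_kgf(total_thrust_kgf: float, rotor_count: int) -> float:
    """Thrust each rotor must supply if the load is shared evenly."""
    return total_thrust_kgf / rotor_count


def lifts_with_one_rotor_out(total_mass_kg: float, per_rotor_kgf: float,
                             rotor_count: int) -> bool:
    """Check only total thrust after one failure; torque balance is ignored."""
    return per_rotor_kgf * (rotor_count - 1) >= total_mass_kg


total = required_thrust_kgf(100.0)  # 100 kg pilot + suit, 1.5x margin
for rotors in (4, 6):
    per_rotor = thrust_per_rotor_kgf(total, rotors)
    ok = lifts_with_one_rotor_out(100.0, per_rotor, rotors)
    print(f"{rotors} rotors: {per_rotor:.1f} kgf each, one-rotor-out lift: {ok}")
```

This gives 37.5 kgf per rotor for the quad layout and 25 kgf for the hexa layout, which matches the 25–40 kgf range. If the upper-bound performance targets are combined instead (a system under 40 kg carrying a 120 kg pilot, about 160 kg in total), the same function returns 240 kgf.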

6. Flight Stabilization AI

Manual control of personal flight systems is extremely difficult.

Therefore, AeroSuit uses AI stabilization algorithms derived from drone flight systems.

The AI performs:

- thrust balancing

- automatic attitude correction

- turbulence compensation

- obstacle avoidance.

This allows the pilot to focus on navigation and mission objectives.
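Thrust balancing and attitude correction are commonly built from a feedback controller per axis plus a "mixer" that spreads the corrections over the rotors. The sketch below shows that general pattern for a four-rotor layout; it is not the AeroSuit control law. The gains, the sign convention, and the names `PD` and `mix_quad` are assumptions, and yaw control, thrust limits, and sensor noise are left out.

```python
from dataclasses import dataclass


@dataclass
class PD:
    """Proportional-derivative controller for one attitude axis."""
    kp: float
    kd: float

    def correction(self, angle_error: float, angular_rate: float) -> float:
        # Push toward the target angle while damping the current rotation.
        return self.kp * angle_error - self.kd * angular_rate


def mix_quad(base: float, roll_cmd: float, pitch_cmd: float) -> dict:
    """Distribute corrections over four rotors; the sum always equals 4 * base.

    Convention (an assumption): positive roll_cmd raises the left side,
    positive pitch_cmd raises the front.
    """
    return {
        "front_left": base + roll_cmd + pitch_cmd,
        "front_right": base - roll_cmd + pitch_cmd,
        "rear_left": base + roll_cmd - pitch_cmd,
        "rear_right": base - roll_cmd - pitch_cmd,
    }


# Illustrative gains, not tuned for any real vehicle.
roll_pd = PD(kp=2.0, kd=0.5)
pitch_pd = PD(kp=2.0, kd=0.5)

# Suit is tilted 0.1 rad left-side-down and level in pitch; target is level flight.
roll_cmd = roll_pd.correction(angle_error=0.1, angular_rate=0.0)
pitch_cmd = pitch_pd.correction(angle_error=0.0, angular_rate=0.0)

# Base of 25 kgf per rotor corresponds to hovering a 100 kg load on four rotors.
thrusts = mix_quad(base=25.0, roll_cmd=roll_cmd, pitch_cmd=pitch_cmd)
print(thrusts)
```

Because the corrections are added on one side and subtracted on the other, total lift stays constant while the left rotors work slightly harder to level the suit.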

7. Environmental Sensor Suite

The suit includes multiple sensors for situational awareness.

Sensors

- LiDAR scanners

- stereo cameras

- radar proximity sensors

- GPS + inertial navigation

- atmospheric sensors.

These sensors feed data to the AI flight controller.

8. Energy System

Energy density is the primary limitation of personal flight systems.

Battery System

High-density lithium battery packs.

Estimated capacity:

6–8 kWh

Estimated endurance:

| Flight Mode | Duration |
|---|---|
| Hover | 15–20 min |
| Cruise | 20–30 min |
| Energy-efficient glide | 30–40 min |
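The capacity and endurance figures can be cross-checked by computing the average power they imply. The sketch below assumes the full pack capacity is usable with no reserve, which is an assumption for illustration; the function names are hypothetical.

```python
def implied_power_kw(capacity_kwh: float, duration_min: float) -> float:
    """Average electrical power if the full capacity is drained over the duration."""
    return capacity_kwh / (duration_min / 60.0)


def endurance_min(capacity_kwh: float, power_kw: float) -> float:
    """Flight time in minutes at a constant average power draw."""
    return capacity_kwh / power_kw * 60.0


low = implied_power_kw(6.0, 15.0)   # smallest pack, shortest hover
high = implied_power_kw(8.0, 20.0)  # largest pack, longest hover
print(f"Implied hover power: {low:.0f} kW and {high:.0f} kW")
print(f"8 kWh at 24 kW: {endurance_min(8.0, 24.0):.0f} min")
```

Both ends of the hover estimate imply an average draw of about 24 kW. Any reserve held back for landing would shorten the usable flight time accordingly.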

Future technologies could extend endurance:

- hydrogen fuel cells

- hybrid turbine generators.

9. Structural Design

The AeroSuit structure includes:

- carbon fiber exoskeleton

- reinforced propulsion mounts

- vibration dampers

- aerodynamic control surfaces.

The design must balance strength, weight, and thermal management.

10. Safety Systems

Safety is the most critical requirement.

Emergency Parachute

Deploys automatically in an emergency when altitude exceeds 30 meters.

Rotor Redundancy

The system can remain airborne even if one rotor fails.

AI Safety Mode

If the pilot becomes incapacitated, the AI initiates:

- automatic landing

- return-to-base protocol.
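The three safety mechanisms can be combined into a single decision rule, sketched below. The 30-meter threshold comes from the text; the priority order, the "more than one failed rotor" trigger for the parachute, and all names are assumptions made for this illustration.

```python
from enum import Enum


class SafetyAction(Enum):
    CONTINUE = "continue"
    AUTO_LAND = "automatic landing"
    DEPLOY_PARACHUTE = "deploy parachute"


PARACHUTE_MIN_ALTITUDE_M = 30.0  # threshold stated above


def choose_safety_action(altitude_m: float, failed_rotors: int,
                         pilot_responsive: bool) -> SafetyAction:
    """Pick a response from the three safety mechanisms described above.

    The priority order and the 'more than one failed rotor' trigger are
    assumptions made for this sketch.
    """
    if failed_rotors > 1:
        # Beyond single-rotor redundancy: the parachute needs enough height.
        if altitude_m > PARACHUTE_MIN_ALTITUDE_M:
            return SafetyAction.DEPLOY_PARACHUTE
        return SafetyAction.AUTO_LAND
    if failed_rotors == 1 or not pilot_responsive:
        return SafetyAction.AUTO_LAND
    return SafetyAction.CONTINUE


print(choose_safety_action(altitude_m=120.0, failed_rotors=2, pilot_responsive=True))
print(choose_safety_action(altitude_m=120.0, failed_rotors=0, pilot_responsive=False))
```

Below the parachute threshold the sketch falls back to a powered automatic landing, since a parachute is assumed to need a minimum height to open.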

11. Performance Targets

| Parameter | Target |
|---|---|
| Maximum speed | 120 km/h |
| Operational altitude | 300–1000 m |
| Endurance | 20–30 min |
| Total system weight | <40 kg |
| Maximum payload | 120 kg pilot mass |

12. Potential Applications

Emergency Response

Rapid access to disaster areas.

Urban Mobility

Short-distance aerial transportation.

Military Reconnaissance

Stealth mobility.

Industrial Inspection

Inspection of infrastructure and energy systems.

Extreme Sports

Personal aerial mobility experiences.

13. Development Roadmap

Phase 1 — AI Flight System Prototype

Develop stabilization software.

Duration

12 months.

Phase 2 — Ground Test Platform

Test propulsion and control systems.

Duration

18 months.

Phase 3 — First Flight Prototype

Human test flights.

Duration

2–3 years.

14. Estimated Development Cost

| Development Stage | Budget |
|---|---|
| AI flight control software | $5M |
| Propulsion R&D | $15M |
| Structural engineering | $8M |
| Prototyping | $10M |
| Testing | $7M |

Total development budget

$45M

15. Strategic Significance

The AeroSuit X-1 concept represents a convergence of multiple technological revolutions:

- human-AI hybrid cognition

- drone propulsion systems

- wearable robotics

- augmented reality interfaces.

Together these systems create the possibility of AI-assisted personal aerial mobility.

The long-term impact could be comparable to the introduction of:

- automobiles

- helicopters

- personal computers.

16. Strategic Fit with SpaceArch

The AeroSuit platform integrates multiple technologies already present in the SpaceArch innovation ecosystem:

• Human-X hybrid intelligence architecture

• drone propulsion research

• AI distributed systems

• augmented reality interfaces.

This convergence creates a potential flagship project capable of positioning SpaceArch as a pioneer in human-AI mobility technologies.

AeroSuit X-1 with magnetic solar-energy satellite charging corridors (LaserSat integration).

Human-X BioSuit

Realistic Technology Logistics Plan (2026–2036)

The Human-X BioSuit concept does not require speculative science fiction technology. The core idea is to assemble and integrate technologies that already exist, combined with several components currently under rapid development.

The system evolves progressively from a cognitive augmentation suit toward a full hybrid human-AI mobility platform.

This roadmap describes a credible development timeline from 2026 to 2036.

Phase 0 — System Pre-Assembly

Year: 2026

Goal:

Create the first functional Human-X BioSuit prototype (non-flight version).

Architecture:

Human operator

+

BioSuit wearable interface

+

AI copilot system

+

biometric sensors

+

AR interface

The first version focuses on cognitive augmentation rather than mobility.

Technologies Already Available

Smart Textile BioSuit

Wearable computational fabrics already exist.

Capabilities include:

• heart-rate monitoring

• respiration monitoring

• posture tracking

• muscle activity sensing (EMG)

• temperature and hydration tracking

Organizations working on this:

• Hexoskin

• Sensoria

• Myant

• MIT Media Lab

Technology readiness level:

TRL 7–8

Meaning: close to commercial deployment.

Haptic Gloves

Haptic gloves are already commercially available.

Example:

SenseGlove Nova

Capabilities:

• finger tracking

• tactile feedback

• force feedback

• gesture control

These devices allow precise interaction with virtual interfaces and AI systems.

TRL:

7–8

AR / XR Glasses

Mixed-reality systems are already operational.

Examples:

• Apple Vision ecosystem

• Meta AR prototypes

• Magic Leap

• Microsoft HoloLens

Capabilities:

• real-time spatial mapping

• digital overlays on the physical world

• gesture interaction

• AI assistant integration

TRL:

7–9

AI Copilot System

Already widely available.

Components include:

• large language models

• multimodal AI

• navigation and data analysis copilots

• engineering and research assistants

This becomes the cognitive engine of the BioSuit.

TRL:

7–9

Result of Phase 0 (2026)

The first Human-X prototype becomes a wearable cognitive augmentation platform.

Use cases include:

• engineering analysis

• scientific research

• drone operation

• robotics tele-control

• decision support

• AI-assisted knowledge processing

No flight capability yet.

Phase I — Cognitive Augmentation Suit

2027–2029

Goal:

Create a true external digital neocortex.

Architecture:

Human brain

→ bioelectrical signals

→ AI coprocessor

→ distributed AI cloud

The system converts human intention into machine-readable commands.

Neural Intent Detection

Currently under development.

Examples:

• Neuralink

• OpenBCI

• NextMind

Capabilities:

• detect neural signals

• interpret cognitive intention

• translate brain signals into digital commands

Estimated maturity:

2028

TRL:

5–6 today.

Gesture Neural Interface

EMG-based control systems are advancing rapidly.

Components include:

• muscle sensors

• motion tracking

• machine-learning interpretation

Gesture-recognition accuracy above 99% has been reported in controlled environments.

TRL:

6–7

Distributed AI Cognitive Network

Human-X connects to a large distributed network of specialized AI systems.

Concept:

AI Assembly

or

Cognitive Agora

Example configuration:

1000 specialized AI agents with postdoctoral-level knowledge.

Functions:

• scientific analysis

• data mining

• logical contradiction filtering

• optimization modeling

This transforms the suit into a hybrid human-AI reasoning platform.

Result of Phase I

Human-X becomes a portable digital exocortex.

Capabilities include:

• complex scientific reasoning

• real-time data synthesis

• multi-disciplinary problem solving

• collaborative AI analysis

Phase II — Soft Exoskeleton Mobility

2028–2031

Goal:

Add physical augmentation.

Existing technologies include:

• HAL exoskeleton

• Ekso Bionics

• Sarcos robotics systems

These systems already allow:

• assisted walking

• load carrying

• rehabilitation mobility

TRL:

6–7

Soft Exoskeleton Concept

Instead of heavy robotic armor, the Human-X system uses:

textile-based exoskeletons

Components:

• artificial muscle fibers

• cable-driven actuators

• lightweight motors

Target weight:

5–10 kg

Functions

• strength amplification (2×)

• fatigue reduction

• posture stabilization

• extended mobility endurance

Phase III — Drone Exosuit Flight System

2030–2033

Goal:

Enable assisted personal flight.

Architecture:

Human-X BioSuit

+

Drone Exosuit frame

Configuration options:

• quadcopter

• hexacopter

• octocopter

Similar systems already exist experimentally:

• Jetson ONE

• multicopter personal vehicles

Main Limitation

Energy density.

Current battery technology remains the primary bottleneck.

Expected Flight Autonomy

2030 estimates:

15–25 minutes of flight.

Phase IV — Autonomous Stability AI

2032–2034

AI manages flight stabilization.

The system controls:

• balance

• wind compensation

• navigation

• energy optimization

The human operator provides high-level commands only.

Phase V — LaserSat Energy Corridors

2034–2036

This stage integrates the SpaceArch LaserSat concept.

Energy infrastructure includes:

• orbital solar satellites

• directed energy transmission

• airborne recharge corridors

Architecture:

LaserSat

↓

energy corridor

↓

AeroSuit charging system

This dramatically extends operational range.

Development Timeline Summary

| Year | Phase | Outcome |
|---|---|---|
| 2026 | Phase 0 | Cognitive BioSuit |
| 2027 | XR + AI | Digital exocortex |
| 2028 | Neural interface | Intent-based control |
| 2029 | Soft exoskeleton | Physical augmentation |
| 2031 | Drone exosuit | Experimental flight |
| 2033 | AI stabilization | Safe aerial mobility |
| 2036 | LaserSat corridors | Large-scale mobility |

Estimated Prototype Budget (Phase I)

| Component | Cost |
|---|---|
| AI architecture | $2M |
| XR interface | $1M |
| Smart textiles | $800k |
| Haptic gloves | $300k |
| Control software | $2M |

Estimated prototype cost:

$6–8 million

Technical Conclusion

The Human-X BioSuit concept does not require unknown physics or speculative technology.

Most components already exist:

• XR systems

• AI copilots

• smart fabrics

• haptic interfaces

• exoskeleton systems

The real challenge is system integration.

That integration layer is precisely the opportunity for a deep-tech startup architecture such as SpaceArch.
